Responsible use in the academe

The proliferation of artificial intelligence (AI) tools such as large language models and chatbots has considerably changed the academic landscape. Teachers have had to adapt on the fly, straddling the line between harnessing these tools for educational gain in the classroom and preventing their potential misuse.

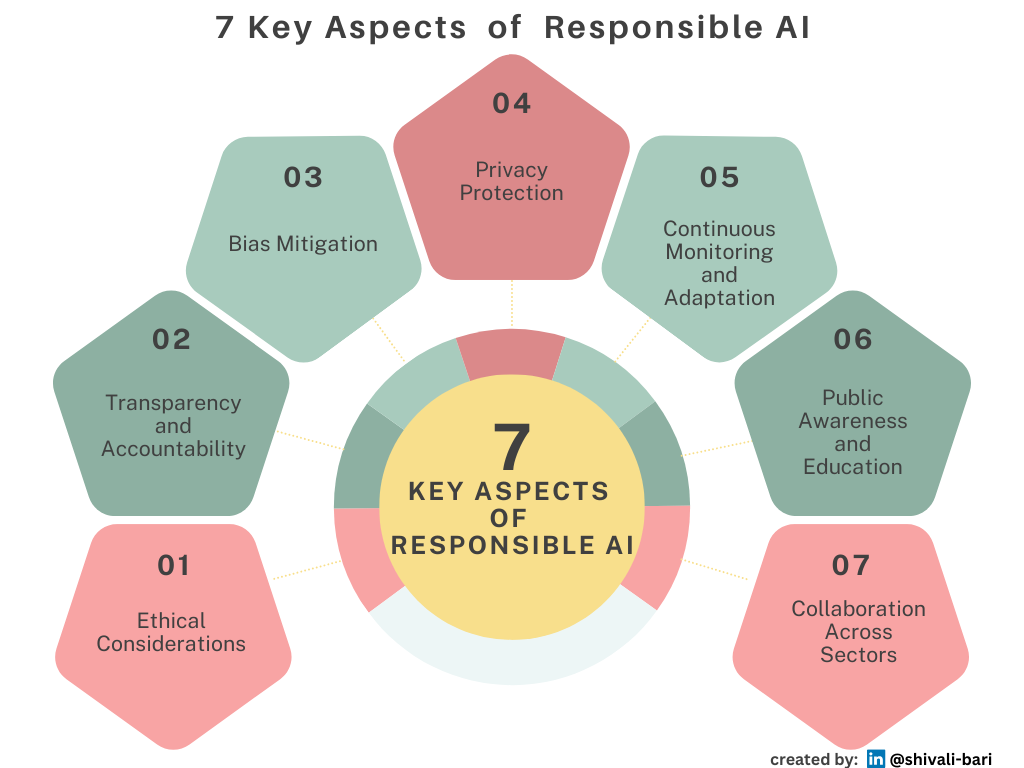

AI’s rapid ascendance has left many ethical gray areas in its wake for teachers and students to navigate. In response, Graidients, a new tool from the Center for Digital Thriving (a research and innovation lab housed at Project Zero), aims to provide a framework for making these ethically unclear areas of generative AI visible to educators in the classroom.

Avoiding plagiarism and misuse

Finding Our Lines

Even with guidance from teachers and schools, gray areas remain when it comes to AI. Graidients aims to make the “lines” we are or are not willing to cross more visible by turning a conversation about acceptable uses of AI tools into a valuable learning experience.

The tool helps educators scaffold a conversation with students about how to use AI to support learning for a given classroom assignment. Teachers pick a specific assignment (the center cites a five-page paper about The Great Gatsby as an example), then ask students to brainstorm how they could use AI to help with that assignment.

The center stressed this type of brainstorming should be “a no judgment zone,” where there are no bad ideas. Once the brainstorm is over, though, students are asked to reflect on those ideas and sort them into five categories based on how they feel. An idea that is “totally fine” sits at one end of the spectrum, and one that “crosses the line” into feeling unethical or unfair sits at the other. In the middle sit three gray-area categories, “mostly OK,” “not really sure,” and “feels sketchy,” which house ideas students aren’t sure about ethically. [1]

While some classrooms may embrace AI fully, other educators are combatting its proliferation and potential academic dishonesty with a return to oral or written exams. The Wall Street Journal recently reported sales of “blue books” for written essays have risen dramatically, while schools and organizations have developed their own guidelines for using AI with academic integrity. The Harvard Graduate School of Education, for example, “encourages responsible experimentation with generative AI tools,” but warns there are “important considerations” regarding information security, data privacy, copyright issues, and trustworthiness of content and its impact on academic integrity. The guidance is part of an overall student policy the school released for the 2025–26 academic year, which notes that individual instructor rules may offer more specific guidance for students in certain contexts. [1]

Accuracy and verification of AI outputs

Accepting AI Edits Without Review: Automated suggestions may misinterpret context or introduce inaccuracies.

Overreliance on AI for Citations: While AI tools can suggest references, it's crucial to verify each source's relevance and accuracy. Blindly accepting AI-generated citations can lead to misrepresentation or inclusion of non-existent sources.[3]

Bias and fairness

AI systems can inherit and even amplify biases present in their training data. This can result in unfair or discriminatory outcomes, particularly in hiring, lending, and law enforcement applications. Addressing bias and ensuring fairness in AI algorithms is a critical ethical concern.

AI in Criminal Justice: The use of AI for predictive policing, risk assessment, and sentencing decisions can perpetuate biases and raise questions about due process and fairness.

Bias in Content Recommendation: AI-driven content recommendation systems can reinforce existing biases and filter bubbles, influencing people’s views and opinions.[4]