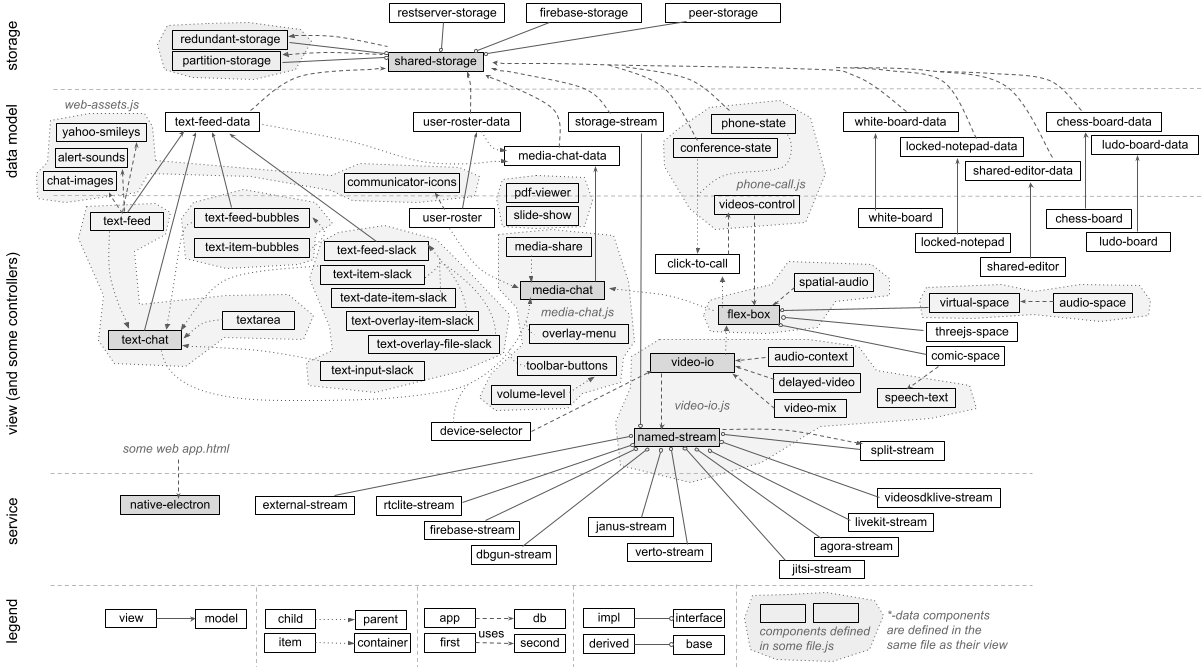

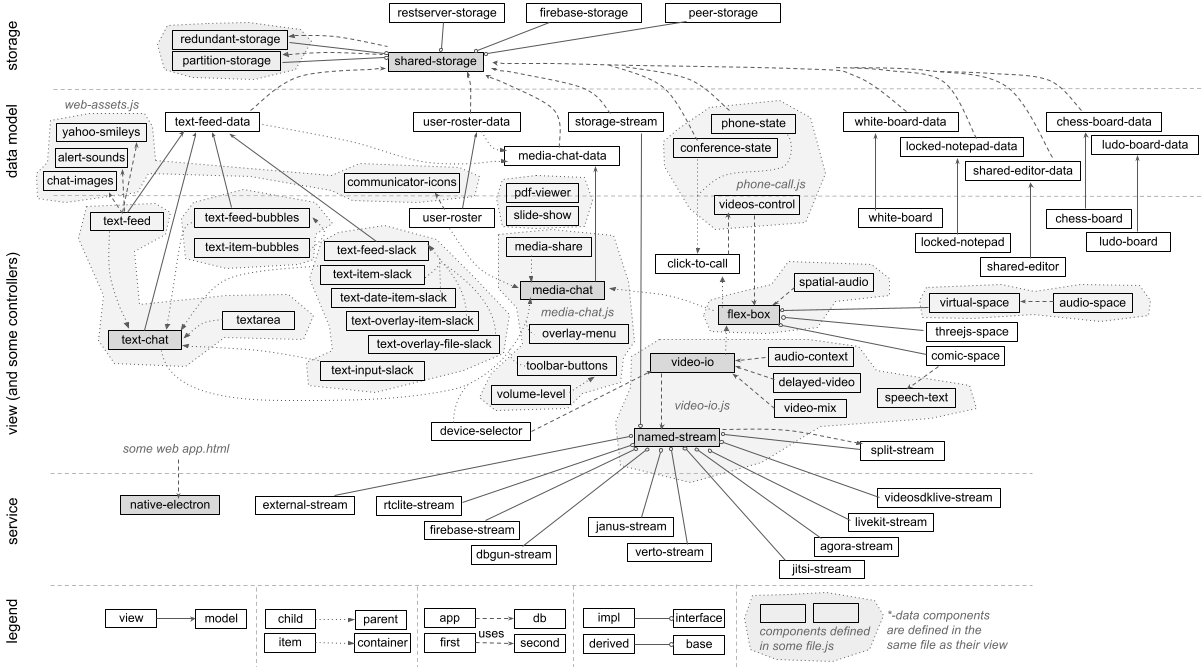

This project aims to unleash WebRTC creativity using web components. It has a collection of more than seventy web components for building a wide range of real-time communication and collaboration web applications.

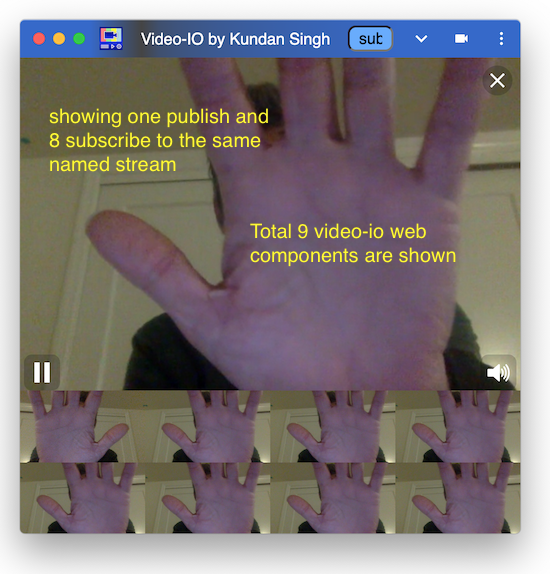

I started this project with one generic web component, <video-io>, for publish-subscribe in WebRTC apps. It has a simple video box abstraction that can be published or subscribed to a named stream. It was inspired by my earlier flash-videoio project, and implemented a subset of the ideas presented in my blog post. The motivation, software architecture, implementation, and various connectors of <video-io> are presented in the research paper, A Generic Web Component for WebRTC Pub-Sub. In summary, it promotes reuse of media features across different applications, reduces vendor lock-in of communication features, and provides a flexible, extensible, secure, feature-rich, and end-point driven web component to support a large number of communication scenarios.

As I worked through many sample and demo web apps using this <video-io> component, I kept creating more abstractions and web components, e.g., for layout of multiple video boxes, text chat, speech transcription, call signaling, and so on. The collection will keep growing and improving as I work on more application scenarios. But the foundational ideas, or the theme, of this project remain the same.

I will create a separate research paper for the motivation and software architecture.

Here, I present an extensive hands-on tutorial of the various web components, or RTC Bricks.

Please follow the basic topics below for a tutorial on how to use the video-io and named-stream web components. The tutorial is intended to be sequential. Even if you need information on a specific use case, I recommend that you go through all the topics under the basic category in sequence the first time. The other advanced topics do not need to be followed in sequence.

These include advanced usages such as video image manipulation and multi-party conferencing, and other components such as flex-box for conference display layout and shared-storage for generic end-point driven software implementations of communication applications. Web components for several common scenarios are also described, e.g., text-chat, locked-notepad or click-to-call. Navigable, interactive content is shown below.

Video-io is a web component demonstrating several generic WebRTC related use cases, e.g., live camera view, recording of multimedia messages, live video call or conferencing, in client-server as well as peer-to-peer topology. It combines the various media and connection abstractions available in WebRTC, and exposes a single video box abstraction. The video box can be attached to a named stream, and configured in either a publish or subscribe mode.

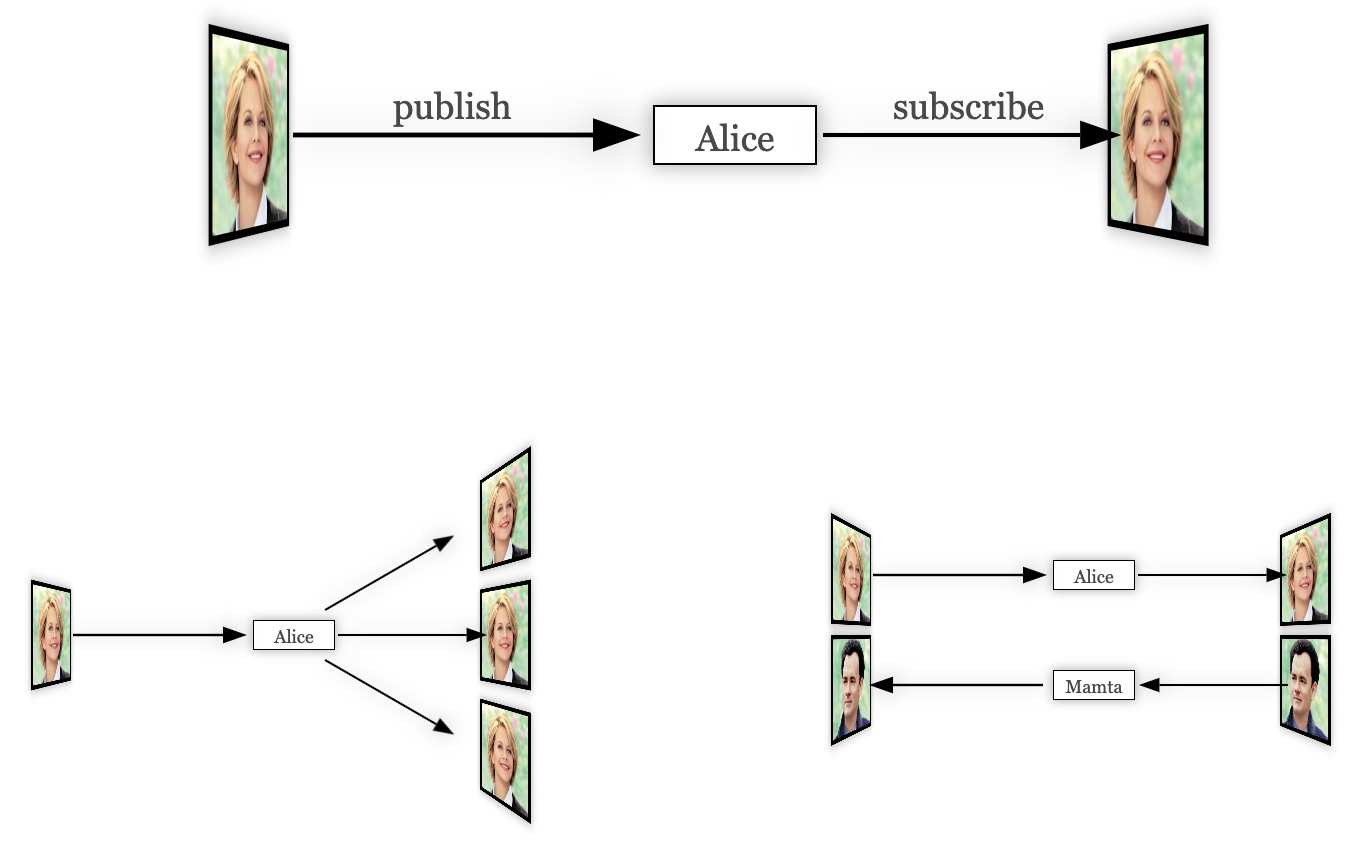

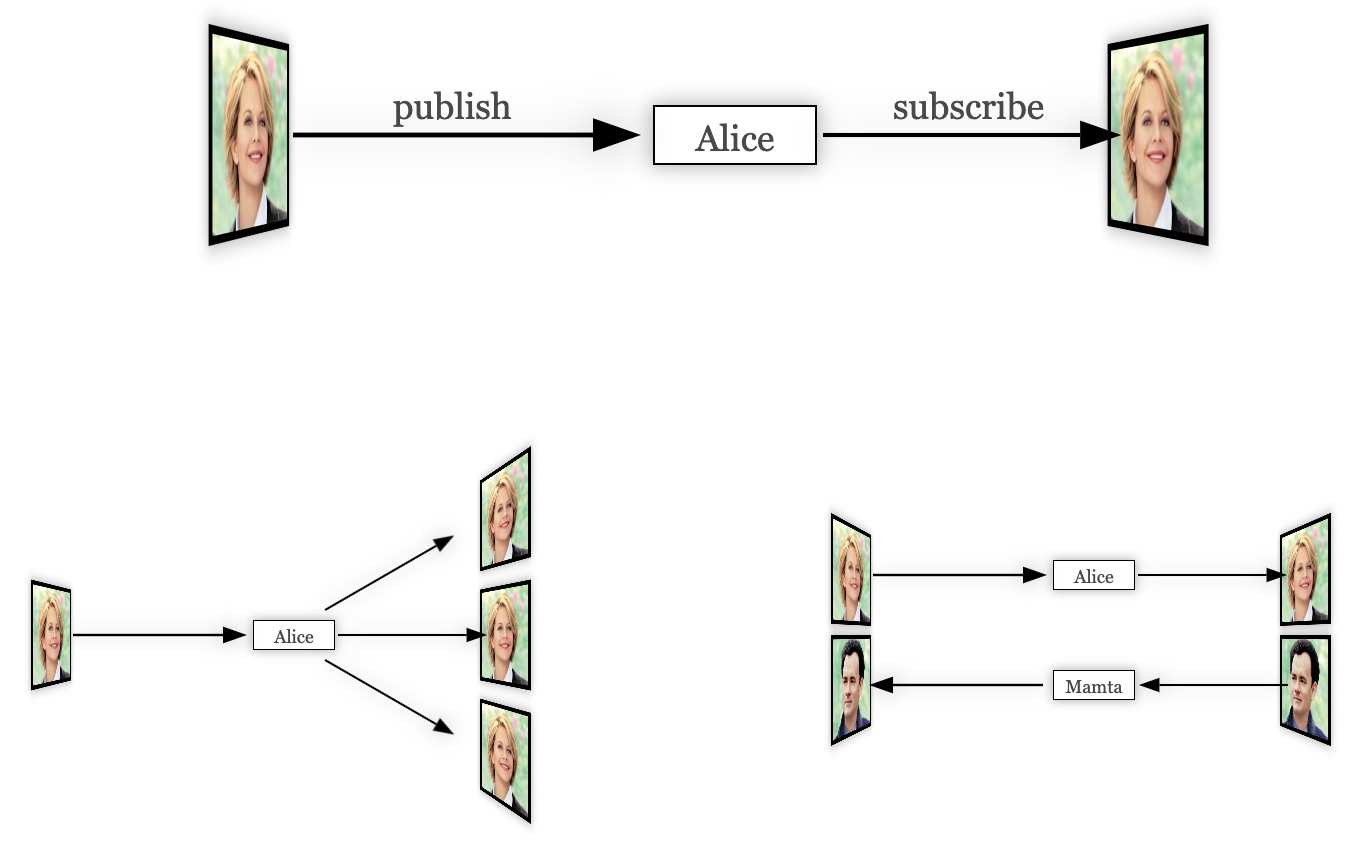

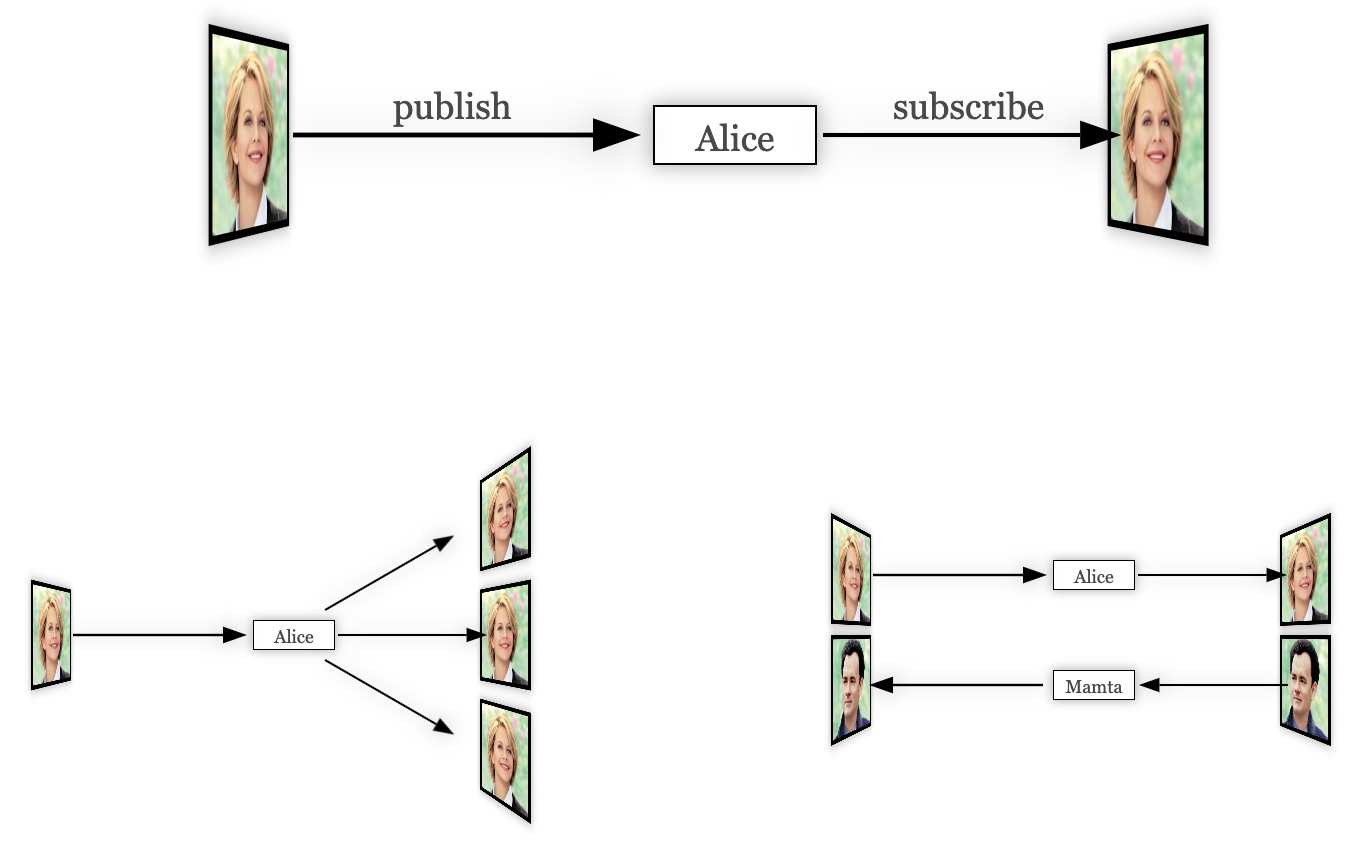

The basic idea is very simple. It is based on the well-proven named stream abstraction previously used by Flash Player and ActionScript application developers for building real-time multimedia applications.

Consider the above example with two video boxes, both attached to the same named stream, "Alice", one to publish and the other to subscribe. When two more video boxes subscribe to the same named stream, "Alice", you get a one-to-many video broadcast. When two video boxes come in the reverse direction, attached to a different named stream, "Bob", you get a two-party video call. This simple abstraction facilitates a wide range of use cases, as you will see later.

To include the web component, do the following in the head section of your HTML document.

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/video-io.js"></script>

Although web components are now ubiquitous, to ensure compatibility across browsers you may want to include the lightweight polyfill for web components before including any of the components described in this document, as shown below.

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/webcomponents-lite.js"></script>

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/video-io.js"></script>

Alternatively, a better option is to check out the code from the project repository, and host it on your own web server. Our components are vanilla JavaScript files without dependency on external frameworks, so they should be easy to include in any of your web applications, with or without your favorite framework.

In the body section of the HTML document, include a video-io element, e.g.,

<video-io></video-io>

The component instance can now be used in the script.

You can also specify additional attributes such as id or controls in HTML, e.g.,

<video-io id="video" controls></video-io>

See How to use the video-io API? for a complete list of attributes and properties.

All the web components and sample apps defined in this project interpret a consistent configuration for console logging. The included "log-level.js" script defines a global log_level function which can be used by any existing or new code to get a custom console object that adheres to the same consistent configuration. To use this, just include the script in the main HTML as follows.

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/log-level.js"></script>

Then, in the web component definition or in any other script code, override the global console object with the one obtained from this customized logging as follows.

const console = (window || self).log_level();

It allows passing the log-level URL parameter to the main HTML page to configure the console logging, e.g., "index.html?log-level=4". The default in most cases is to enable level 3 (info) and lower. The allowed values for the URL parameter are the numbers 0 to 5, which correspond to disable (0), error (1), warn (2), info (3), debug or log (4), and trace (5), respectively. Any non-numeric value is interpreted as enabling all log levels. This tutorial's main web page also accepts the log-level URL parameter, and passes it to any included demo or test pages.
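The mapping from the URL parameter to an effective level can be sketched as follows. This is only an illustration of the rules described above, not the code in log-level.js. The names parseLogLevel, isEnabled and LEVEL_NAMES are hypothetical, and clamping values above 5 is an assumption.

```javascript
// Level numbers as described in the text: 0 disables logging, 5 enables everything.
const LEVEL_NAMES = ["disable", "error", "warn", "info", "debug", "trace"];

// Hypothetical helper (not part of log-level.js): derive the effective level
// from a query string such as "?log-level=4".
function parseLogLevel(search, defaultLevel = 3) {
  const value = new URLSearchParams(search).get("log-level");
  if (value === null) return defaultLevel;   // parameter absent: use the default
  if (!/^\d+$/.test(value)) return 5;        // non-numeric: enable all levels
  return Math.min(parseInt(value, 10), 5);   // clamp to the highest level (assumption)
}

// True if a message at the named level would be printed at the given level.
function isEnabled(level, name) {
  const index = LEVEL_NAMES.indexOf(name);
  return index > 0 && index <= level;
}
```

With the default level of 3, isEnabled(3, "warn") is true and isEnabled(3, "debug") is false, which matches "level 3 (info) and lower".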

Since the parent web app that uses our web component may or may not include this script, all our web components include the following code, or a variation of it, to check if log_level is defined, and to fall back to the global console if not.

const console = (window || self).log_level?.(document.currentScript?.src.match(/\blog-level=(\d+)\b/)?.[1]) || (window || self).console;

This allows other web apps to define their own customization for console logging if needed.

Furthermore, the above code allows enabling the log level individually in a specific script, e.g., the following shows logging enabled only for the flex-box component but not for the video-io component.

<script type="text/javascript" src="video-io.js"></script>

<script type="text/javascript" src="flex-box.js?log-level=5"></script>

For the purpose of this tutorial, just supplying the log-level URL parameter should be enough to control the console logging in various demo and test apps.
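To see how the per-script setting is picked up, the pattern from the fallback line above can be tried in isolation. The function name levelFromScriptSrc is hypothetical; only the regular expression is taken from the text. How log_level treats a missing value is not shown here.

```javascript
// Same pattern as in the fallback line above, applied to a script URL.
// Returns the captured digits as a string, or undefined if there is no match.
function levelFromScriptSrc(src) {
  return src?.match(/\blog-level=(\d+)\b/)?.[1];
}

console.log(levelFromScriptSrc("flex-box.js?log-level=5")); // "5"
console.log(levelFromScriptSrc("video-io.js"));             // undefined
```

Because the pattern only accepts digits, a non-numeric value in the script URL yields no match in this sketch.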

One of the first things that you can do is to capture from webcam and display the video.

This is done by using the publish attribute as follows.

<video-io controls publish="true"></video-io>

You will notice such buttons throughout this tutorial. These are intended to show you live demonstration of the relevant code and usage of the component. Click on it to try the specific example usage. Use shift+click on desktop or long click on mobile to open it in a new window. Make sure to stop the loaded demo after you are done, to unload that external web app. Click on the show code link on the right to see the code for that demo.

When you try the above example, you will notice a few things. The default size of the component is 320x240 pixels. The default camera capture ratio is 4:3. The controls attribute causes it to display the controls on mouse hover. A publisher mode component by default has three buttons: one to pause or play the video, and two for camera and microphone.

Stopping the publishing is done by resetting the publish property as follows.

<script type="text/javascript">

video.publish = false; // stop publish

video.publish = true; // start again

</script>

By default, publish is done for both audio and video. To disable one, you can use the microphone or camera attributes.

<video-io controls microphone="false" publish="true"></video-io>

Or in JavaScript, using properties, as follows.

video.microphone = false;

video.publish = true;

The above example uses an external button to control the publish state. You will notice in the above example that the microphone icon is muted.

Use the CSS styles to change the display size. Use the camdimension attribute and property to change the camera capture size.

<video-io style="width: 960px; height: 540px;"></video-io>

<script type="text/javascript">

video.camdimension = "1280x720";

video.publish = true;

</script>

Although the code snippet above shows camdimension set as a property, it can also be specified as an attribute. Many properties of the component instance are also available as attributes that can be set in markup. See How to use the video-io API? for a complete list of attributes and properties.

The local camera preview is mirrored by default. To stop mirroring the preview video, set the mirrored attribute to false.

<video-io mirrored="false" publish="true"></video-io>

Alternatively, you can use the --preview-transform CSS style attribute.

<video-io style="--preview-transform: none;" publish="true"></video-io>

Use the screen attribute or property to publish using screen capture instead of camera. Note that, unlike the camera preview, the screen capture preview is not mirrored.

<video-io screen="true" microphone="false" publish="true" style="background: black;"></video-io>

Generally, for screen share, you should disable the microphone, so that sound is not captured too, and you should style the background color, so that the difference in aspect ratio is prominently shown as black.

Additionally, you can alter the other constraints such as camdimension or framerate that apply to both camera and screen capture. It is recommended to use a lower framerate for screen capture if the screen size is too big, e.g.,

<video-io screen="true" ... desiredframerate="3" publish="true"></video-io>

A lower screen share framerate is useful in keeping the bitrate in check, without losing the picture quality. Unlike camera capture, a screen capture treats camdimension as a maximum constraint, and the actual dimension may be smaller, e.g., if the value is 640x480 but the screen size is 1280x720, then the screen capture is scaled down to 640x360 due to the maximum width and height constraints.
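The arithmetic behind that example can be sketched as follows. The helper names fitWithin and parseDimension are hypothetical, and the actual scaling is done by the browser when it applies the capture constraints; this only reproduces the numbers.

```javascript
// Hypothetical helper: scale a source size down to fit within a maximum
// width and height, preserving the aspect ratio. It never scales up.
function fitWithin(source, max) {
  const scale = Math.min(1, max.width / source.width, max.height / source.height);
  return {
    width: Math.round(source.width * scale),
    height: Math.round(source.height * scale),
  };
}

// Parse a "WIDTHxHEIGHT" string such as the camdimension value.
function parseDimension(text) {
  const [width, height] = text.split("x").map(Number);
  return { width, height };
}

const screen = { width: 1280, height: 720 };
console.log(fitWithin(screen, parseDimension("640x480"))); // { width: 640, height: 360 }
```

The width ratio (0.5) is smaller than the height ratio (about 0.67), so the width constraint decides the scale.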

When the video is playing, the playing property is changed to reflect that. The pause control element of the component may be used by the user to directly change the playing state of the video. For a publisher component, this playing state does not affect the state of the published media stream. This may cause unintended behavior, when the publisher side user pauses her video, but the subscribers continue to see and hear the live audio and video stream from the publisher.

The autoenable property and attribute can change this behavior. If set for a publisher component, it ties the displayed video with the published stream, i.e., when the video pauses, then the published media stream is disabled too.

This section describes some ways to change the appearance and general behavior of the component, independent of the publisher or subscriber mode.

The default background color of the component is transparent. This can be changed using the background or background-color CSS style attribute.

Use the poster attribute or property to display an image before the video is loaded or played.

<video-io mirrored="false" poster="some-image.jpg" ...></video-io>

When using the poster attribute, it is recommended to reset the mirrored attribute, to prevent the poster image from getting flipped horizontally.

If the aspect ratio of the video in the media stream is different from the display size aspect ratio, then the object-fit CSS style attribute determines how the video is displayed. The default is contain. This can be changed to cover or fill.

<style>video-io { width: 180px; height: 240px; }</style>

...

<video-io id="video1" style="background: green;"></video-io>

<video-io id="video2" style="object-fit: cover;"></video-io>

<video-io id="video3" style="object-fit: fill;"></video-io>

The component has three slots for header, footer and controls in the display. The header and footer are displayed as persistent overlay on top of the video element, but behind the controls if any. The controls are displayed in the middle of the built-in controls when visible. An example usage of these slots is to display the name of the person in a video call, or the status of the connection.

<style>video-io > span { color: white; }</style>

...

<video-io id="video">

<span slot="header">Queen Elsa</span>

<span slot="footer" style="margin-left: 40%; line-height: 30px;"></span>

<span slot="controls" onclick="alert('clicked');"></span>

</video-io>

<script type="text/javascript">

...

video.addEventListener("propertychange", e => {

if (e.property == "playing")

video.querySelector('span[slot="footer"]').innerText = "Playing: " + e.newValue;

});

</script>

The controls, header or footer sizes are fixed in the component implementation, e.g., the control buttons are 36x36 pixels, irrespective of how large the component is displayed. An application may need to adjust the controls, header or footer sizes to match the display size of the component, e.g., if displaying in 160x120 then use smaller size buttons, and if displaying in 640x480 then use larger size buttons. This can be done using the CSS transform and zoom attributes.

There is also a zoom property in the component which can be used as follows. The default is treated as no zoom, or a value of 1.0. The controls are made smaller using a zoom of less than 1 and larger using a zoom of more than 1. For example, a zoom value of 0.5 will make the control buttons half the size, and 1.25 will make them 25% larger.

<video-io zoom="0.5" controls></video-io>

<video-io zoom="1.25" controls></video-io>

The following example shows the components displayed in small sizes, one without zoom and other with zoom. The header, footer and controls are enabled for all the instances for comparison.

One way to calculate the right value for zoom is as follows. Suppose the buttons appear the right size for component size of 320x240. To scale the buttons proportionally, you can derive the zoom factor using the ratio of the actual display size and this right size.

const desired = {width: 320, height: 240}; // right size for buttons to appear good.

const actual = {width: 640, height: 360}; // actual size for display of component.

video.style.width = actual.width + "px"; video.style.height = actual.height + "px"; // CSS lengths need a unit

video.zoom = Math.min(actual.width/desired.width, actual.height/desired.height);

Be careful when using a small zoom value, especially on mobile or low accessibility devices, that the buttons may become too small to click.

The magnifier attribute or property can be used to show a magnifying glass on mouse hover for the displayed video.

<video-io ... magnifier="40px,2x"></video-io>

The example above creates a magnifying glass with diameter about 40px, and performs 2x magnification. Alternatively, the values can be specified as percent, e.g., "50%,300%" will cause the diameter to be about 50% of the component height, and magnification of 3x. Note that magnification of less than 1x is not allowed.

<video-io ... magnifier="50%,300%"></video-io>
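As a rough illustration of how the two value forms relate, here is a hypothetical parser for the magnifier string. It is not the component's code. The name parseMagnifier is made up, and throwing an error for a magnification below 1x is an assumption, since the text only says that such values are not allowed.

```javascript
// Hypothetical parser for values such as "40px,2x" or "50%,300%".
// componentHeight is the displayed height of the component in pixels.
function parseMagnifier(value, componentHeight) {
  const [size, factor] = value.split(",").map(s => s.trim());
  const diameter = size.endsWith("%")
    ? componentHeight * parseFloat(size) / 100   // percent of component height
    : parseFloat(size);                          // "40px" -> 40
  const magnification = factor.endsWith("%")
    ? parseFloat(factor) / 100                   // "300%" -> 3
    : parseFloat(factor);                        // "2x" -> 2
  if (!(magnification >= 1)) {
    // Assumption: reject instead of silently adjusting the value.
    throw new RangeError("magnification below 1x is not allowed");
  }
  return { diameter, magnification };
}

console.log(parseMagnifier("50%,300%", 240)); // { diameter: 120, magnification: 3 }
```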

Try the following example to experiment with the magnifier glass.

This and the next feature are useful for quickly checking some small text during screen share, when the component size is small.

The zoomable property controls whether the user can drag-select a portion of the video and zoom.

<video-io ... zoomable="true"></video-io>

Try the following example to experiment with select to zoom. Once the publish starts, click, drag and select a rectangle on the video, to zoom in. Then click again to restore.

If already zoomed, then a mouse down on the component resets the zoom. If already zoomed, and the component is resized, then the zoom value is preserved and the object-fit style is honored. To try that out, use the following example, which opens in a new tab; try resizing the window, with or without zoom on the video.

A video-io component instance can be in a publish or subscribe mode. Previously, we showed examples of the publish mode. The subscribe mode is relatively easy, as there is no capture device involved. For real-time media flow, a publisher instance is connected to a subscriber instance. These instances may be running on separate browsers or machines. For discussion in this section, we assume that both are running on the same web page.

There are two ways to connect the publisher and subscriber instances: point-to-point and named streams. The point-to-point connection is similar to the WebRTC peer connection abstraction, albeit unidirectional only. The named streams abstraction will be discussed in the next sub-section.

In the point-to-point connection, one instance of publisher connects to one instance of subscriber. The application manages data exchange between these instances. In particular, the instance emits the data event, which must be applied to the other instance. An example follows.

<video-io id="video1" controls autoenable="true"></video-io>

<video-io id="video2" controls></video-io>

<script type="text/javascript">

const video1 = document.querySelector("#video1"),

video2 = document.querySelector("#video2");

video1.addEventListener("data", e => video2.apply(e.data));

video2.addEventListener("data", e => video1.apply(e.data));

video1.publish = video2.subscribe = true;

</script>

The above example creates two instances of video-io. The first one publishes microphone and camera to the second one. The second one is in subscribe mode. The data event contains signaling data for offer/answer and ICE candidates as specified in the WebRTC APIs. If you are familiar with the WebRTC APIs, you can follow the rough sequence of events by logging the data value.

Although both the instances are shown as part of the same web page here, a real application will use some WebRTC notification service to exchange the data between the two distributed instances.
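The exchange through a notification service can be sketched with an in-memory stand-in. Everything here is hypothetical: createChannelPair and attach are made-up names, the channel is a local substitute for a real service, and the sketch assumes that the data value survives a JSON round trip.

```javascript
// Hypothetical in-memory stand-in for a notification service: two endpoints,
// where text sent on one is delivered to the other's onmessage handler.
function createChannelPair() {
  const a = { onmessage: null, send: text => b.onmessage?.(text) };
  const b = { onmessage: null, send: text => a.onmessage?.(text) };
  return [a, b];
}

// Forward the component's data events to the endpoint, and apply whatever
// arrives from the other side. Assumes e.data can be serialized as JSON.
function attach(video, endpoint) {
  video.addEventListener("data", e => endpoint.send(JSON.stringify(e.data)));
  endpoint.onmessage = text => video.apply(JSON.parse(text));
}

// Usage in a page with the two components from the example above:
//   const [end1, end2] = createChannelPair();
//   attach(video1, end1);
//   attach(video2, end2);
//   video1.publish = video2.subscribe = true;
```

In a distributed application, each page would hold only one endpoint, backed by whatever transport the notification service provides.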

As before, you can stop the publish mode by resetting the publish property. Similarly, resetting the subscribe property stops the play. In the point-to-point mode, once the subscribe has stopped, it cannot be started again, and a new component instance must be used for any new media stream subscription.

The point-to-point abstraction should be avoided unless the named stream mode is not easily applicable. Point-to-point is typically useful when integrating with an existing communication service for which a named stream implementation is not available or is hard to do. The named stream abstraction provides several benefits, as discussed next. It also decouples the client-side app and the notification service, so that the service can be easily replaced by another in the future, while keeping the client application logic intact.

In this approach, at most one publisher and zero or more subscribers can be attached to a named stream. All the subscribers receive the media stream from the publisher if present. Using named streams for connecting the publisher and subscriber is preferred to point-to-point, for several reasons.

NetStream model of Flash Player

The named-stream component provides the bare minimum needed for implementing the above approach, within a single web application. In a real application, this is extended to other components that use a WebRTC notification service or a media server to allow distributed publishers and subscribers.
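The attachment rule for a named stream can be modeled in a few lines. This is a toy model, not the named-stream component: the class name StreamModel and its methods are hypothetical, and rejecting a second publisher is an assumption about how "at most one publisher" is enforced.

```javascript
// Toy model of a named stream: tracks which boxes are attached to it.
class StreamModel {
  constructor() {
    this.publisher = null;
    this.subscribers = new Set();
  }
  publish(box) {
    // Assumption: a second publisher is rejected while one is attached.
    if (this.publisher && this.publisher !== box) {
      throw new Error("stream already has a publisher");
    }
    this.publisher = box;
  }
  unpublish(box) {
    if (this.publisher === box) this.publisher = null;
  }
  subscribe(box) { this.subscribers.add(box); }
  unsubscribe(box) { this.subscribers.delete(box); }
  // Boxes that currently receive media: all subscribers, if a publisher is present.
  receivers() {
    return this.publisher ? [...this.subscribers] : [];
  }
}
```

Subscribers may attach before or after the publisher in this model; they simply receive nothing until a publisher is present.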

The following example illustrates the basic concept using the named-stream component. It uses three video-io components, one as publisher and two as subscribers, all attached to the same named-stream instance.

<named-stream id="stream"></named-stream>

<video-io id="video1" controls autoenable="true" microphone="false"></video-io>

<video-io id="video2" controls></video-io>

<video-io id="video3" controls></video-io>

<script type="text/javascript">

const [video1, video2, video3, stream] = ["video1", "video2", "video3", "stream"]

.map(id => document.getElementById(id));

video1.srcObject = video2.srcObject = video3.srcObject = stream;

video1.publish = video2.subscribe = video3.subscribe = true;

</script>

To try the example above, click on the publish and subscribe buttons in different order and see the effect. Then try to stop and restart them in different orders.

As shown above, the srcObject property is used to bind a video-io instance to a named stream, and the publish or subscribe property is used to configure it as a publisher or subscriber. Note that if the srcObject is set then the application should not use the data event, because the named stream instance processes that internally.

If using the srcObject property, make sure to set the publish or subscribe property after setting the srcObject, and not to use them as attributes.

Alternatively, you can use the for attribute in the markup to configure the named stream as shown below. The attribute value is the ID of the DOM element of the named stream.

<named-stream id="stream"></named-stream>

<video-io id="video1" controls autoenable="true" for="stream" publish="true"></video-io>

<video-io id="video2" for="stream" subscribe="true"></video-io>

<video-io id="video3" for="stream" subscribe="true"></video-io>

If using the for attribute, make sure that the named stream DOM element that for refers to is included before the video-io element, so that when the video-io component instance is attached to the DOM, it can find the corresponding named-stream in the DOM too.

The example above uses one instance of the named-stream component. This works only for this type of named stream component, but not for the others described below. For the other named stream components that connect to a server in some way, a separate instance of the component is needed for each video-io component instance.

When the publisher pauses the video, the subscriber sees a black video feed. This default behavior can be changed using the autopause property, set on the publisher. If true, then it sends an event to the subscriber before the playing property is changed on the publisher. The subscriber in turn can pause and play the video, to avoid showing the black video feed during publisher side pause.

<named-stream id="stream"></named-stream>

<video-io id="video1" controls autoenable="true" autopause="true" for="stream" publish="true"></video-io>

<video-io id="video2" for="stream" subscribe="true"></video-io>

Setting autopause shows consistent video behavior on publisher and subscriber side, instead of publisher paused and subscriber with black video feed.

rtclite-stream for Notification

As mentioned before, a real application will likely use a WebRTC notification service to exchange the signaling data among the different distributed instances of the video-io components running in different user browsers.

My earlier open source project rtclite has a lightweight notification server based on named streams. The concept was first explained in a blog post on WebRTC notification system, and later extended to named streams, with the server code in the streams.py file and an example client in streams.html.

First, follow the instructions there to install and run the notification server, and try out the example client code. I run the notification service for local testing as follows, using Python 2.7, on TCP port 8080.

python -m rtclite.app.web.rtc.streams -l tcp:0.0.0.0:8080 -d

I have also ported that notification server to NodeJS, and included it in this project for convenience. I run this notification server for local testing as follows, after installing the dependencies.

cd srv

npm install

node streams.js -p 8080 -d

The default port is already 8080, so the -p option above is redundant. The default log level is info; the -d option creates a verbose log, whereas -q is for the quieter mode with only error logging.

Next, use the rtclite-stream component instead of the default named-stream, and try it out below. Note that the named streams in separate web pages refer to the same stream at the notification server, if the same src attribute is used.

<!-- in <head> -->

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/rtclite-stream.js"></script>

...

<!-- in <body> -->

<rtclite-stream id="stream" src="ws://localhost:8080/stream/1234"></rtclite-stream>

<video-io id="video" controls autoenable="true" for="stream"></video-io>

<script type="text/javascript">

const video = document.querySelector("#video");

...

video.publish = true;

... // or

video.subscribe = true;

</script>

Try the following to launch one publisher and one or more subscribers for a named stream. You can pick any unique stream name. This example uses a standalone video.html web app that configures one video-io and one specific named stream instance using the various URL parameters.

Note that this rtclite-stream component provides a one-to-one mapping to the stream publish or subscribe operation, and hence, each such stream instance may be attached to only one video-io instance.

Please note that the notification server described above does not have any access control. It may be extended to add some form of authentication and access control for your need on top of the basic connection.

The following table describes all the attributes and properties of the component. Additional methods are in the named-stream base class.

I have created another component, firebase-stream, that uses the Firebase project to implement the notification system. Unlike the previous rtclite-stream component, which requires a separate stream object for attaching to each video-io instance, a single firebase-stream object may be attached to multiple video-io instances in your web app. Behind the scenes, the component provides a proxy to reach and manipulate data on the Cloud Firestore database. So it does not matter whether you use a single or separate firebase-stream objects for all the video-io instances on the web page.

First, sign up for a Firebase account, create a sample app, and add the Cloud Firestore feature to the app.

Second, include the relevant scripts in your web application as shown below. Next, use the firebase-stream component instead of the default named-stream. Then, configure the component by providing your app's details such as apiKey, projectId and appId. Other fields are not needed. After that, this component instance may be used with video-io as shown in other examples previously.

<!-- in <head> -->

<script type="text/javascript" src="https://www.gstatic.com/firebasejs/12.9.0/firebase-app-compat.js"></script>

<script type="text/javascript" src="https://www.gstatic.com/firebasejs/12.9.0/firebase-firestore-compat.js"></script>

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/firebase-stream.js"></script>

...

<!-- in <body> -->

<firebase-stream id="stream" src=""></firebase-stream>

<script type="text/javascript">

document.querySelector("#stream").src = "id:123456?config=" + encodeURIComponent(JSON.stringify({

apiKey: ..., projectId: ..., appId: ...

}));

</script>

I use the legacy API with chaining, as I find it easier to understand and program with. You are free to update the firebase-stream.js component file to use the modular API.
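The src value used above has the form "id:NAME?config=..." with the configuration passed as URL-encoded JSON. A small helper pair can make that explicit. The names buildStreamSrc and parseStreamSrc are hypothetical, and the parser is only for inspecting such a value; how the component itself parses it is not shown in this tutorial.

```javascript
// Hypothetical helper: build the src value in the "id:NAME?config=..." form.
function buildStreamSrc(id, config) {
  return "id:" + id + "?config=" + encodeURIComponent(JSON.stringify(config));
}

// Inverse, for inspection only: recover the id and config from such a value.
function parseStreamSrc(src) {
  const [head, query = ""] = src.split("?");
  const config = new URLSearchParams(query).get("config");
  return {
    id: head.replace(/^id:/, ""),
    config: config ? JSON.parse(config) : null,
  };
}

// Usage with placeholder values; substitute your own app details:
//   document.querySelector("#stream").src =
//     buildStreamSrc("123456", { apiKey: "...", projectId: "...", appId: "..." });
```

Encoding the JSON with encodeURIComponent keeps characters such as quotes, "&" and "?" from being mistaken for URL syntax.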

To make sure that the following sample uses the right configuration, first set the apiKey, projectId and appId in your localStorage, using the JavaScript console. Keep the console open to see any error or warning when you try the example later.

localStorage["firebase-stream"] = JSON.stringify({

apiKey: "...", projectId: "...", appId: "..."

});

Try the following example which opens one publisher and two subscribers, and allows you to start or stop the publisher and subscriber.

Try the following to launch one publisher and one or more subscribers for a named stream. You can pick any unique stream name. It uses the same video.html web app mentioned earlier, but configured to use the firebase-stream instance this time.

Please note that the notification server described above may require privacy protection of the keys. Please read the Firebase and Firestore documentation to secure your application by adding appropriate authentication, and access control on your database. Also, take measures to secure the app credentials.

The following table describes all the attributes and properties of the component. Additional methods are in the named-stream base class.

Graph Universe Node database is an open source project for fast and distributed data storage and synchronization. To use this, first follow the simple instructions of that project to install the node locally. Next, use our dbgun-stream web component as the named stream implementation as shown below. It takes the stream name, and a config with a list of peers and other options.

<!-- in <head> -->

<script type="text/javascript" src="https://cdn.jsdelivr.net/npm/gun/gun.js"></script>

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/dbgun-stream.js"></script>

...

<!-- in <body> -->

<dbgun-stream id="stream"></dbgun-stream>

<script type="text/javascript">

document.querySelector("dbgun-stream").src = "id:1234"

+ "?config=" + encodeURIComponent(JSON.stringify({

peers: [ 'http://localhost:8765/gun', ... ], // may include others

localStorage: false, radisk: false,

}));

</script>

Try the following example which opens one publisher and two subscribers, connected to the locally running database as mentioned above.

The following table describes all the attributes and properties of the component. Additional methods are in the named-stream base class.

Use of other databases to implement the notification service is discussed in How to create a stream using shared data? Use of external media servers such as Janus or FreeSwitch is discussed later in How to work with a media server?

Use the record attribute or property to enable recording of the published or subscribed stream.

Note that the recording is done on the client side by default. Use the recordeddata property to access the recorded blob. This can then be converted to a URL and assigned to the src of a media element, or downloaded locally. An example follows.

<video-io record="true" publish="true"></video-io>

<video autoplay></video>

<script type="text/javascript">

const video = document.querySelector("video-io");

video.record = false; // to stop recording

const blob = video.recordeddata;

if (blob) {

const url = URL.createObjectURL(blob);

... // play this url in <video> element or download it

const player = document.querySelector("video");

player.src = url;

}

</script>

There are a few other properties that can alter the behavior. The recordmode property controls whether the recorded data is overwritten (default) or appended ("append") for subsequent recordings in the same component instance. In the example above, this should be set before the first recording, to ensure that it takes effect on subsequent recordings. The recordtype property controls the content type of the recorded data. Supported content types depend on the browser.
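Since the supported content types vary by browser, an application may want to probe a few candidates before setting recordtype. The sketch below is an assumption-laden illustration: it assumes that recordtype accepts a MIME type string, the name pickRecordType and the candidate list are hypothetical, and the browser check shown in the comment uses the standard MediaRecorder.isTypeSupported function.

```javascript
// Return the first candidate accepted by the supplied check, or undefined.
function pickRecordType(candidates, isSupported) {
  return candidates.find(type => isSupported(type));
}

// Example candidate list, in order of preference (illustrative only).
const candidates = ["video/webm;codecs=vp9,opus", "video/webm", "video/mp4"];

// Usage in a browser, before starting the recording:
//   const type = pickRecordType(candidates, t => MediaRecorder.isTypeSupported(t));
//   if (type) video.recordtype = type;
```

Passing the check as a parameter keeps the helper usable outside the browser, e.g., in tests.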

Playing the recorded data, converted to a URL, can be done in the standard video element

as shown in the example above.

The recordmax property controls the maximum duration of recording in seconds.

If a negative value is supplied, then the last that many seconds are accessible in recordeddata.

In this mode, the recordeddata value may be a promise, and must be resolved to access

the actual data.

video.recordmax = -10; // last 10s of recordings

...

const blob = video.recordeddata; // it may be a blob or a promise

Promise.resolve(blob).then(blob => {

if (blob) {

const url = URL.createObjectURL(blob);

... // play this url in <video> element or download it

const player = document.querySelector("video");

player.src = url;

}

});

</script>

Try the following example to record the subscribed stream, instead of the published, and to allow playing the last 30 seconds at any time.

You can use an audio element instead of video to play only the sound of the last N seconds. The recordmax is set to 30 seconds in the above example, but if there is not enough recorded data, the played recording will be shorter. You can also use currentTime on the media element to play, for example, only the last 10s, even if the recording covers the last 30s.
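As a minimal sketch of the currentTime idea, the helper below computes where playback should start so that only the tail of a recording is played. The names lastSecondsStart and playLast are hypothetical. It also assumes that a browser may report a non-finite duration for recorded data, in which case it falls back to playing from the start.

```javascript
// Returns the position, in seconds, from which to start playback so that
// only the last `seconds` of a recording with the given duration are played.
function lastSecondsStart(duration, seconds) {
  if (!Number.isFinite(duration) || duration <= 0) return 0; // duration unknown
  return Math.max(0, duration - seconds);
}

// Hypothetical usage with a standard media element and a recorded blob.
function playLast(player, blob, seconds) {
  player.addEventListener("loadedmetadata", () => {
    player.currentTime = lastSecondsStart(player.duration, seconds);
    player.play();
  }, { once: true });
  player.src = URL.createObjectURL(blob);
}
```

For example, playLast(document.querySelector("video"), blob, 10) would play roughly the last 10s of a 30s recording, or the whole recording if it is shorter than that.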

Some applications need delayed playback of audio and video instead of real-time playback, for example, real-time captioning, live events with moderation, or the ability to look at your back or side in a video mirror. Such delayed video can be played using the recording feature shown earlier.

A naive approach can set up a periodic timer, say every 10s, to access the recordeddata and play it in a video element. The code snippet is shown below. This uses continuous recording with the default recordmode, where the recording is restarted after the recordeddata property is accessed.

<video-io record="true" publish="true"></video-io>

<video autoplay></video>

<script type="text/javascript">

const video = document.querySelector("video-io");

const player = document.querySelector("video");

setInterval(() => {

player.src = URL.createObjectURL(video.recordeddata);

}, 10000);

</script>

This works, but with a problem: every time the video element's src is updated with the last recorded segment of 10s, there is a flicker. To solve the issue, a new delayed-video component is used. Internally, it employs two video elements and two MediaRecorder instances running in parallel. It switches the display to the video element that has started playing most recently, thus avoiding any flicker. The following example shows how to use it.

<video-io id="video" record="true" publish="true"></video-io>

<delayed-video for="video" delay="10"></delayed-video>

Instead of specifying the for attribute

with value as the identifier of the video-io instance, you can set the input

property as the video-io instance, or the srcObject property as a

MediaStream instance. The following are equivalent, assuming that the stream exists.

player.setAttribute("for", "video");

player.input = document.getElementById("video");

player.srcObject = document.getElementById("video").videoStream;

Try the following example. You can change the delay value before starting.

The delayed-video component has attributes for delay, controls, and for. It has properties for input and srcObject in addition to delay and controls. Exactly one of for, input or srcObject must be specified, in addition to a valid delay, for the recording and delayed playback to start. These attributes and properties are summarized below.

Previously, we have shown several examples of attributes and properties. Here we formally define those and enumerate and describe all the available values.

An attribute is accessible and settable in the HTML markup of the component. A property is readable and/or writable in the JavaScript code. Generally every attribute in our component is available as a property too, but not the reverse.

Some properties are also reflected as an attribute, which means that the current value of the property is updated in the component attribute. Unless marked as such, this is not the default. Thus, if a property is updated via attribute, but accessed via property, it will get the last update from attribute. But, if the property is updated via JavaScript property setter, and accessed via attribute, its value will not be correct. If the property is marked as "reflected as attribute" in the table below, then its value will be correct. Note that only a non-default property value is reflected as attribute, because a missing attribute indicates the default property value.

The following example shows the camera attribute and property.

<video-io ... camera="true" ...></video-io>

<script type="text/javascript">

let video = document.querySelector("video-io");

video.camera = false; // access the property

</script>

A property can have one of four types: bool (for boolean), string, number or object. The corresponding attribute is always a string in the HTML markup, but can represent the correct value as a string, e.g., camera="true". Some boolean attributes may be treated differently: their presence may indicate a value of true, and their absence a value of false, e.g., the controls attribute.

For a number-type property, typically the JavaScript NaN indicates that the property

is not yet set, whereas an empty string in the attribute value indicates the same. For a string-type

property, typically an empty string indicates an unset value both in property and attribute.

Generally, a number-type property defaults to JavaScript NaN and a string-type

property defaults to an empty string. For several number-type properties, setting the value to NaN

does not do anything. For example, even though the default volume on launch of the component is 1,

once it is set to say 0.5, setting it next to NaN keeps it at 0.5 instead of reverting to original

default of 1. Similarly for other number properties such as the desiredframerate property.

Some properties are read-only, some are write-only, but most of them are read-write properties.

A write-only property is useful for invoking a function, e.g., getstats is set

to true, to have the component populate the quality metrics such as the

lost, bitrate and framerate properties. This avoids the need to

periodically capture the quality metrics, and only updates them when the trigger property,

getstats, is set.

Setting an attribute in the markup initializes the property, if it is not read-only. A read-only property cannot be set and has no effect even if the corresponding attribute is set.

While setting a property via its attribute is trivial for the primitive types of bool, number or string, it is not so for an object type. We assume a JSON-formatted string value for the attribute when setting an object-type property via the attribute.
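The conventions above for the four property types can be summarized in a small conversion function. This is only an illustrative sketch, not the component's actual code; the name attributeToProperty is hypothetical, a null value stands for a missing attribute, and presence-style boolean attributes such as controls are not covered.

```javascript
// Converts an attribute string to a property value, following the
// conventions described above for bool, number, string and object types.
function attributeToProperty(type, value) {
  switch (type) {
    case "bool":
      return value === "true";
    case "number":
      // An empty or missing attribute means "not yet set", i.e., NaN.
      return value === null || value === "" ? NaN : Number(value);
    case "string":
      return value === null ? "" : value;
    case "object":
      // Object-type properties are assumed to be JSON-formatted strings.
      return value === null || value === "" ? null : JSON.parse(value);
    default:
      throw new TypeError("unknown property type: " + type);
  }
}
```

For example, attributeToProperty("number", "0.5") gives 0.5, whereas an empty string gives NaN, matching the unset convention for number-type properties.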

You can try out the various properties and attributes below, and test the publish-subscribe behavior

of the component. It includes two video-io components, and allows you

to control various properties, and view their changes. Although not shown in the screenshot

below, it allows read/write of object properties as well. Note that some properties must be

set before publish or subscribe is set for them to take any effect.

Try enabling the publish checkbox on first and the subscribe on second to get started.

Some of the properties below are not yet implemented, and are marked so. Some of the properties are related to some other properties, and are marked so.

The table above is the complete reference for the properties and attributes of the video-io component.

Previously, we used the "data" event from the component instance. In addition, there are some other events dispatched by the component as shown below. The "propertychange" event is the most important.

Previously, we used the "data" event from the component instance to deliver to another component instance

via the apply function, when creating a point-to-point publisher-subscriber connection.

Furthermore, we used a few implementations of named streams to connect with the component instance.

Here, we go through the video-io component and named stream APIs that are useful for such interactions, including the

functions in the components.

Sample implementations of three named streams are available from us. If you want to implement another named stream component, e.g., to use a different signaling or notification channel for your WebRTC application, you can use the following interface to do so. A named stream component must implement the following functions. The video-io component instance invokes these functions on the attached named stream component instance.

Please see the named-stream.js or rtclite-stream.js file for examples of how to

implement a named stream component.

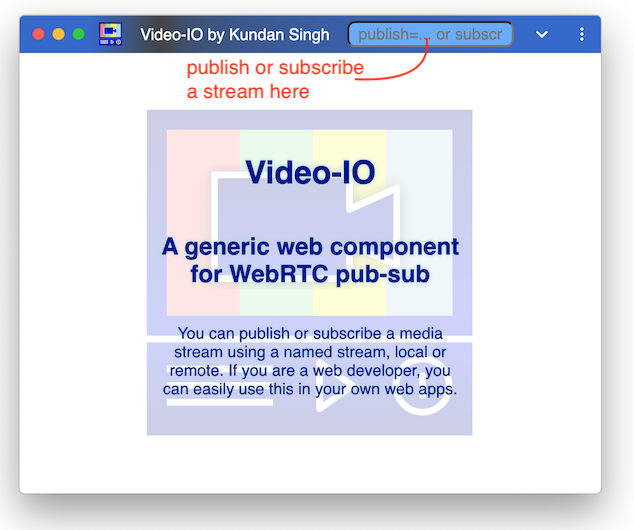

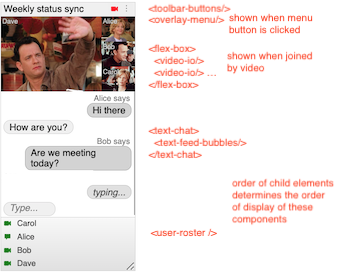

We have also created a standalone web app named video-io that can show zero or more video-io web components wrapped in a flex-box component. Although the video-io web component shows a single video in publish or subscribe mode, many video conferencing related use cases require showing multiple video elements at the same time. The flex-box web component, which is described later in How to customize multi-video layout?, is used to lay out multiple video-io or other elements.

This video-io standalone web app can be installed as a progressive web app (PWA), on desktop or mobile. To use the installed app, first launch the web app in the browser, then use the browser's prompt or the install button near the address bar to install it locally. Try the following to launch the web app with a local camera preview, and a local subscribed view of the same, and then click the install button near the address bar.

https://rtcbricks.kundansingh.com/v1/video-io.html?publish=...&subscribe=...

Note that the PWA manifest file for this app expects

the web page of the app to be at /v1/video-io.html. So if your testing

is not on this path, it may not allow or show the install button. Alternatively,

you can edit the video-io.json file for another path as per your hosting.

The user interface allows publish or subscribe of the named streams, e.g.,

by entering publish=alice or subscribe=alice it uses the local named-stream components,

and it attaches a video-io component to each such stream. For testing with

external named streams, you need to specify the full description, e.g.,

publish=rtclite:ws://localhost:8080/streams/1234

will publish to that named stream using the rtclite-stream component. A list of publish and subscribe

parameters can also be supplied on launch. It can also be launched on

desktop, if not already open, to add a new stream using its URL protocol handlers:

web+ezpub or web+ezsub, for publish or subscribe, respectively.

Note that the protocol handler feature is not supported on mobile.

If a user opens a URL

web+ezpub:rtclite:ws://localhost:8080/streams/1234,

it adds a video-io web component instance, and an attached rtclite-stream web component.

It then connects to the presumed rtclite service at localhost port 8080, over a

websocket transport, and publishes camera/mic media to the named stream, streams/1234.
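The launch URL and the protocol handler URL described above can be constructed and interpreted with a few lines of code. The sketch below uses hypothetical helper names, and assumes that a list of streams is supplied by repeating the publish or subscribe parameter; it is not taken from the app's source.

```javascript
// Builds the launch URL of the standalone app from lists of stream names.
// Assumption: multiple streams are passed as repeated URL parameters.
function buildLaunchUrl(base, { publish = [], subscribe = [] } = {}) {
  const params = new URLSearchParams();
  publish.forEach((name) => params.append("publish", name));
  subscribe.forEach((name) => params.append("subscribe", name));
  return base + "?" + params.toString();
}

// Splits a protocol handler URL such as "web+ezpub:alice" into the action
// and the stream description that follows the scheme.
function parseHandlerUrl(url) {
  const match = /^web\+(ezpub|ezsub):(.+)$/.exec(url);
  if (!match) return null;
  return {
    action: match[1] === "ezpub" ? "publish" : "subscribe",
    stream: match[2],
  };
}
```

Note that URLSearchParams percent-encodes characters such as ":" and "/" in an external stream description; a standard URL parser decodes them back to the same value.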

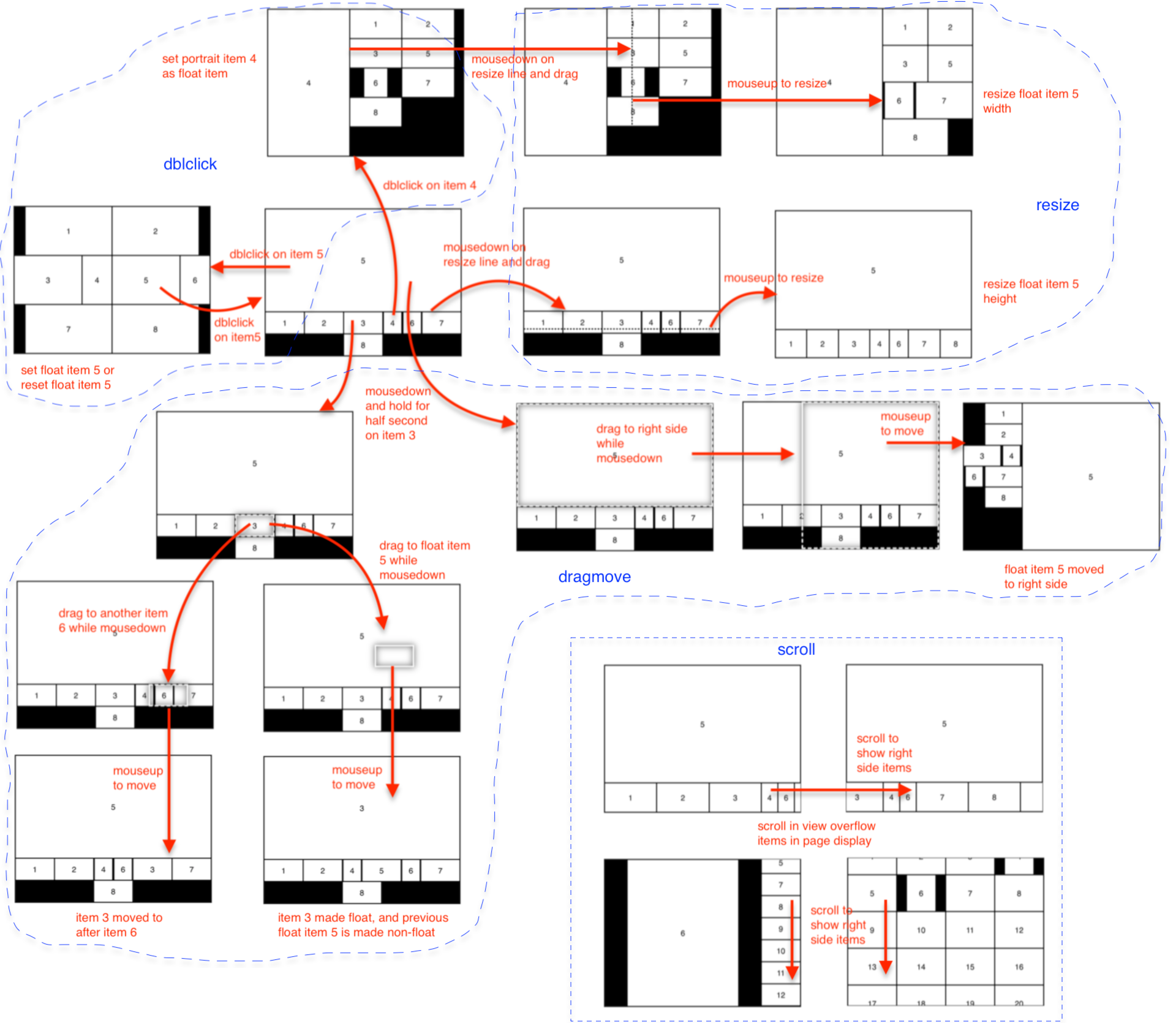

The flex-box component allows dynamically adjusting the layout, e.g., double clicking on a video to put it in presenter mode, or resize videos based on available size and aspect ratio of the window. It allows drag-and-drop of the video elements, e.g., to re-order them in the display, or to pop out a video to a separate window or tab, or to move or copy a publish or subscribe video stream from one app instance to another.

Besides the publish and subscribe URL parameters, the app also accepts a config parameter, and the corresponding web+ezcfg: protocol handler. The config parameter value can be used to set the attributes or styles on all included or individual video-io elements. Consider the following example of the URL parameter:

?publish=...&config=video1.screen%3Dtrue%26video.style.object-fit%3Dcontain

Note that the parameters are parsed and processed sequentially.

It creates a publish video-io first after processing the publish parameter, and labels it as video1.

Then the config parameter is parsed, and yields the two actual values shown below. Note that the full parameter value is escaped.

video1.screen=true

video.style.object-fit=contain

The first one is applied to the individual video-io instance labeled video1,

and causes it to use screen share to publish, instead of the default camera video feed.

The second is applied generically to all video-io instances including the previously

created as well as those that will be created in the future in this instance of the app.

In this case it changes the object-fit style of the video-io component to

contain instead of default cover, so that sides in the video

are not cut-off, and a padding is used instead.

The config parameter can also be used to set the attribute or style on the flex-box container. Consider the following example, which turns the flex-box display to pip or picture-in-picture mode, and uses the second video as the background.

...&config=box.display%3Dpip%26video2.float%3D (same as below)

box.display=pip

video2.float=
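Since the full config value is escaped, it is easier to generate it than to write it by hand. The following sketch reproduces the two escaped values shown above from their unescaped settings. The helper names are hypothetical, and it assumes that keys and values do not themselves contain "&" or "=".

```javascript
// Encodes a list of [key, value] settings into a config parameter value.
function encodeConfig(pairs) {
  const raw = pairs.map(([key, value]) => key + "=" + value).join("&");
  return encodeURIComponent(raw);
}

// Decodes a config parameter value back into [key, value] settings,
// in the same order, since the settings are processed sequentially.
function decodeConfig(value) {
  return decodeURIComponent(value).split("&").map((item) => {
    const index = item.indexOf("=");
    return [item.slice(0, index), item.slice(index + 1)];
  });
}

const videoConfig = encodeConfig([
  ["video1.screen", "true"],
  ["video.style.object-fit", "contain"],
]);
const boxConfig = encodeConfig([["box.display", "pip"], ["video2.float", ""]]);
```

Here videoConfig and boxConfig are the same escaped strings as in the two URL examples above.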

All the properties and styles of the video-io components are detailed in the previous chapter, How to use the video-io API? Additionally, standard CSS styles can be used too.

The goal of this video-io app, separate from the video-io web component, is to create a generic user interface for web video conferencing applications, where the conferencing app logic can reside in external software, which invokes this app to display, capture, publish and subscribe various media streams using the protocol handler. The extensive video-io component API can be used to further customize its behavior using the URL parameters.

We have also built a native Electron app, which uses the same code as the PWA, but wraps it in the Electron packager. The protocol handlers are ezpub, ezsub, and ezcfg without the "web+" prefix. It supports the same set of configurations as the PWA.

The sample app can be built, tested and packaged as follows:

cd 11-electron-app

npm install

npm start ezpub:one ezsub:one

npm run make

The main app logic is in main.js, which parses the command line or launch parameters, and opens the external video-io.html web app. To enable screen sharing or desktop capture from the Electron app, it uses preload.js and renderer.js to replace getDisplayMedia with a custom desktop capturer. There is also an example package.json file for building the app. These are only for trial purposes, and a real-world app will need to edit and customize them.

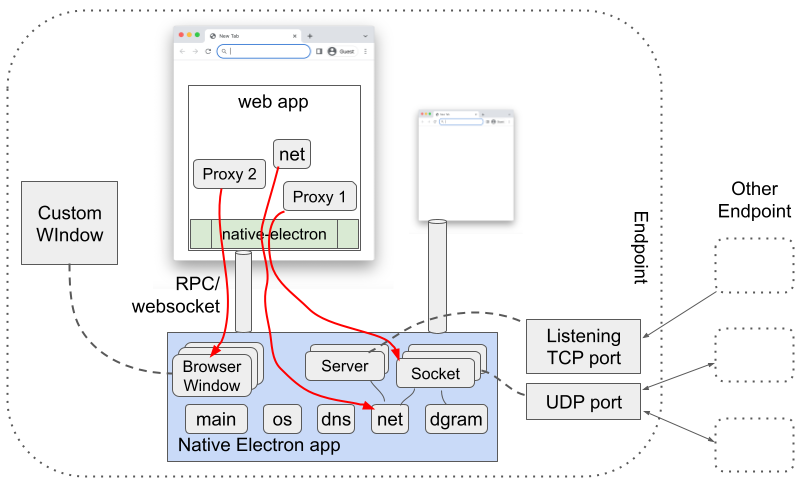

The Electron app and the progressive web app described in the previous chapter behave almost the same. In general, a progressive web app is preferred to an Electron app for similar functionality. However, a native Electron app can provide many additional features that are not available in a web app. In this chapter, we describe a separate native Electron app that enables a wide range of features and constructs that are not available in a web app.

The architecture is shown below, where the native Electron app acts as a plugin to enable and expose several Electron APIs such as for opening a native window, getting system information, DNS resolver, and TCP and UDP sockets. The basic idea is inspired by my earlier project, flash-network (see more here), that used a native AIR (Adobe Integrated Runtime) app to expose certain network APIs to web apps, including for my SIP-in-JavaScript project.

These Electron features are exposed using a web component, native-electron,

from a web app. This allows the web app to use, say, UDP or TCP socket,

for implementing advanced networking applications such as peer-to-peer or

application level multicast, or for implementing custom window behavior,

such as to open a transparent or frameless browser window

for a more immersive video conferencing display, or for drawing on the screen

or showing the mouse pointer of the other participants during a screen share enabled

video collaboration.

To use these features, first build the native app as follows, and run it on the local machine.

cd 12-native-electron-app

npm install

npm start

npm run make

You may enable it to bind to all the interfaces, instead of only the local interface by default, using the -h option shown below. If no port, using -p, is specified, then it uses the default port of 9090. You may also want to edit the package.json or other source files to customize your native app.

cd 12-native-electron-app

npm start -- -h 0.0.0.0 -p 9090

Once the native electron app is running locally, use a native-electron web

component in the web app to connect to the native app as shown below.

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/native-electron.js"></script>

...

<native-electron src="ws://localhost:9090/native-electron"></native-electron>

When the src property is set, it attempts to connect to that

service, using websocket, assuming it as a locally running Native Electron app.

This websocket connection is then used as a channel for various RPC (remote procedure call)

interactions between the client (web app's native-electron component instance)

and the server (locally running Native Electron app).

Although the native app is expected to run on the local machine, it is not a requirement. The web component can connect to a remotely running native app as long as such connections are allowed. The native app itself may restrict incoming connection to be from only a localhost, by binding to the local IP interface (default) instead of all.

The local native app assigns an identifier to each channel, so that it can correlate the various APIs and proxy objects related to that channel. The web app can supply this identifier, using the id parameter, or let it be generated by the client side web component, as follows. The component internally replaces "{id}" in the URL with its own randomly generated identifier.

<native-electron src="ws://localhost:9090/native-electron?id={id}"></native-electron>
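The placeholder replacement can be pictured as follows. This is only a sketch of the idea; the function name expandId and the format of the random identifier are assumptions, and the component may generate its identifier differently.

```javascript
// Replaces the "{id}" placeholder in a src URL with an identifier.
// If no identifier is supplied, a random one is generated; its format
// here is an assumption, not the component's own.
function expandId(src, id = Math.random().toString(36).slice(2, 10)) {
  return src.replace("{id}", encodeURIComponent(id));
}

const fixed = expandId("ws://localhost:9090/native-electron?id={id}", "abc");
const random = expandId("ws://localhost:9090/native-electron?id={id}");
```

A web app that wants a stable identifier across page reloads can supply its own value in the URL instead of the placeholder.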

The native features exposed by this component are grouped into five modules. These are os, dns, net, dgram and main, corresponding to the similarly named modules in NodeJS, except that main is used for the browser window related APIs. The client side component just exposes these as proxy objects, so that the web app can invoke any method on those.

For example, if the web app calls a method, say, dns.lookup("www.google.com"), as shown below, then the web component serializes the method request and all the parameters, and sends it to the native app.

const {dns, os, dgram} = document.querySelector("native-electron");

const platform = await os.platform(); // "darwin", "win32", or "linux"

const result = await dns.lookup("www.google.com"); // e.g., {"address": "142.250.189.154","family":4}

When the native app receives this, it deserializes the request, runs the command using the NodeJS module, and returns the response or error, again after serialization. The web component then returns that response, after deserialization, as the resolved value of the promise returned by that method, dns.lookup.

Some NodeJS APIs such as dgram.createSocket return an object that is only valid in the native app, not in the web app. For such cases, the serialization and deserialization step ensures that a proxy object is exposed in the web app, with a unique identifier, so that further method calls or usage of that proxy object in the web app result in the corresponding action on the real object in the native app. Such implementations are well known and thoroughly researched in RPC literature.

In the following example, the socket variable is actually a proxy object. Further method calls such as send on that object ensure that the native app uses the right underlying socket object. Setting the event handler allows the web app to receive that event.

const socket = await dgram.createSocket({type: "udp4", ...});

socket.onmessage = (msg, rinfo) => { ... };

await socket.send("testing", 8123, "192.168.1.2");
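To make the proxy idea concrete, here is a self-contained sketch of the general technique using a JavaScript Proxy and an in-memory stand-in for the native app. It is not the component's implementation: the wire format ({target, method, args} requests and {$ref} replies), the function names, and the FakeSocket class are all hypothetical, and event delivery is left out.

```javascript
// Client side: every method call on a proxy becomes a serialized request.
function createClient(transport) {
  const call = async (target, method, args) => {
    const reply = await transport(JSON.stringify({ target, method, args }));
    const { result, error } = JSON.parse(reply);
    if (error) throw new Error(error);
    // A {$ref} reply stands for an object that lives only on the native side.
    return result && result.$ref ? proxyFor(result.$ref) : result;
  };
  const proxyFor = (target) =>
    new Proxy({}, {
      // "then" is hidden so that a proxy is not mistaken for a promise.
      get: (_, method) =>
        method === "then" ? undefined : (...args) => call(target, method, args),
    });
  return proxyFor;
}

// Native side stand-in: runs the method and keeps non-plain results by id.
function createFakeNativeApp(modules) {
  const objects = new Map();
  let nextId = 0;
  return async (message) => {
    const { target, method, args } = JSON.parse(message);
    try {
      const receiver = objects.get(target) || modules[target];
      let result = await receiver[method](...args);
      const isPlain = result === null || typeof result !== "object" ||
        Array.isArray(result) || Object.getPrototypeOf(result) === Object.prototype;
      if (!isPlain) {
        const id = "obj-" + (++nextId);
        objects.set(id, result);
        result = { $ref: id };
      }
      return JSON.stringify({ result });
    } catch (e) {
      return JSON.stringify({ error: e.message });
    }
  };
}

// Demo with fake modules instead of the real NodeJS ones.
class FakeSocket {
  constructor() { this.sent = []; }
  send(message, port, host) {
    this.sent.push(message + " -> " + host + ":" + port);
    return this.sent.length;
  }
}
const proxyFor = createClient(createFakeNativeApp({
  os: { platform: () => "linux" },
  dgram: { createSocket: () => new FakeSocket() },
}));
const demo = (async () => {
  const os = proxyFor("os");
  const dgram = proxyFor("dgram");
  const platform = await os.platform();
  const socket = await dgram.createSocket({ type: "udp4" }); // a proxy object
  const count = await socket.send("testing", 8123, "192.168.1.2");
  const error = await os.missing().catch((e) => e.message); // unsupported API
  return { platform, count, error };
})();
```

Because every call crosses the transport, each method returns a promise, even when the underlying native method is synchronous.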

Try the following example to test some APIs, including opening a window, doing DNS lookups, creating TCP and UDP server and client sockets, and sending messages. The test takes several seconds to complete successfully. Use the JS console of the browser to see any errors outside the component.

When the channel that created the proxy object is closed, the proxy object is automatically deleted and cleaned up by the native app. This avoids leaking memory, and automatically closes any windows or sockets when the connected web page closes.

The native-electron component is agnostic to the full set of APIs supported in these modules, and those are actually determined by the connected native app. If an API is not supported, e.g., due to mismatch in platform or version of the NodeJS used in the native app, it responds with an appropriate error.

Because of the nature of such RPC-based APIs, all the methods on the various modules or various proxy objects must be asynchronous, using promise based syntax in the web app, as illustrated above. Note that all the proxy objects in the web component receive all the relevant events from the native app, and depending on which event has a handler installed, only those are triggered or dispatched by the component. This may change in the future to optimize, e.g., to receive only the events with installed handlers from the native app, instead of all the events.

Property access in JavaScript is synchronous, whereas our RPC-based API requires asynchronous interaction to get or set a property. Hence, our proxied objects or modules do not provide any direct property access. However, if the property access is via a method call, such as address() on net.Socket, then those are supported. This may change in the future, to proactively get the property values and keep them updated in the proxied object in the web component.

The following table describes all the properties of the component.

The following table shows all the events dispatched by the component instance.

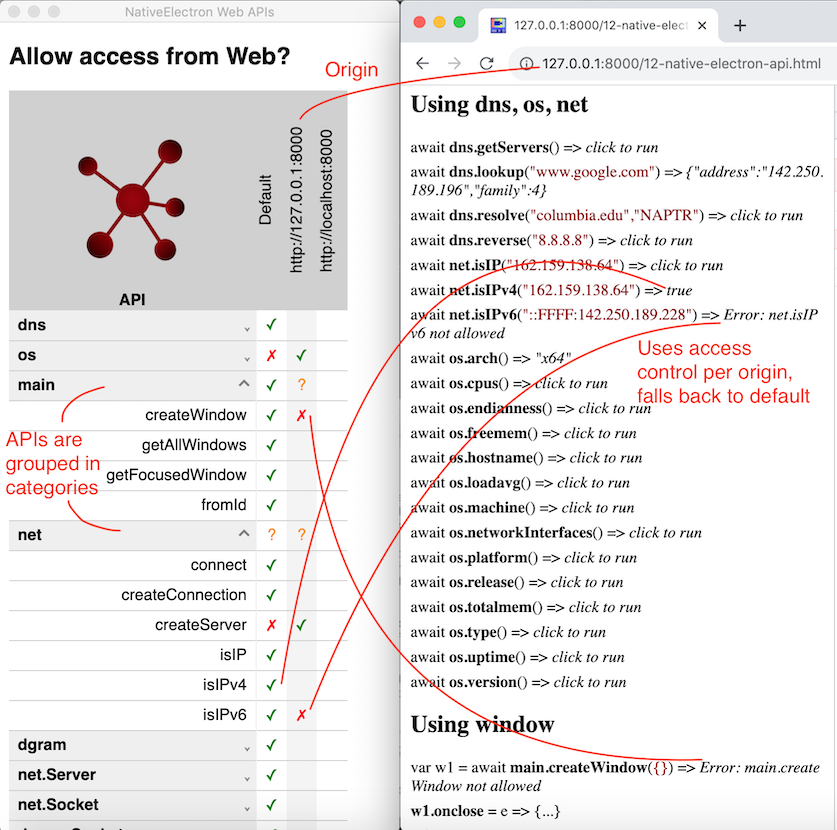

As mentioned earlier, the native app defines what APIs are supported in the various modules, os, dns, dgram, net and main, as well as on various proxied objects such as net.Socket, net.Server, dgram.Socket or BrowserWindow. These APIs are well documented in NodeJS and/or ElectronJS as referenced above.

Our native electron app also has a subset list of supported APIs as described here. In particular, these global methods on the modules are supported. These are documented in the NodeJS and/or ElectronJS references.

| Module | Methods |

|---|---|

| dns | getServers, lookup, resolve, resolve4, resolve6, resolveAny, resolveCaa, resolveCname, resolveMx, resolveNaptr, resolveNs, resolvePtr, resolveSoa, resolveSrv, resolveTlsa, resolveTxt, reverse |

| os | arch, cpus, endianness, freemem, hostname, loadavg, machine, networkInterfaces, platform, release, totalmem, type, uptime, version |

| net | connect, createConnection, createServer, isIP, isIPv4, isIPv6 |

| dgram | createSocket |

| main | createWindow, getAllWindows, getFocusedWindow, fromId |

The following methods on the proxied objects are supported. These objects are either created by the modules methods, or received in an event or method of another proxied object. These are documented in the NodeJS and/or ElectronJS references mentioned above.

| Object | Methods |

|---|---|

| net.Server | address, close, getConnections, listen |

| net.Socket | address, connect, destroy, end, pause, pipe, resetAndDestroy, resume, setEncoding, setKeepAlive, setNoDelay, setTimeout, getTypeOfService, setTypeOfService, write |

| dgram.Socket | addMembership, addSourceSpecificMembership, address, bind, close, connect, disconnect, dropMembership, dropSourceSpecificMembership, getRecvBufferSize, getSendBufferSize, getSendQueueSize, getSendQueueCount, remoteAddress, send, setBroadcast, setMulticastInterface, setMulticastLoopback, setMulticastTTL, setRecvBufferSize, setSendBufferSize, setTTL |

| BrowserWindow | destroy, close, focus, blur, isFocused, show, showInactive, hide, isVisible, isModal, maximize, unmaximize, isMaximized, minimize, restore, isMinimized, setFullScreen, isFullScreen, setSimpleFullScreen, isSimpleFullScreen, isNormal, setAspectRatio, setBackgroundColor, setBounds, getBounds, getBackgroundColor, setContentBounds, getContentBounds, getNormalBounds, setEnabled, isEnabled, setSize, getSize, setContentSize, getContentSize, getMinimumSize, setMinimumSize, getMaximumSize, setMaximumSize, setResizable, isResizable, setMovable, isMovable, setMinimizable, isMinimizable, setMaximizable, isMaximizable, setFullScreenable, isFullScreenable, setClosable, isClosable, setAlwaysOnTop, isAlwaysOnTop, moveAbove, moveTop, center, setPosition, getPosition, setTitle, getTitle, flashFrame, setSkipTaskbar, setKiosk, isKiosk, isTabletMode, getMediaSourceId, loadURL, reload, setOpacity, getOpacity, setIgnoreMouseEvents, setContentProtection, isContentProtected, setFocusable, isFocusable |

Note that some method results are redacted, e.g., the MAC address field is redacted in the result of os.networkInterfaces. Some methods have restrictions, e.g., net.Server's listen and dgram.Socket's bind do not allow a Unix path and must use a port, and they do not allow listening or binding to a port number less than or equal to 1024, for some protection.
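The two kinds of safeguards mentioned above can be sketched as simple checks. The function names and the placeholder used for the redacted MAC address are assumptions for illustration; the native app's actual redaction value and checks may differ.

```javascript
// Returns a copy of an os.networkInterfaces() style result in which the
// MAC address of every entry is replaced by a placeholder (an assumption).
function redactInterfaces(interfaces, placeholder = "00:00:00:00:00:00") {
  const result = {};
  for (const [name, entries] of Object.entries(interfaces)) {
    result[name] = entries.map((entry) => ({ ...entry, mac: placeholder }));
  }
  return result;
}

// Checks a listen or bind target against the stated restrictions: it must
// be a port number, not a Unix path, and must be greater than 1024.
function isAllowedPort(port) {
  return Number.isInteger(port) && port > 1024 && port <= 65535;
}
```

With these checks, a request to bind to port 8123 passes, whereas port 80, port 1024, or a path such as "/tmp/app.sock" is rejected.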

Try the following example to view most of the APIs, and try them in real time.

The test example above allows you to edit the parameters of various methods, and invoke them in any order. It also shows when an event handler is called. It has extensive test setup for TCP and UDP socket, as well as browser window.

A major benefit of using the native electron app is that the web app can implement browser window features that are not available to the web app. The BrowserWindow object in ElectronJS is versatile and flexible to accomplish a number of use cases, such as transparent background, frameless window, semi-transparent overlay, and so on. We demonstrate two such use cases below.

The first example uses the rtclite stream to connect a publisher and subscriber to the same locally running service, but displays each video-io component in its own transparent window, with rounded shape to display the videos in circle. Those windows have no visible controls, and are displayed with always-on-top flag enabled. They can be dragged around on the screen to move, or dragged to resize near the corner.

Make sure that the rtclite streams service and the Native Electron app are running locally. Then try the following example to launch the two video-io instances.

The second example allows drawing on the screen. It uses a transparent full size window and loads the overlay-draw.html web app in that window. This web app is a simple drawing application that uses SVG and user mouse input to draw lines. You can first try the web app as follows. Click on the color picker to pick a pen color. Then click-and-drag the mouse within the web app area to draw lines.

Next, to test drawing with the overlay transparent window, make sure that the Native Electron app is running locally on the default port. Then try the following example. Then click the Start button to start drawing, and double click anywhere to stop. Click anywhere and drag to draw. The drawing disappears 8s after completing the last continuous drawing. Use Shift key when completing a drawing to change the disappearance timer to 60s.

A color picker is shown by default on top-left of the screen when the drawing is enabled. Click the picker to change the pen color if needed. You can also long press and drag the picker to a different position on the screen.

Internally, the above example loads a generic overlay-draw.html web app in a transparent window.

The Native Electron app's menu includes options to launch these windows: a self camera preview in a circle shape, and the drawing overlay described above.

The web APIs exposed by the native-electron component in conjunction with the locally running Native Electron app are very powerful. If misused, they can cause damage to the local system such as by exploiting vulnerability in Electron, NodeJS or their dependencies. Thus, such APIs should be allowed only from trusted web pages or apps.

The Native Electron app implements a simple authentication to ensure that the web apps cannot connect to the native app for the first time without a user interaction and input. This mechanism attempts to keep the end user in control of the authentication and authorization phases. Here, we describe how it works.

When the native-electron web component, used in a web app, attempts to connect to the local app, on WebSocket, the native app checks the HTTP Origin header of the request, and the access code in the connection URL parameter. If the access code is missing, or if the native app already has an access code for this origin but is different from the access code supplied in the URL parameter, then the native app displays the access code prominently on the screen, and responds with an error. The access code disappears after a brief timeout, of say, 5s. The native-electron web component, on an error, prompts the end user to enter the access code. If the user cancels the prompt, the connection is no longer re-attempted. If the user manually enters the access code in the prompt, and continues, it is reattempted. It causes the native-electron web component to retry the WebSocket connection, with the new access code in the URL parameter. If the access code is successfully verified by the native app, it allows the WebSocket connection to proceed. This authentication process is similar to what was used in my earlier flash-network project mentioned before. Subsequent RPC on this connection are further controlled based on API access control described later.
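The client side of this exchange amounts to a retry loop around the connection attempt. The sketch below illustrates that loop with injected functions, so it can run without a browser or the native app. The names connectWithAccessCode, tryConnect, askUser and fakeNative are hypothetical, and the stand-in server simply accepts one fixed code.

```javascript
// Tries to connect; on an error, asks the user for the access code and
// retries with it. Stops and returns null if the user cancels the prompt.
async function connectWithAccessCode(tryConnect, askUser, code = null) {
  for (;;) {
    try {
      return await tryConnect(code);
    } catch (error) {
      code = await askUser(error); // e.g., a prompt shown by the web page
      if (code === null) return null; // user cancelled, do not re-attempt
    }
  }
}

// Stand-in for the native app: accepts only the code it currently displays.
const fakeNative = (expected) => async (code) => {
  if (code !== expected) throw new Error("access code required");
  return { connected: true, code };
};

const accepted = connectWithAccessCode(fakeNative("4321"), async () => "4321");
const cancelled = connectWithAccessCode(fakeNative("4321"), async () => null);
```

In a web page, tryConnect would open the WebSocket with the access code in the URL parameter, and askUser would display the prompt to the end user.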

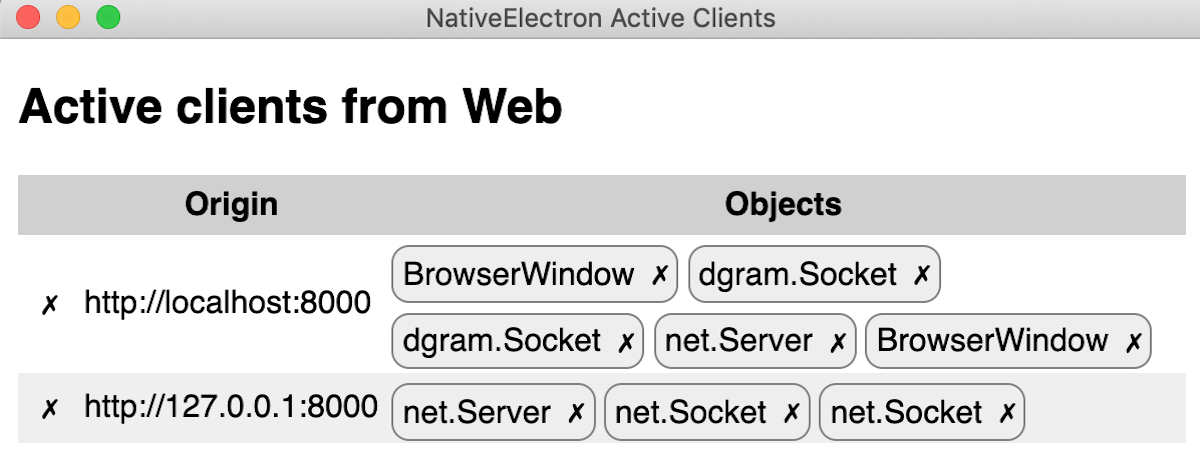

The Native Electron app has three user interface windows for access control, that can be shown directly using the application menu of the locally running native app. The first one is for access code management for various origins, such as to edit or delete them. The second one for web API access control, which allows the end user to disable some APIs from certain origins, or from all. And the third one is for listing and controlling all the active connections from the web app. This interface also shows all the created proxy objects such as TCP or UDP sockets and windows, and allows the end user to close an individual object, or the whole connection from that web app to the native app.

The above screenshot shows the native app's web API access control window on the left, and the test web page opened in the browser on the right. It also shows how the various permissions on the APIs affect the tests, e.g., isIPv6 is blocked for the origin of the web page, but isIPv4 has no override permission so it falls back to the default, which is allowed. The access control window shows the API methods grouped in high level categories, and allows setting the access control for the whole category, or the individual method in that category. Access control can be set for the web page origin, or if not set, then it falls back to the default column as shown above.
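The fallback from the origin-specific setting to the default column can be expressed as a short lookup. This is an illustrative sketch only; the shape of the rules object, the function name isAllowed, the precedence of a method setting over its category setting, and the deny result when nothing is configured are all assumptions.

```javascript
// rules: { [origin]: { [name]: true | false } }, with "default" as the
// fallback column; a name is either a method ("net.isIPv6") or a category.
function isAllowed(rules, origin, category, method) {
  const columns = [rules[origin] || {}, rules.default || {}];
  for (const column of columns) {
    for (const name of [method, category]) {
      if (typeof column[name] === "boolean") return column[name];
    }
  }
  return false; // assumption: deny when nothing is configured
}

const rules = {
  default: { net: true },
  "https://example.invalid": { "net.isIPv6": false },
};
```

With these rules, net.isIPv6 is blocked for the page at https://example.invalid, while net.isIPv4 has no override there and falls back to the default, which allows it.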

The above screenshot shows the native app's active client window, with various active connections from web apps, and their created objects. The cross button in the row closes the connection from that web app, and the cross button next to the object type closes that object. Note that our native-electron web component is designed to reconnect automatically, so closing the connection usually results in an immediate reconnect. To really block the web app, you should also delete or change the access code in the first user interface described earlier.

The device-selector component can be used to display a user interface to allow

selecting media devices. It allows selecting microphone, speaker and camera devices.

When this component is attached to another target video-io component,

it automatically sets the devices on the target. The controls in this

component automatically adjust on resize.

Try the following example to explore device selection. Give it a try from a machine that has multiple devices such as two webcams or two microphones.

The following table describes all the properties of the component.

The following table shows all the methods of the component.

The following table shows all the events dispatched by the component instance.

The user interface of the component can be customized using the following styles.

The channel property of the video-io component can be used to enable the

underlying data channel between publisher and subscribers, similar to the media path, but

bi-directional.

<video-io id="video1" channel="true" publish="true"></video-io>

<video-io id="video2" channel="true" subscribe="true"></video-io>

This then allows you to send some text data using the send method, which is then received

and delivered via the message event on the other end.

video1.send("... some text ...");

video2.addEventListener("message", event => {

// event.data is the text data received.

});

The data channel is established along with the media path, when the component is published or subscribed.

Hence, the channel property must be set before publish or subscribe is initiated. A data-only path may be

achieved by disabling camera and microphone properties of the publish side.

In the full mesh or peer-to-peer case,

the data channel, like the media path, is between the publisher and all the subscribers. Thus, it allows

sending data from the publisher to the subscribers, and from the subscriber to the publisher. It does not

allow sending data among the subscribers, as the peer connection is only between the publisher and

the subscriber, but not between two subscribers.

However, the application can implement higher-level logic to

facilitate data routing at the publisher, by receiving from one subscriber and sending back to all,

such that the original subscriber ignores the reflected data.
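
The routing idea above can be sketched as follows. The function names, the sender identifier, and the JSON envelope are assumptions of this sketch and are not part of video-io. It also assumes that calling send on the publisher delivers the data to every subscriber.

```javascript
// Publisher side: reflect every received message back out, so that all the
// subscribers see it. Assumes send() on the publisher reaches every subscriber.
function attachRelay(publisher) {
  publisher.addEventListener("message", event => publisher.send(event.data));
}

// Subscriber side: wrap the outgoing text in a JSON envelope that carries a
// sender id chosen by the application (hypothetical, e.g., a user name).
function sendFrom(subscriber, senderId, text) {
  subscriber.send(JSON.stringify({ sender: senderId, text }));
}

// Subscriber side: parse an incoming envelope, and return null for the
// reflected copy of our own message so that it can be ignored.
function receiveFor(senderId, raw) {
  const message = JSON.parse(raw);
  return message.sender === senderId ? null : message;
}
```

On a web page, attachRelay would be called with the publishing video-io element, and receiveFor would be called from the message event handler of each subscribing element.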

Try the following example to explore how the bi-directional data path works.

The example does not enable audio/video by default, but uses the video-io component display to

show the shared image files, if any. To enable audio/video, you need to click to enable publish and

subscribe, and the camera/microphone on the publisher.

Similar to the underlying WebRTC data channel, the component supports sending a text string, Blob,

ArrayBuffer or ArrayBufferView. To send the image file, the above example first tries

a Blob, and if that fails, it falls back to an ArrayBuffer,

because Blob support was added only recently in Chrome's data channel.
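
A minimal sketch of such a fallback is shown below. The sendFile name is hypothetical, and the sketch assumes that an unsupported Blob causes send to throw. The target is any object with a send method, such as a video-io element with its channel enabled.

```javascript
// Sketch: try sending the Blob as is, and fall back to an ArrayBuffer with the
// same bytes if send() throws. That a failed Blob send surfaces as a thrown
// exception is an assumption of this sketch.
async function sendFile(target, blob) {
  try {
    target.send(blob);
  } catch (error) {
    // Blob.arrayBuffer() reads the whole Blob into memory.
    target.send(await blob.arrayBuffer());
  }
}
```

Since a File is also a Blob, a file picked by the user can be passed to this function directly.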

When a media server such as Janus is used in the media path, then the data channel is between the publisher and the media server, and between the subscriber and the media server. Thus, unless the media server supports the data channel and implements the message routing logic, you will not have publisher to subscriber or subscriber to publisher data path using this mechanism. This is similar to the media path requirement at the media server.

Later, we will see how this feature can be combined with the speech-text component

to implement captioning and other features. Although the channel feature is useful, it is not

enough to implement several communication use cases such as discovering participants in a call or

registering to receive an incoming call. For that, we need other application logic, such as one based on

shared data, described next.

The ability to access and modify shared data is crucial in a distributed application such as a two-party call, multi-party conference or online panel discussion. Furthermore, the clients can get notified when a piece of shared data is modified, and can update the client display state. For example, the participants list or a user's presence information can be stored as shared data. A user's client can modify such data, and get notified when it is modified, so that the entire application logic can be implemented in the endpoint.

This type of resource-oriented software architecture has been researched and has matured already, e.g., in my previous work on the restserver and vvow projects, as well as with the popular Firebase real-time database.

The SharedStorage class in this project has an implementation of the shared data

abstraction for local testing and demonstrations. The individual subclass implementations further

extend this for real world applications by using specific server side storage, such as in

restserver-storage.js. This section shows how

to use the base SharedStorage class for initial testing, and to replace it with

others if needed.

To get started, include the implementation script, and create a SharedStorage

instance as follows.

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/shared-storage.js"></script>

<script type="text/javascript">

const db = new SharedStorage("local");

</script>

The constructor parameter in the above example causes it to use an internal LocalSharedStorage

implementation, using the browser's localStorage, and is good only for local

demonstrations. The shared-storage implementation can be extended

to support other specific storage implementations using this mechanism as shown below.

To construct the shared storage with a specific implementation such as in restserver-storage.js, use the following.

<script type="text/javascript" src="https://rtcbricks.kundansingh.com/v1/restserver-storage.js"></script>

<script type="text/javascript">

const db = new SharedStorage(new RestserverStorage(...));

</script>

Alternatively, the shared-storage component can be included as follows by supplying

the source as a string in the src attribute.

<shared-storage id="storage" src="..."></shared-storage>

If src is "local", then it uses localStorage internally.

If src is a websocket URL such as "wss://...", then it uses restserver-storage

with the supplied server information.

The id attribute of the shared-storage instance identifies the

shared storage element on the web page, and can be used by other components to link to this

data storage. Such other components are described later in this document including in

the collaboration and telephony topics. Those components accept the storage element identifier

using the for-storage attribute as shown below.

<text-feed-data for-storage="storage" ...></text-feed-data>

There are two types of data structures allowed in such storage: a single object or a list of objects. Such data is identified by a hierarchical path on that storage, e.g., "users/alice/contacts" may represent the contacts list of user Alice. The following example shows how to get a reference to an object or a list, or a nested object or list.

const uref = db.list("users");

const vref = db.object("version");

const sref = db.object("version").object("software");

const cref = db.list("users").object("alice").list("contacts");

As you can see, the data is organized hierarchically. An object's parent may be another object or a list. A list's parent may not be another list. Hierarchical structure allows storing and managing data in a scope for access control and cleanup. Those familiar with Firestore will find that object here is like a document, and list here is like a collection, except that our list is implicitly ordered.

Getting the reference can be done via a nested path directly. The two lines in each of the following blocks of code are equivalent.

const sref = db.object("version").object("software");

const sref = db.object("version/software");

const cref = db.list("users").object("alice").list("contacts");

const cref = db.list("users/alice/contacts");

The following data operations are allowed on an object reference: create, read,

update or delete. Every function in the following example returns a promise.

The difference between create and update is that create will fail if the object

already exists, whereas update will not. If the object does not already exist, then

create and update will have the same effect. Moreover, update can be used to perform

partial update of the object value, e.g., to add, change or delete some attributes.

In particular, using undefined as attribute value deletes an existing

attribute.

await ref.create({name: "Bob", email: "bob@office.com", phone: "+12123334444"});

const data = await ref.read();

await ref.update({name: "Bob Smith", phone: undefined}); // change name, remove phone, unchanged email

await ref.delete();
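
The partial update rule described above can be illustrated with a small standalone function. This is only a sketch of the merge rule on plain objects, with a hypothetical applyUpdate name, and not the storage implementation itself.

```javascript
// Sketch of the partial update rule: attributes in the patch overwrite the
// current value, and an attribute set to undefined is removed.
function applyUpdate(current, patch) {
  const result = { ...current };
  for (const [name, value] of Object.entries(patch)) {
    if (value === undefined) {
      delete result[name];
    } else {
      result[name] = value;
    }
  }
  return result;
}

const before = { name: "Bob", email: "bob@office.com", phone: "+12123334444" };
const after = applyUpdate(before, { name: "Bob Smith", phone: undefined });
// after has the new name, the original email, and no phone attribute.
```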

When creating a new object, if you do not care whether the object existed previously, then use the update function instead of create. You can also use create to implement some form of lock or mutex. For example, a client does a create on an object path. If that succeeds, it continues performing some contentious operation, otherwise it does not. After completing the operation, it deletes the object path.
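
The lock idea can be sketched as follows. The withLock helper and the owner attribute are hypothetical. The sketch assumes that the promise returned by create is rejected when the object already exists, and that ref is an object reference obtained from the storage.

```javascript
// Sketch of the lock pattern using create and delete on an object reference.
// Assumes create() rejects when the object already exists.
async function withLock(ref, owner, task) {
  try {
    await ref.create({ owner });
  } catch (error) {
    return false; // someone else holds the lock, so skip the operation
  }
  try {
    await task(); // the contentious operation
  } finally {
    await ref.delete(); // release the lock even if the task throws
  }
  return true;
}
```

Note that this sketch does not handle a client that disappears while holding the lock, in which case the object path stays in place until something else deletes it.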

The following data operations are allowed on a list reference: add, getall, removeall. Every function in the following example returns a promise.

const id = await ref.add({name: "Alice", email: "alice@home.com"});

const list = await ref.getall();

await ref.removeall();

The getall function allows filtering, ordering and limiting the result.

Note that the list children are ordered in their natural creation order, i.e., the

order in which the children are created either using add on the list reference

or using create or update on the child object reference.

Some examples of filtering, ordering and limiting the result follow.

const sorted = await ref.getall({order: 'name'});

const page2 = await ref.getall({offset: 10, limit: 10}); // zero-based index 10-19

const result = await ref.getall({filter: data => data.name.match(/kundan/i)});

const count = await ref.getall({reduce: (data, sum) => (sum+1), initial: 0}); // returns count
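
As a further illustration, the offset and limit options can be combined to walk through a long list one page at a time. The pages helper below is hypothetical, and it assumes that getall resolves to an array.

```javascript
// Sketch: fetch a list page by page using the offset and limit options.
// Assumes getall() resolves to an array of at most `limit` entries.
async function* pages(ref, limit = 10) {
  for (let offset = 0; ; offset += limit) {
    const page = await ref.getall({ offset, limit });
    if (page.length > 0) {
      yield page;
    }
    if (page.length < limit) {
      return; // a short or empty page means the end of the list
    }
  }
}
```

It may be used with a for await loop, e.g., for await (const page of pages(ref, 10)) { ... }, to process each page as it arrives.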

In addition to the data operations on the object or list reference, you can also perform

event subscription and notification. The onchange handler on the object

reference is called whenever the object value at that path is created, updated or deleted.

The onchange handler on the list reference is called whenever its child object

is created, updated or deleted. Additionally, any application level event data may be

dispatched on any object or list reference using the notify function,

which invokes the onnotify handler of that object or list reference.

To unsubscribe from events, just reset the handler to null.

With remote shared objects, the event handlers are crucial in detecting changes in the

shared data structures of the application. For example, a contact list application

sets the onchange handler on the contacts list, to detect any change in that

data, and to show the updated list. Some examples of onchange are shown below.

oref.onchange = ({type, value}) => { // on object reference

// type is one of "create", "update" or "delete"

// value is the updated object value for create or update, and previous value for delete.

};

lref.onchange = ({type, value, id}) => { // on list reference

// type and value are as before.

// id is the child object's id.

};

Note that if both getall and onchange are used on a list

reference, then there may be duplicates due to a race condition, the timing of

when the item is added to the list, or a nuance of the actual storage implementation.

The application should check for duplicates, e.g., by using a unique item identifier

in the list.
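
One way to check for duplicates is to keep the items in a Map keyed by the unique identifier, as in the sketch below. The createItemStore helper is hypothetical. It accepts the same {type, value, id} shape as the list onchange handler; how the identifier of each entry returned by getall is obtained depends on the storage, and is left as an assumption here.

```javascript
// Sketch: keep list items in a Map keyed by a unique identifier, so that an
// item seen through both getall and onchange is stored only once.
function createItemStore() {
  const items = new Map();
  return {
    // Accepts the same {type, value, id} shape as the list onchange handler.
    apply({ type, value, id }) {
      if (type === "delete") {
        items.delete(id);
      } else {
        items.set(id, value); // create or update; a repeated id overwrites
      }
    },
    // Returns the current values in first-seen order.
    values: () => [...items.values()],
  };
}
```

The application can feed both the initial getall result and the later onchange events through the same apply function, and then redraw the list from values.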

Some examples of onnotify and the corresponding notify function

are shown below. The notify function returns a promise that resolves to the count of

receivers that received the notification. If the count is 0, that means the notification

was not really delivered to anyone, such as when there were no listeners. Note that the notification

is not stored for later delivery. This may require the application to use other

ways to find out if the notification was sent before it installed the listener.

ref.onnotify = ({data, from}) => {

// data is whatever serializable object was supplied in notify.

};