Measuring Forecasting Proficiency: An Item Response Theory Approach

1Fordham University, 2University of British Columbia, 3University of Cambridge, 4Federal Reserve Bank of Chicago, 5Georgia Institute of Technology, 6Forecasting Research Institute

Psychometrics

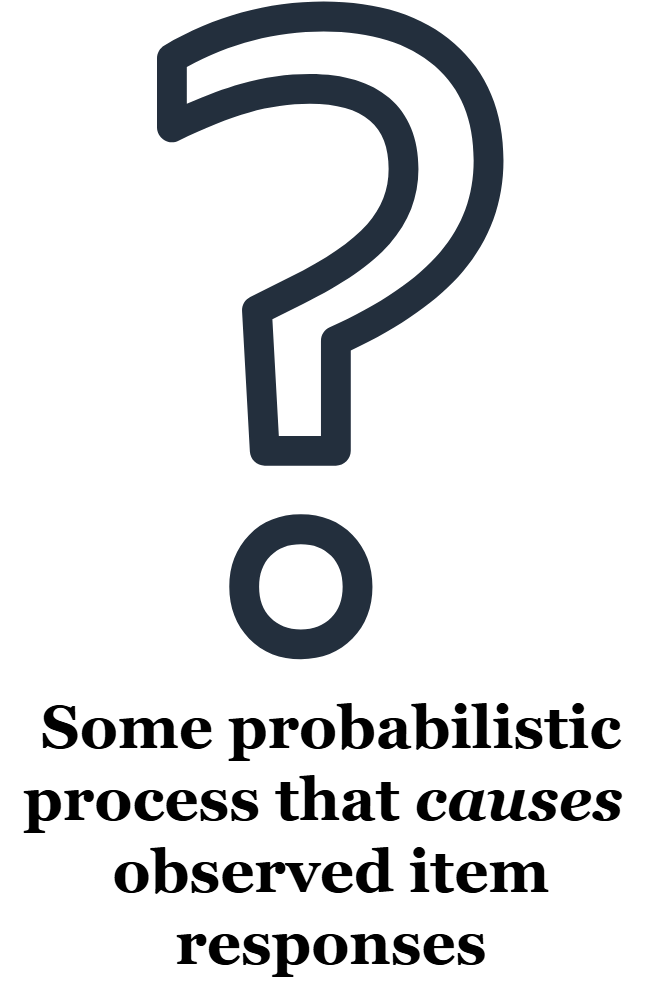

In general, psychometric models are statistical models that predict item responses. All psychometric models include:

- Parameters defining item properties

- Parameters defining person properties

- A random component that allows for some randomness in item responses (random measurement error)

A Classical IRT Model

According to this IRT model, the probability of a correct response to a binary item \(i\) for person \(j\) (\(Y_{ij} = 1\)) is:

\[P(Y_{ij} = 1 \mid a_i,b_i,\theta_j) = \mathrm{logit}^{-1}(a_i(\theta_j - b_i))\]

- \(\theta_j\) is an unobserved person trait that explains why responses from the same individuals are similar to each other (i.e., ability)

- \(a_i\) and \(b_i\) are characteristics that vary across items (e.g., items can be more or less difficult)
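As a minimal sketch of the response function above, the snippet below reads the link as the inverse logit (logistic) function, which is what maps \(a_i(\theta_j - b_i)\) onto a probability. The function name and the parameter values are hypothetical and chosen only for illustration.

```python
import math

def irt_2pl_probability(theta: float, a: float, b: float) -> float:
    """Probability of a correct response: inverse logit of a * (theta - b)."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

# Hypothetical values, for illustration only.
p_matched = irt_2pl_probability(theta=0.0, a=1.5, b=0.0)  # ability equals difficulty
p_able = irt_2pl_probability(theta=1.0, a=1.5, b=0.0)     # ability above difficulty
```

When ability equals difficulty the probability is one half, and it rises with \(\theta_j\) at a rate governed by \(a_i\).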

Why Psychometrics and IRT?

With psychometric models, not only do we explain the observed responses, but we also obtain item characteristics and person characteristics.

Question: Can we do the same for quantile forecast items in the Forecasting Proficiency Test (FPT; Himmelstein et al., 2024)?

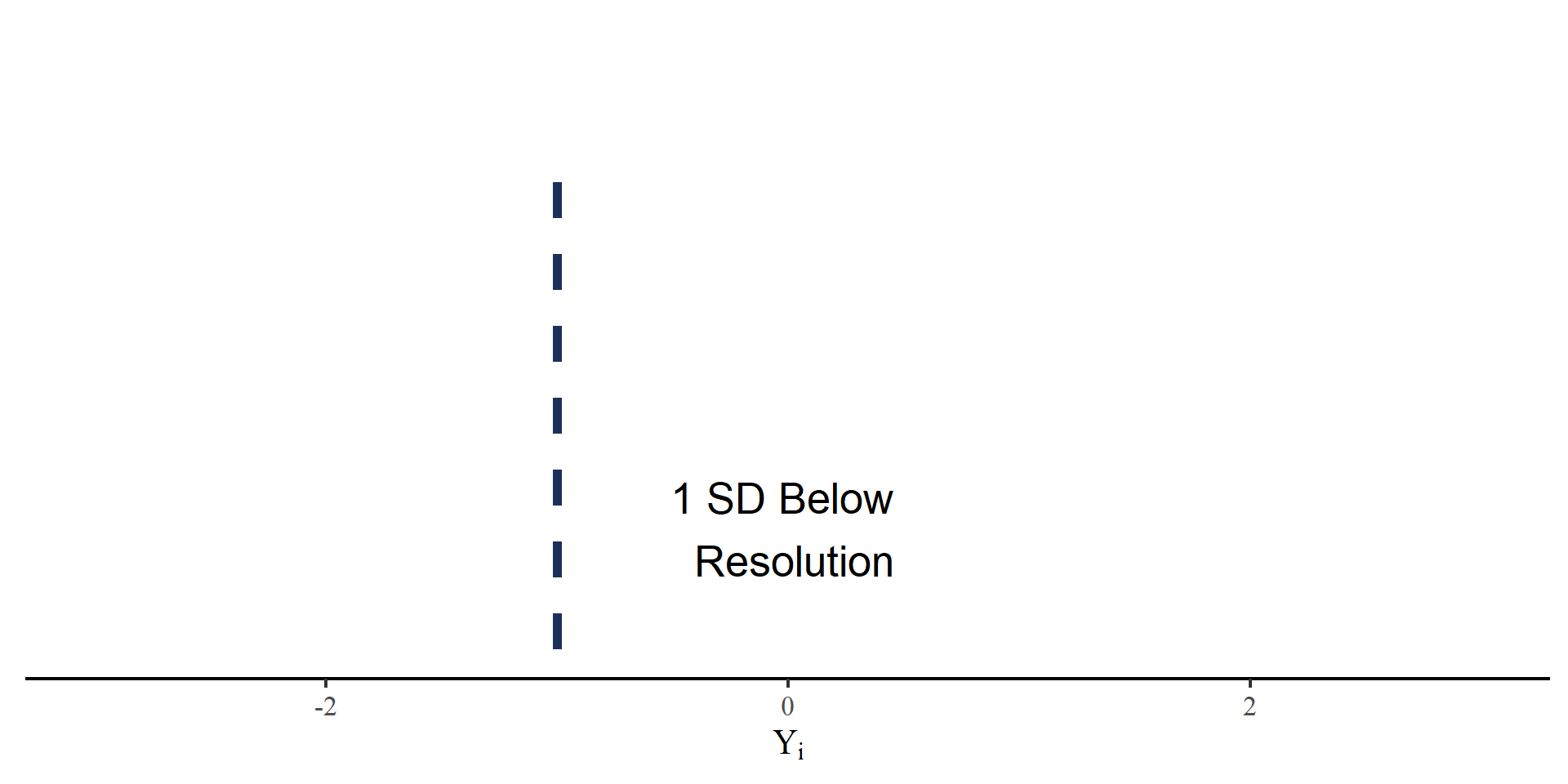

Historical Standardization

Responses to FPT quantile forecast items are on very different scales (e.g., dollars per gallon, thousands of dollars, percentages). We define the outcome measure, historically scaled signed error, as

\[ Y_i = \frac{\hat{Y}_i - Y_{\mathrm{res},i}}{SD_{Y_{\mathrm{hist},i}}} \]

- \(\hat{Y}_i\): Reported forecast for item \(i\) at any quantile.

- \(Y_{\mathrm{res},i}\): The resolution for item \(i\).

- \(SD_{Y_{\mathrm{hist},i}}\): The \(SD\) of the historical time series of item \(i\).

\(Y_i\) is therefore the signed distance of the forecast from the resolution, expressed in historical SD units.
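The standardization can be sketched in a few lines. This is an illustration under stated assumptions: the function name and the historical series are hypothetical, and the use of the sample SD (rather than the population SD) is an assumption, since the source does not say which was used.

```python
import statistics

def scaled_signed_error(forecast: float, resolution: float, history: list[float]) -> float:
    """(forecast - resolution) divided by the SD of the item's historical series."""
    sd_hist = statistics.stdev(history)  # assumption: sample SD
    return (forecast - resolution) / sd_hist

# Hypothetical historical series (e.g., monthly dollars per gallon).
history = [3.10, 3.25, 3.40, 3.35, 3.60, 3.55, 3.45, 3.50]

err_high = scaled_signed_error(forecast=3.80, resolution=3.50, history=history)
err_low = scaled_signed_error(forecast=3.20, resolution=3.50, history=history)
```

A forecast above the resolution yields a positive error and one below yields a negative error, so the sign carries the direction of the miss.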

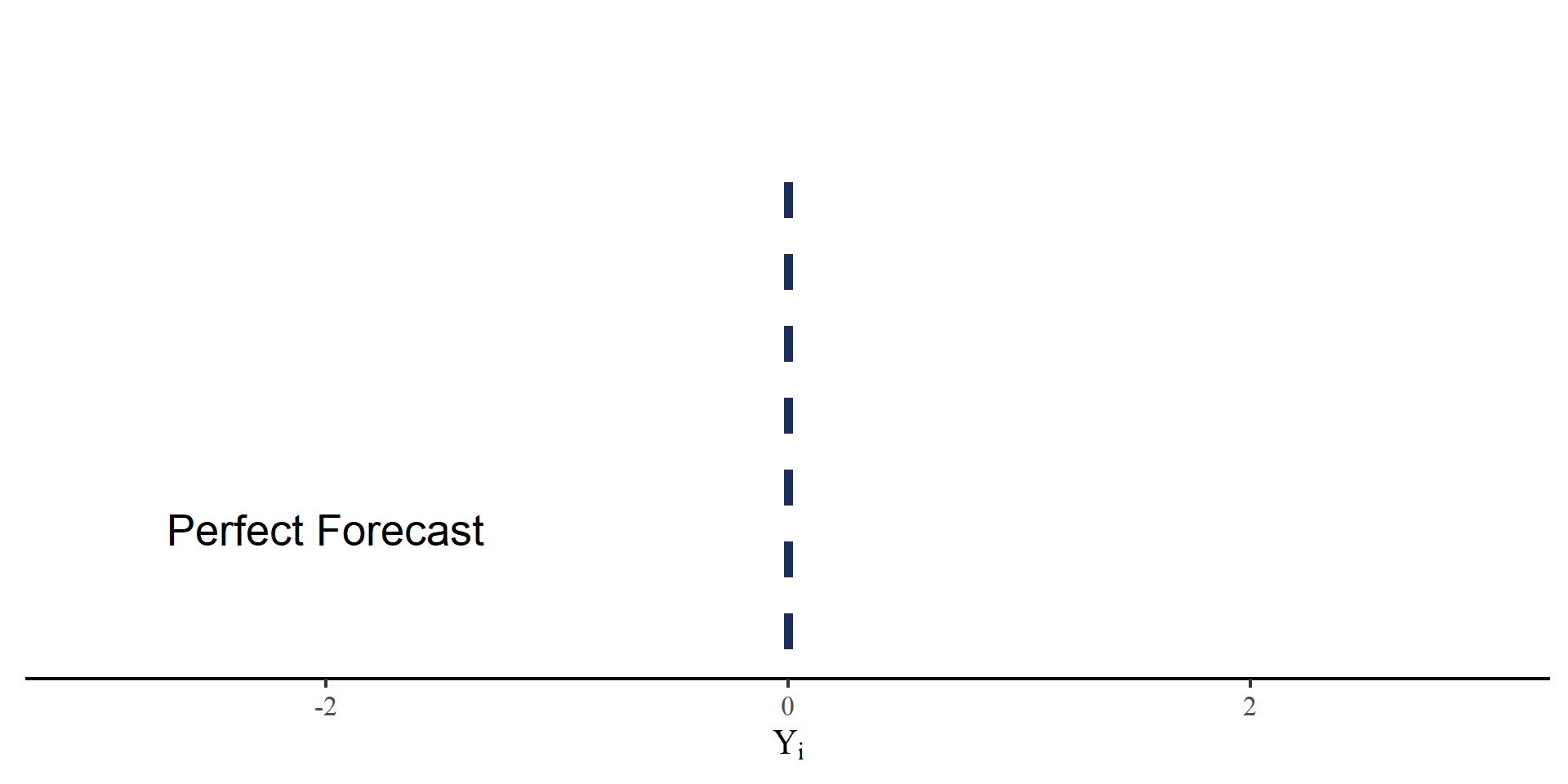

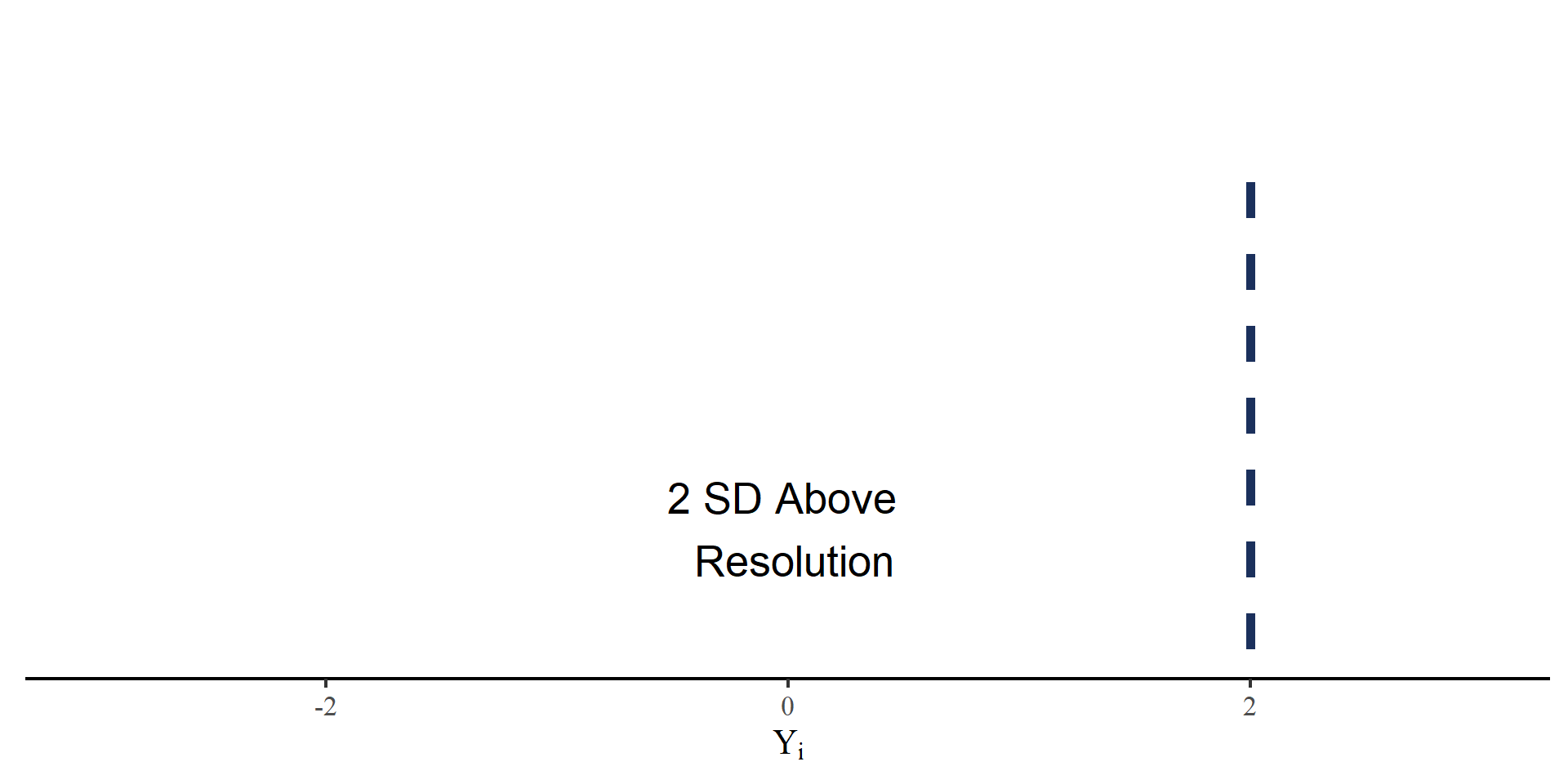

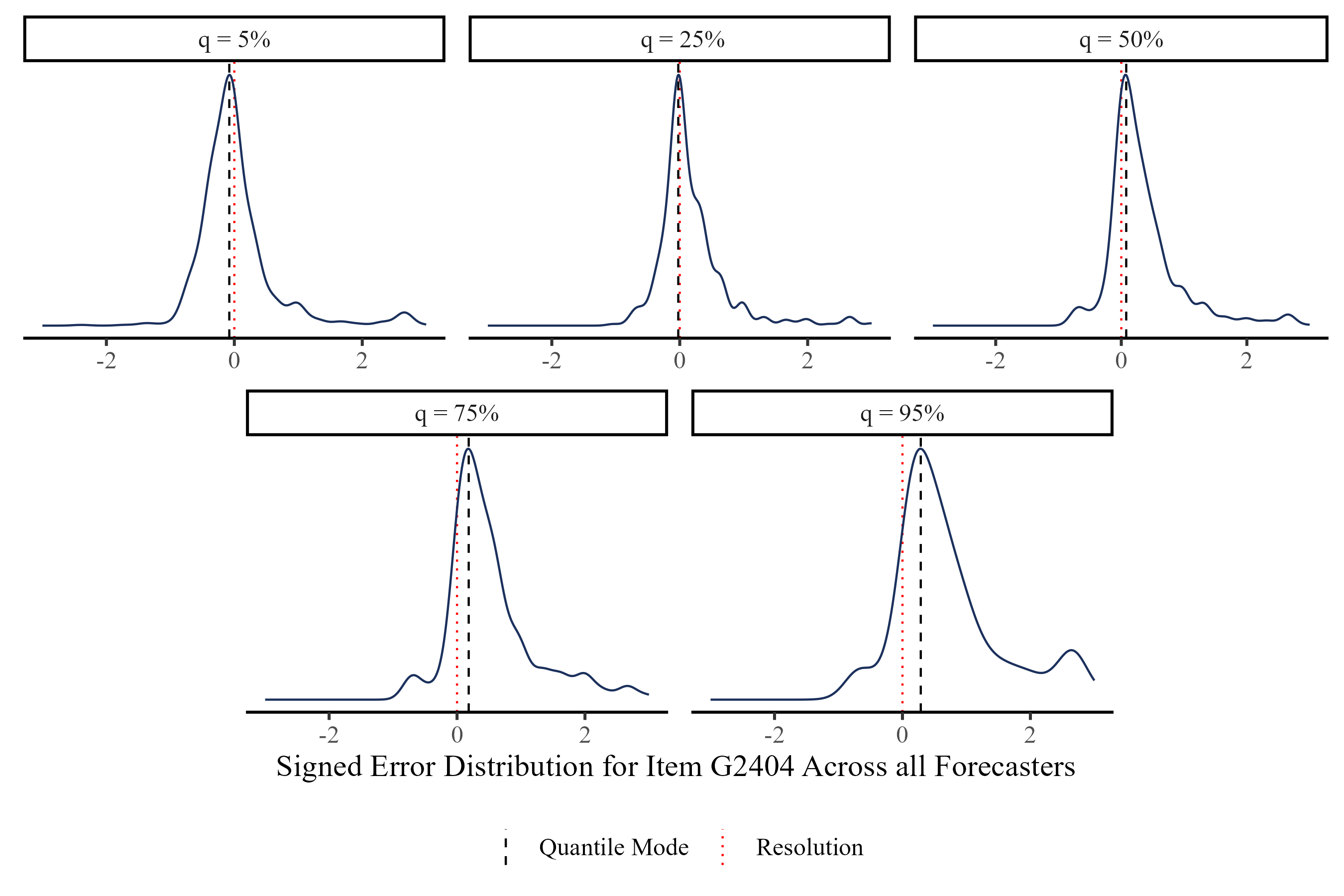

Observed Distributions of Signed Error

Item G2404: What will be the 12-month percentage change in the U.S. Consumer Price Index (CPI) for “Food” in the month between May 1, 2024 and May 31, 2024?

Modeling Signed Error

We model \(Y_{jiq}\), the signed error of person \(j\) to item \(i\) at quantile \(q\).

\[Y_{jiq} \sim \mathrm{Student\ T}(\mu_{iq}, \sigma_{ji}, \mathrm{df}_i) \\ \mu_{iq} = b_i + Q_q \times d_i \\ \sigma_{ji} = \frac{\sigma_i}{\mathrm{Exp}[a_i \times \theta_j]}\]

- \(b_i\): item bias (irreducible uncertainty)

- \(d_i\): expected quantile distance. \(Q_q\) is a vector of constants that ensures monotonicity of \(\mu_{iq}\)

- \(\sigma_i\): item difficulty

- \(\theta_j\): Forecasting ability, the only person parameter in the model

- \(a_i\): item discrimination (i.e., the magnitude of the effect of \(\theta_j\) on the response scale \(\sigma_{ji}\))
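The two structural equations of the model can be sketched as follows. All numeric values are hypothetical, including the quantile constants \(Q_q\), which the source does not list. The Student-t draw uses the standard normal over scaled chi-square construction; it is only a way to simulate from the stated likelihood, not the authors' estimation code.

```python
import math
import random

# Hypothetical quantile constants Q_q (the source does not give their values).
Q = [-2.0, -1.0, 0.0, 1.0, 2.0]

def location(b_i: float, d_i: float, q_const: float) -> float:
    """mu_iq = b_i + Q_q * d_i"""
    return b_i + q_const * d_i

def scale(sigma_i: float, a_i: float, theta_j: float) -> float:
    """sigma_ji = sigma_i / exp(a_i * theta_j)"""
    return sigma_i / math.exp(a_i * theta_j)

def sample_signed_error(rng: random.Random, mu: float, sigma: float, df: float) -> float:
    """One draw from a location-scale Student-t: mu + sigma * Z / sqrt(chi2 / df)."""
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square(df) = Gamma(shape=df/2, scale=2)
    return mu + sigma * z / math.sqrt(chi2 / df)

# Hypothetical item parameters.
b_i, d_i, sigma_i, a_i, df_i = 0.2, 0.5, 1.0, 1.0, 5.0

mus = [location(b_i, d_i, q) for q in Q]              # one location per quantile
sigma_avg = scale(sigma_i, a_i, theta_j=0.0)          # forecaster with theta = 0
sigma_strong = scale(sigma_i, a_i, theta_j=1.0)       # forecaster with theta = 1

rng = random.Random(0)
draws = [sample_signed_error(rng, mus[2], sigma_strong, df_i) for _ in range(5)]
```

With increasing \(Q_q\) and a positive \(d_i\), the locations are ordered across quantiles, and a higher \(\theta_j\) shrinks the scale, so stronger forecasters are expected to land closer to \(\mu_{iq}\).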

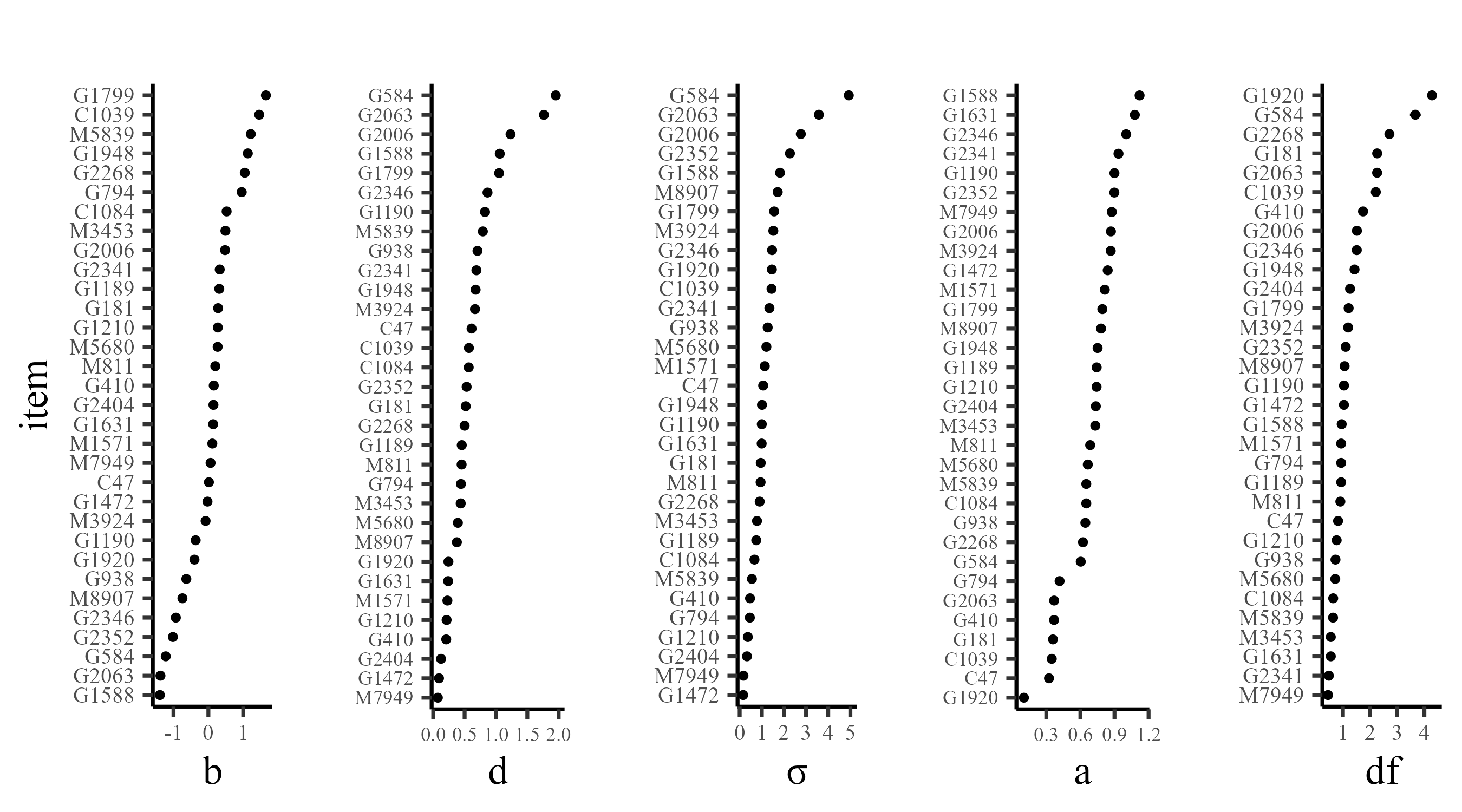

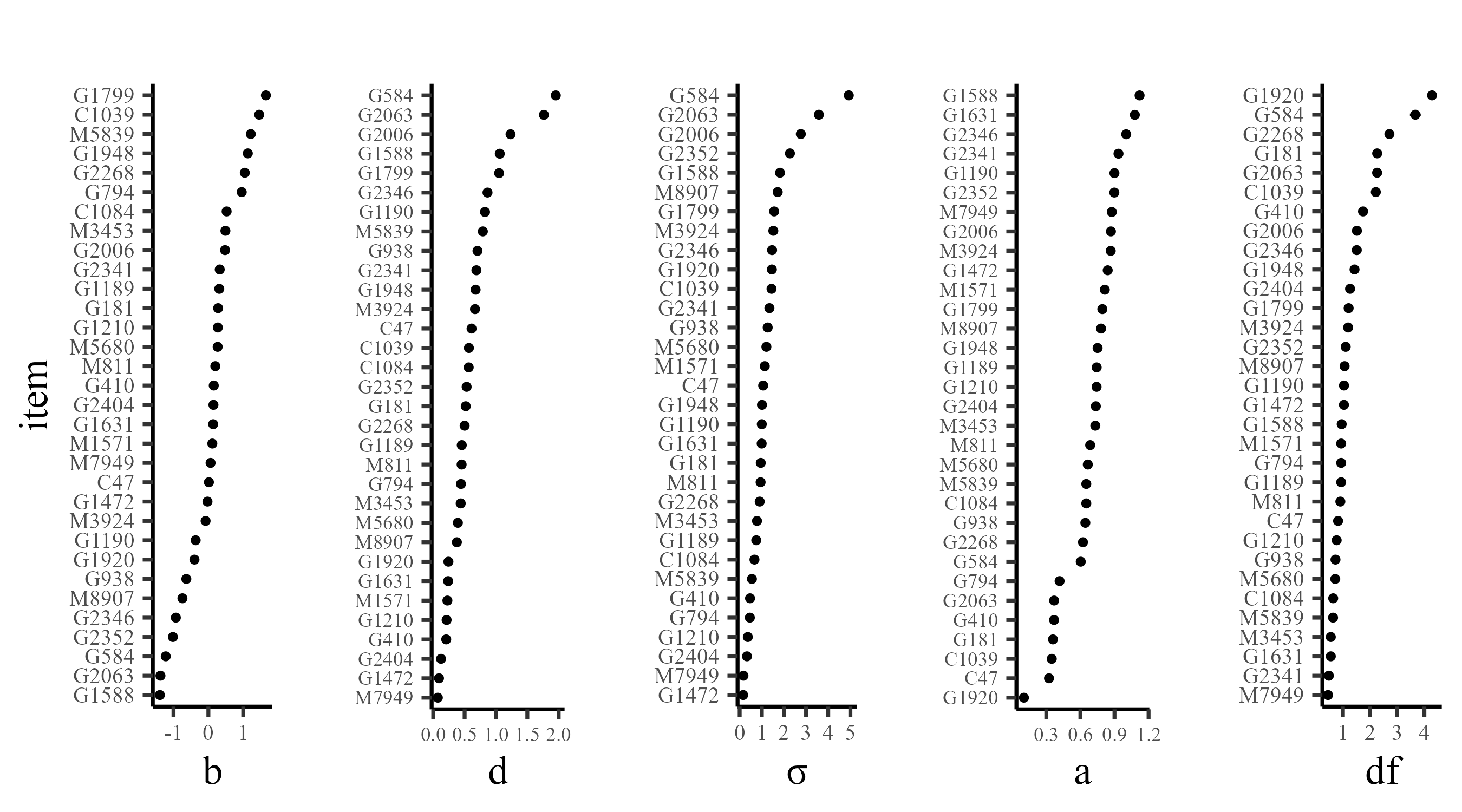

Model Estimation and Item Parameters

All models were estimated in PyMC (Abril-Pla et al., 2023) using Markov Chain Monte Carlo (MCMC) estimation (warmup = 1000, draws = 5000, approximately 40 minutes). All \(\hat{R}\) values were \(\leq 1.01\).
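For readers unfamiliar with the convergence diagnostic, the sketch below computes the classic Gelman-Rubin R-hat for one parameter from several chains. It is a simplified illustration: ArviZ, the diagnostics library commonly used with PyMC, defaults to a rank-normalized split variant, so values will not match exactly. The simulated chains are hypothetical.

```python
import math
import random
import statistics

def gelman_rubin_rhat(chains: list[list[float]]) -> float:
    """Classic (non-split) R-hat: sqrt of pooled variance estimate over within-chain variance."""
    n = len(chains[0])
    chain_means = [statistics.fmean(c) for c in chains]
    within = statistics.fmean([statistics.variance(c) for c in chains])  # W
    between_over_n = statistics.variance(chain_means)                    # B / n
    var_hat = (n - 1) / n * within + between_over_n
    return math.sqrt(var_hat / within)

rng = random.Random(1)

# Four hypothetical chains sampling the same distribution: R-hat should be near 1.
mixed = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]
rhat_mixed = gelman_rubin_rhat(mixed)

# Same setup, but one chain is stuck around a different value: R-hat is inflated.
stuck = [[x + (3.0 if k == 3 else 0.0) for x in chain] for k, chain in enumerate(mixed)]
rhat_stuck = gelman_rubin_rhat(stuck)
```

Values close to 1 indicate that the chains agree with each other; a threshold such as 1.01 is a conservative cutoff.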

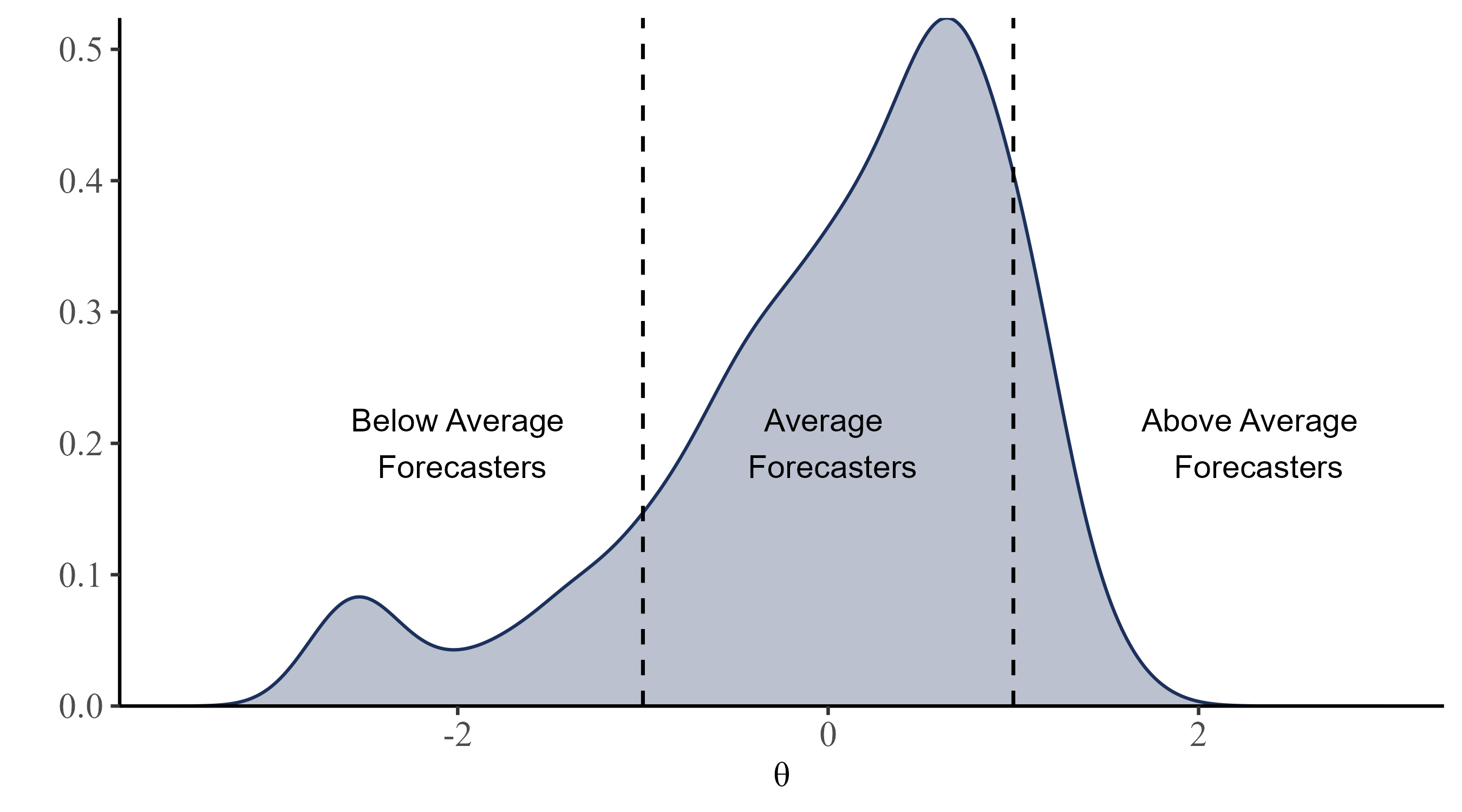

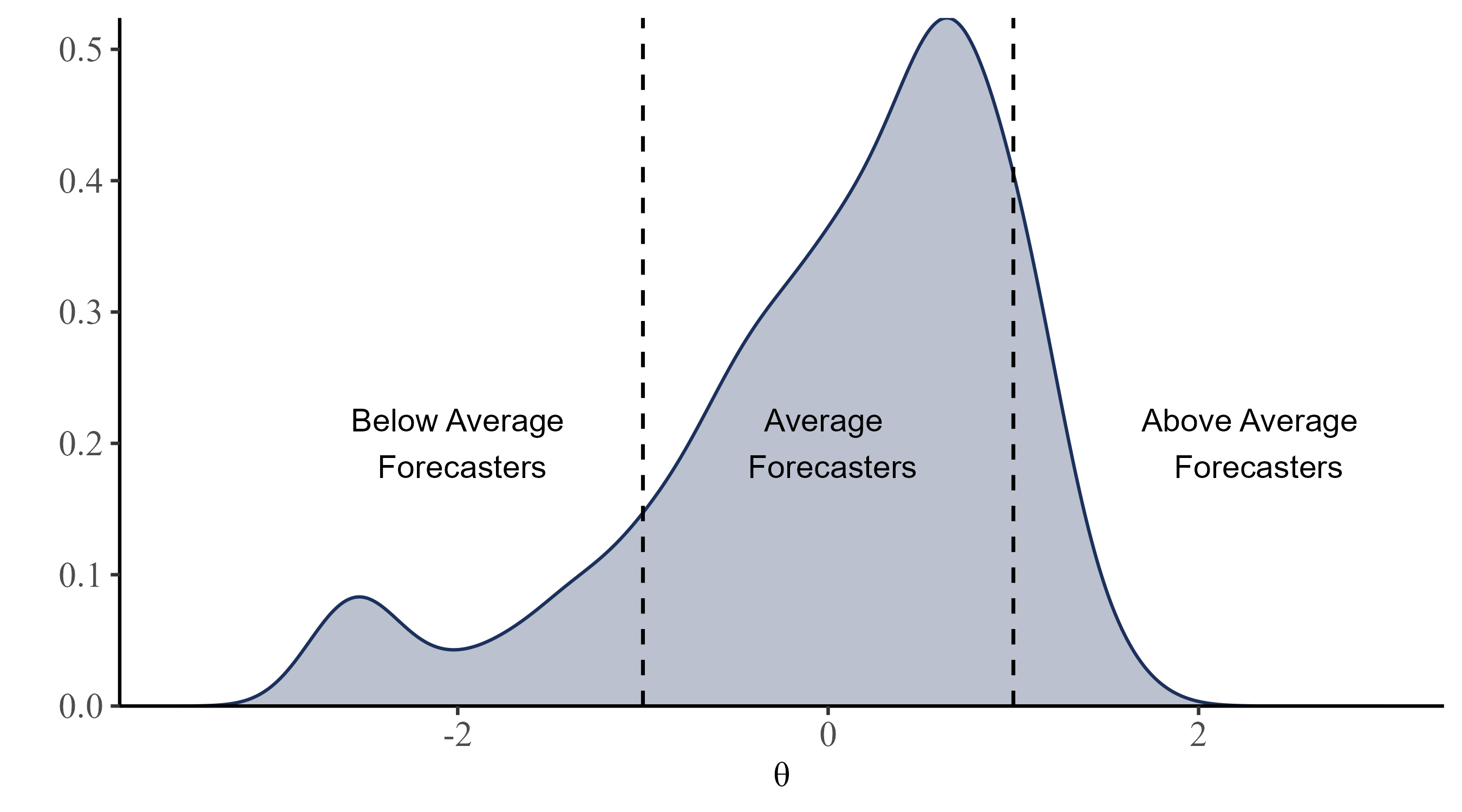

Person Parameter: \(\theta\)

Distribution of \(\theta\) for the 1194 forecasters (better forecasters have higher \(\theta\) values).

Who Gets Higher \(\theta\)s?

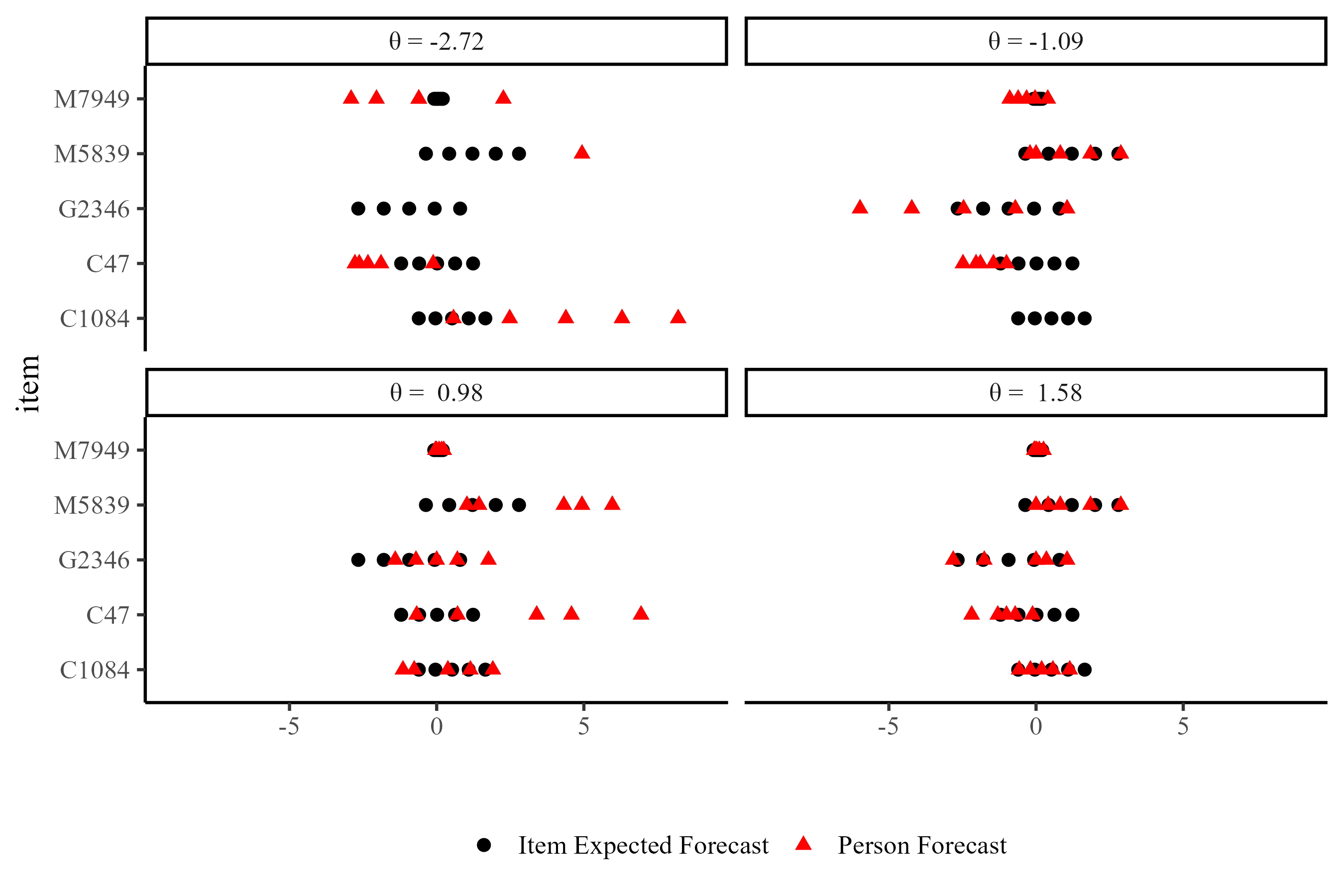

For any set of quantile forecasts, \(\theta\) is maximized if and only if all person forecasts equal exactly the corresponding item expected forecasts.

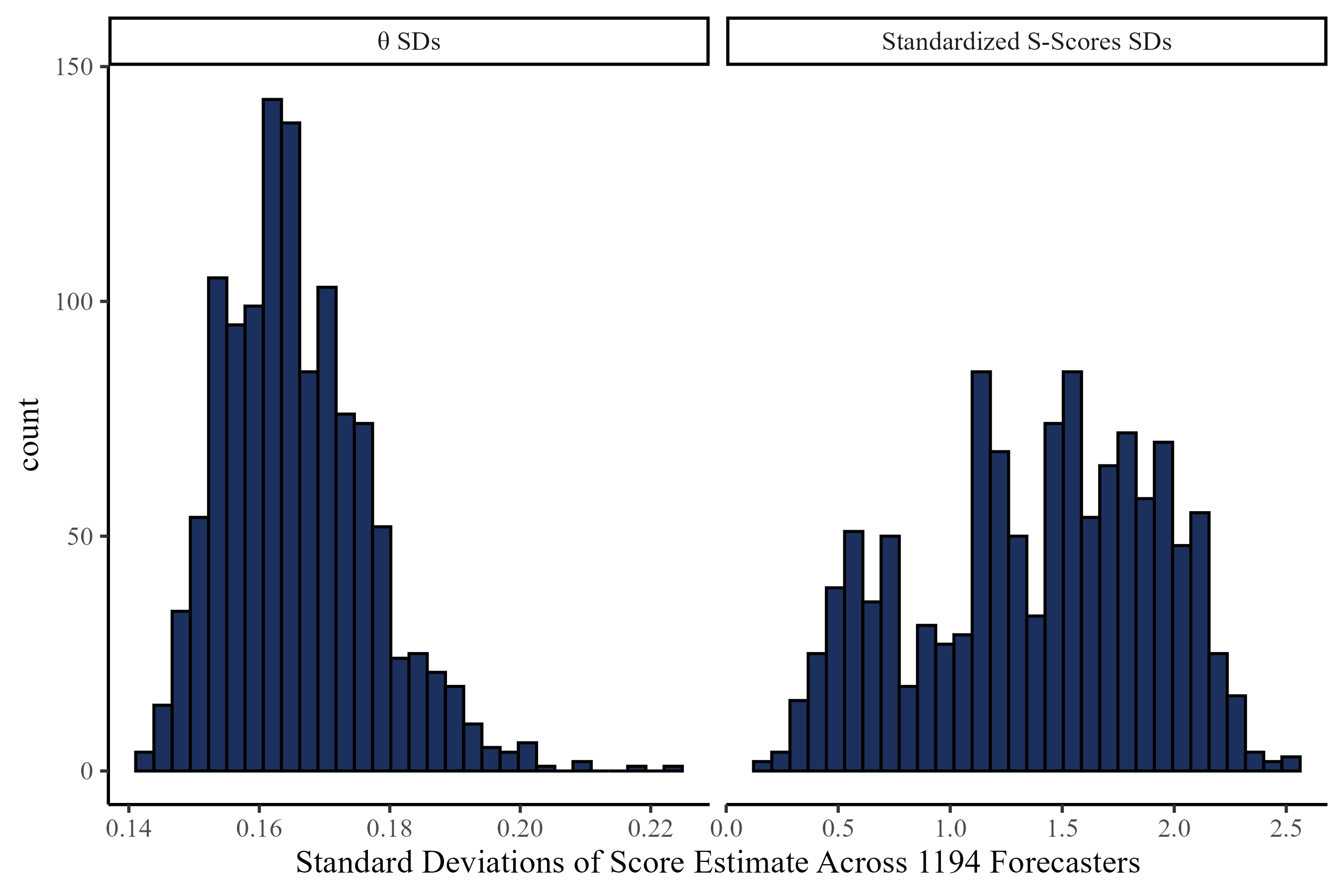

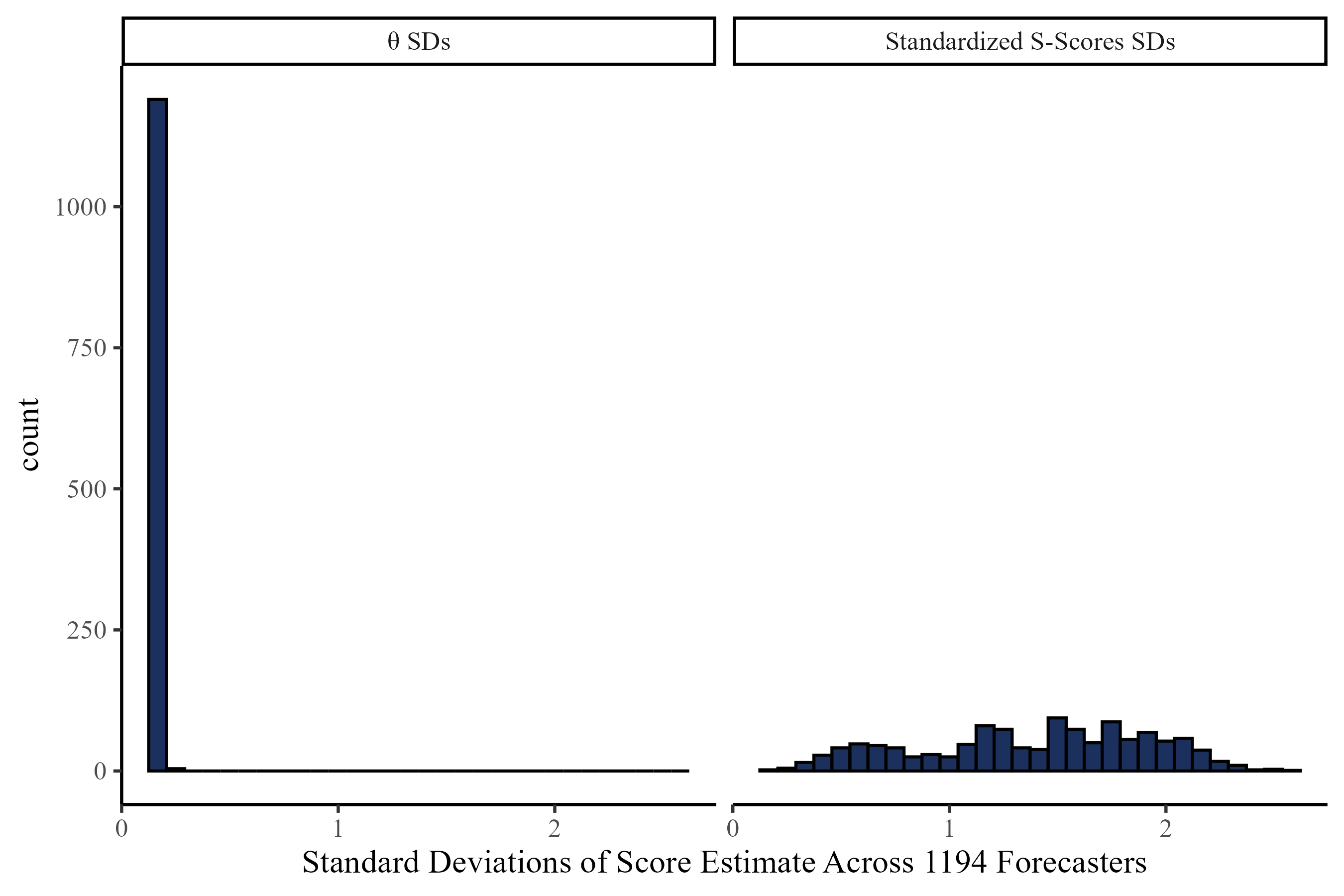

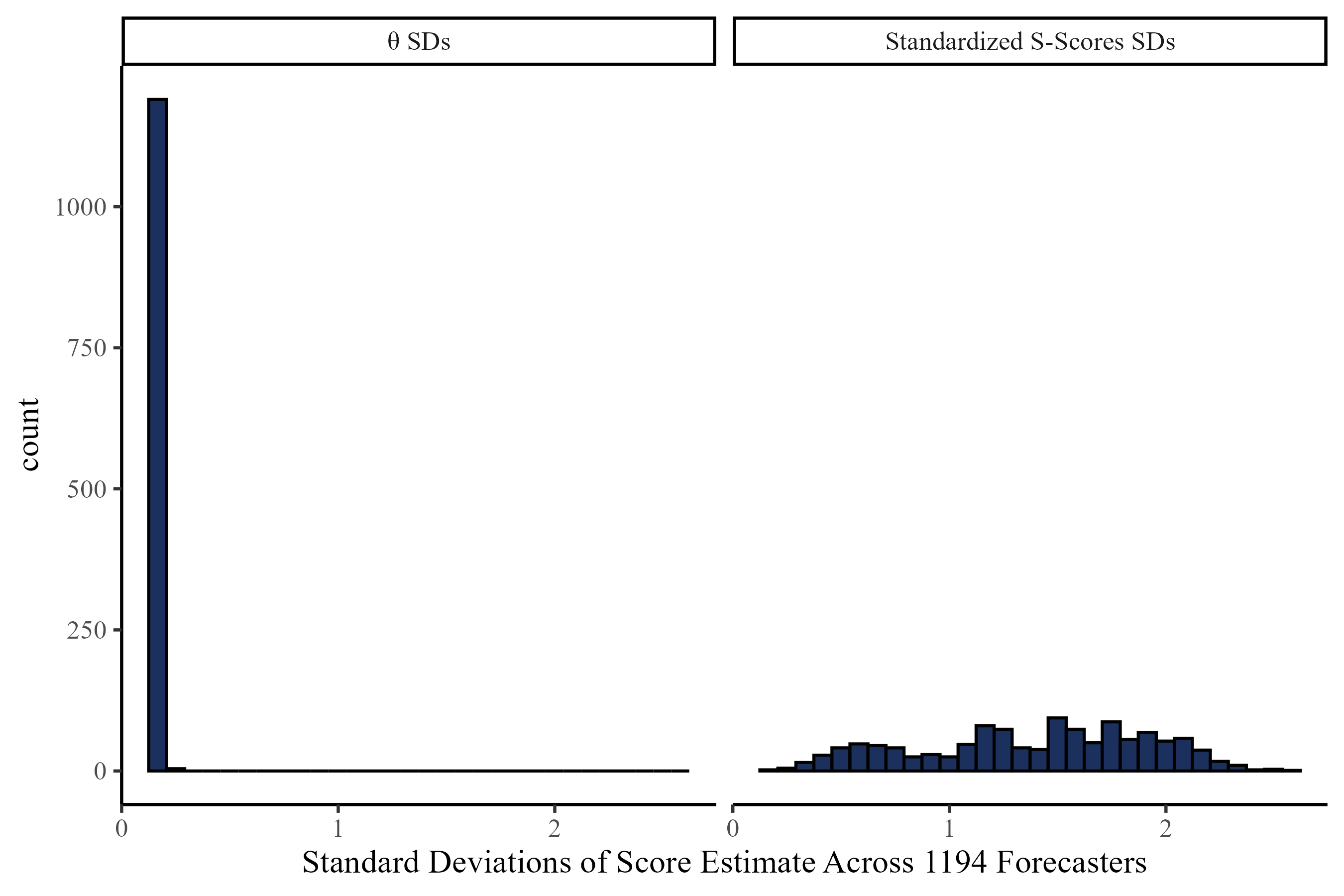

Uncertainty Around \(\theta\) and S-Scores

\(\theta\) estimates carry less uncertainty than S-scores:

Predicting Out of Sample Accuracy

Takeaways

- This approach captures meaningful differences across FPT items (e.g., bias, difficulty, discrimination)

- The \(\theta\) metric is easily understood and has good statistical properties

- The \(\theta\) metric predicts out-of-sample accuracy well

\(\theta\) and S-Scores?

References And Contacts

Appendix

Negative Log-Likelihood of \(\theta\)

Negative log-likelihood function of \(\theta\) given item parameters and forecast:

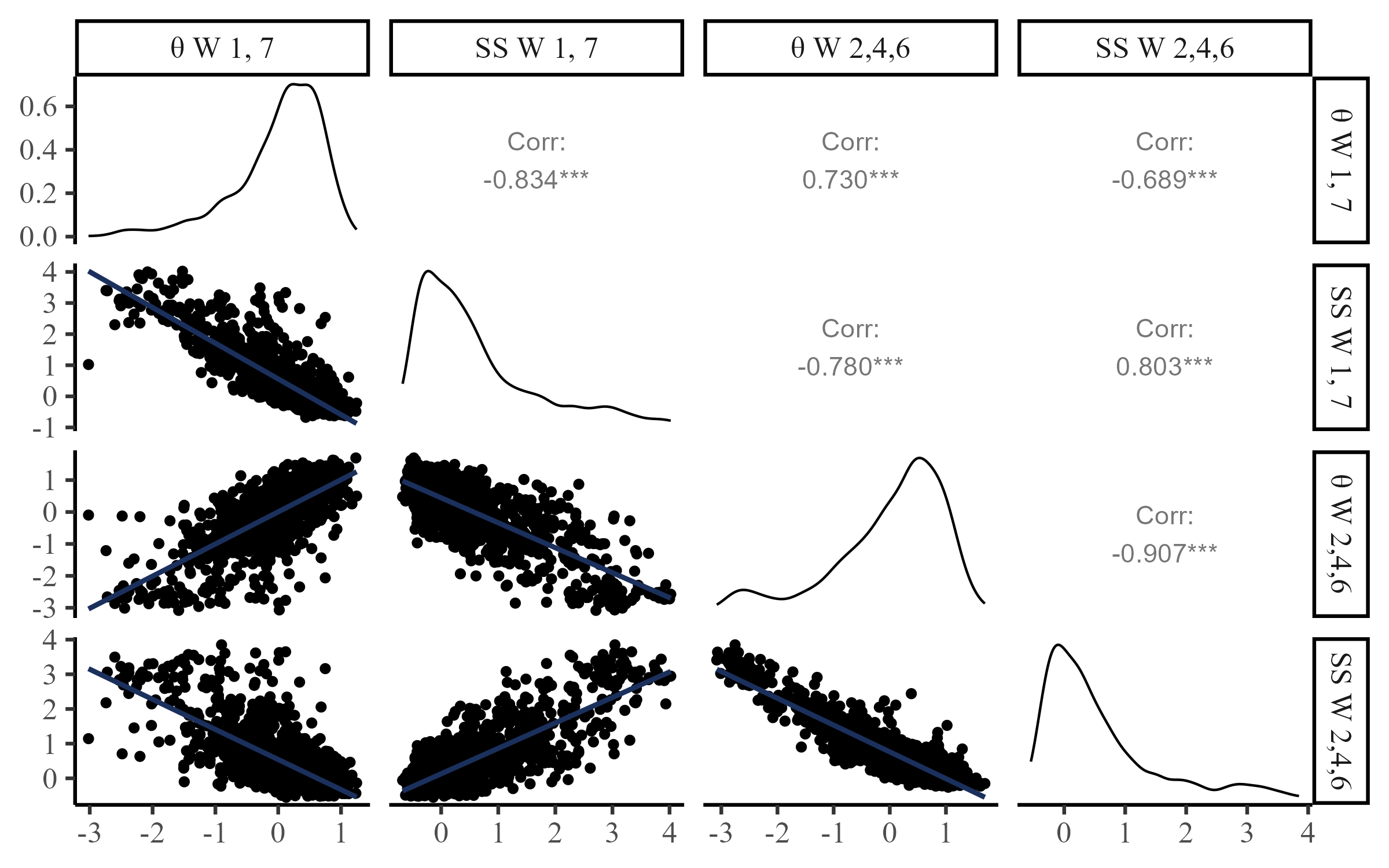

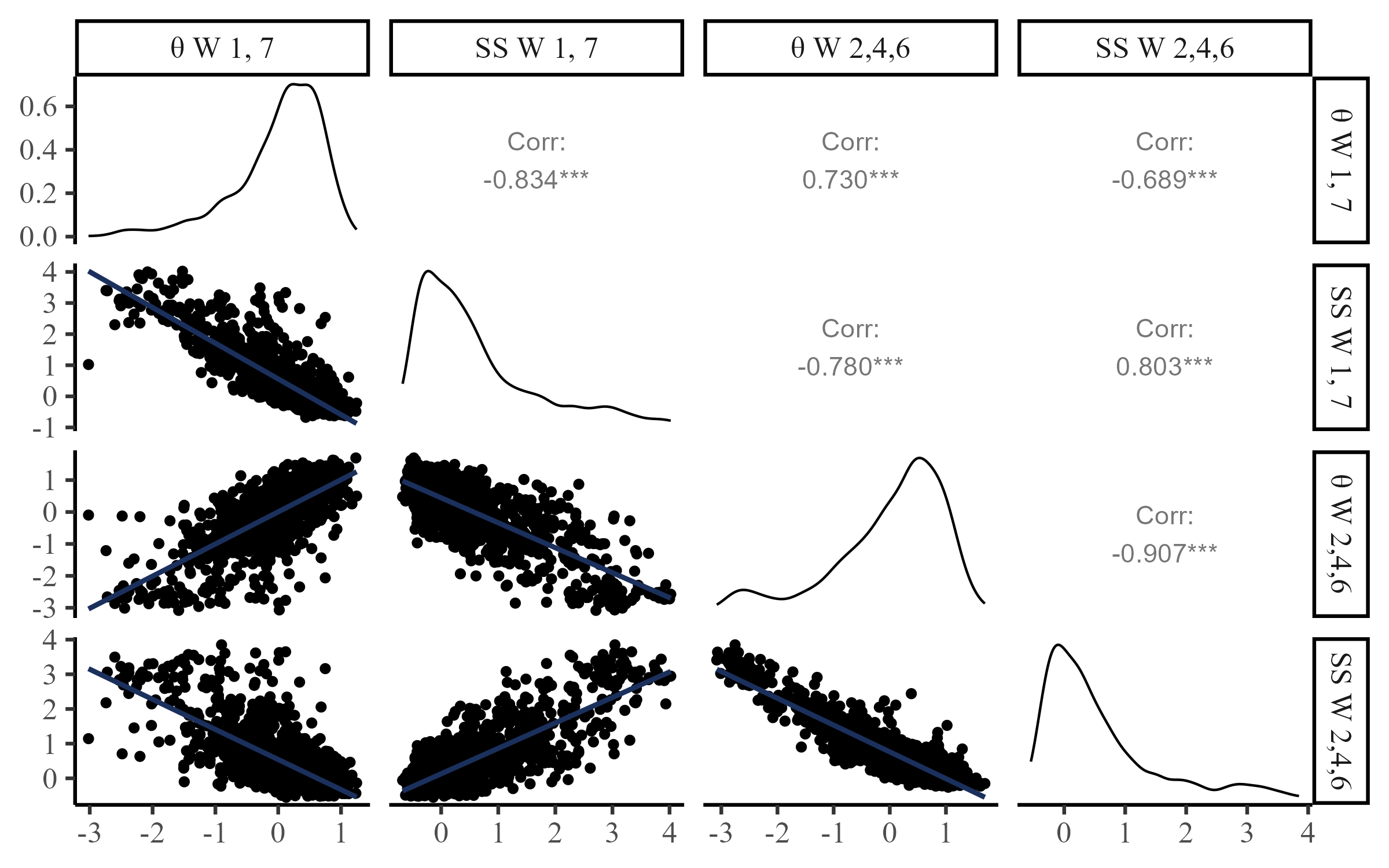

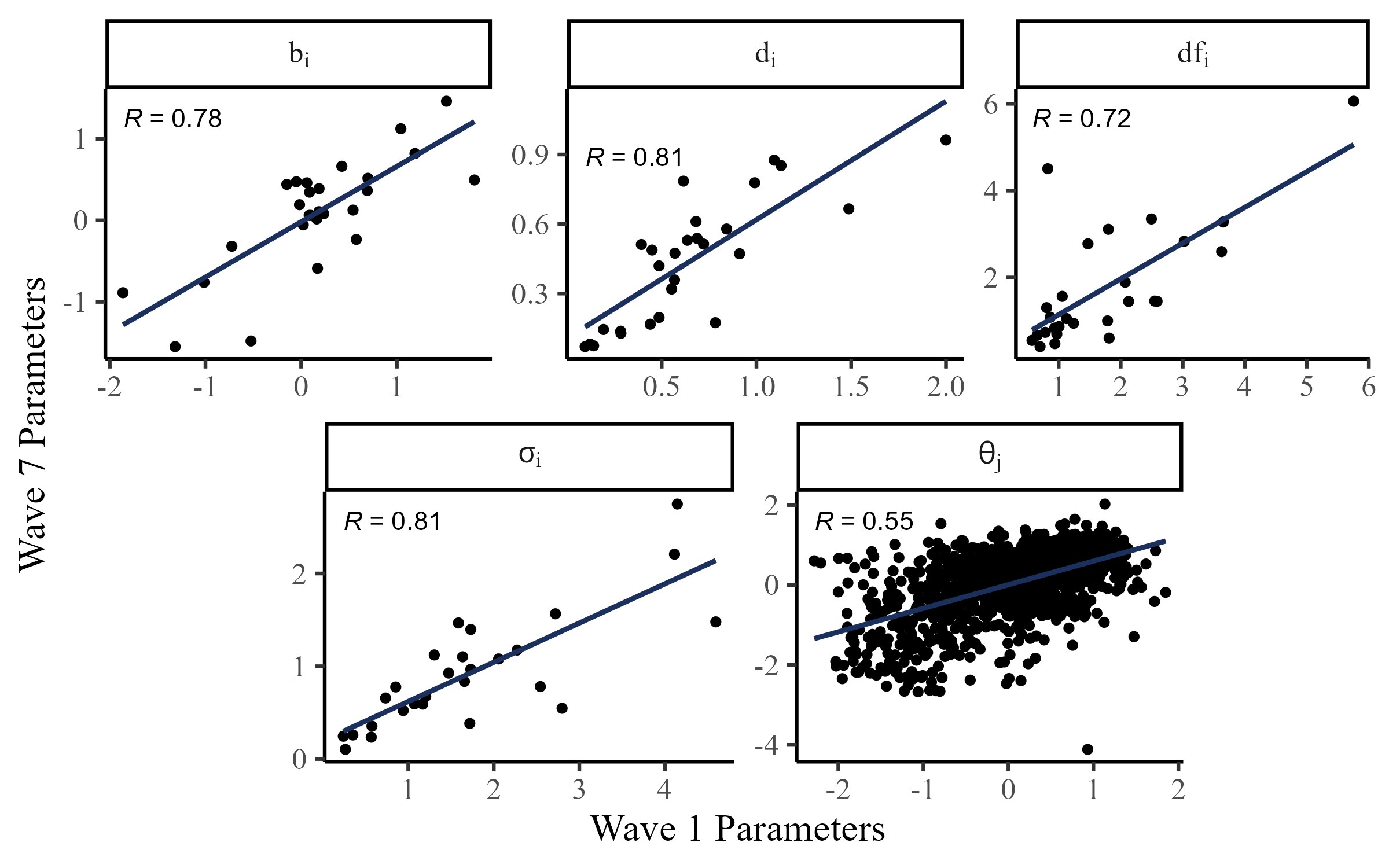
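A minimal sketch of this negative log-likelihood, assuming the Student-t likelihood from the model section with \(\sigma_{ji} = \sigma_i / \mathrm{Exp}[a_i \times \theta_j]\) and no prior on \(\theta\). The response tuples and the grid search are hypothetical illustrations, not the authors' estimation procedure.

```python
import math

def student_t_logpdf(y: float, mu: float, sigma: float, df: float) -> float:
    """Log density of a location-scale Student-t distribution."""
    z = (y - mu) / sigma
    return (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
            - 0.5 * math.log(df * math.pi) - math.log(sigma)
            - (df + 1) / 2 * math.log1p(z * z / df))

def neg_log_likelihood(theta: float, responses: list[tuple]) -> float:
    """Sum of -log p(y | mu, sigma_i / exp(a_i * theta), df) over a person's responses."""
    total = 0.0
    for y, mu, sigma_i, a_i, df in responses:
        sigma = sigma_i / math.exp(a_i * theta)
        total -= student_t_logpdf(y, mu, sigma, df)
    return total

# Hypothetical responses: (signed error, mu_iq, sigma_i, a_i, df_i).
responses = [(0.4, 0.1, 1.0, 1.0, 5.0),
             (-0.3, -0.2, 0.8, 1.2, 5.0),
             (0.9, 0.5, 1.1, 0.9, 5.0)]

# Simple grid search over theta in [-3, 3] with step 0.01.
grid = [k / 100 for k in range(-300, 301)]
theta_hat = min(grid, key=lambda t: neg_log_likelihood(t, responses))

# A response that sits exactly on the expected forecast (zero residual).
perfect = [(0.1, 0.1, 1.0, 1.0, 5.0)]
nll_perfect = [neg_log_likelihood(t, perfect) for t in (0.0, 1.0, 2.0)]
```

In this sketch a nonzero residual yields a finite minimizer, while a zero residual makes the function decrease without bound as \(\theta\) grows, which is consistent with the statement that \(\theta\) is maximized when forecasts equal the item expected forecasts.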

Stability of Parameters

Given the complexity of the FPT items, item parameters are likely to change depending on many factors. Still, there seems to be reasonable stability even after a month between Wave 1 and Wave 7 (test-retest):

SPUDM 2025