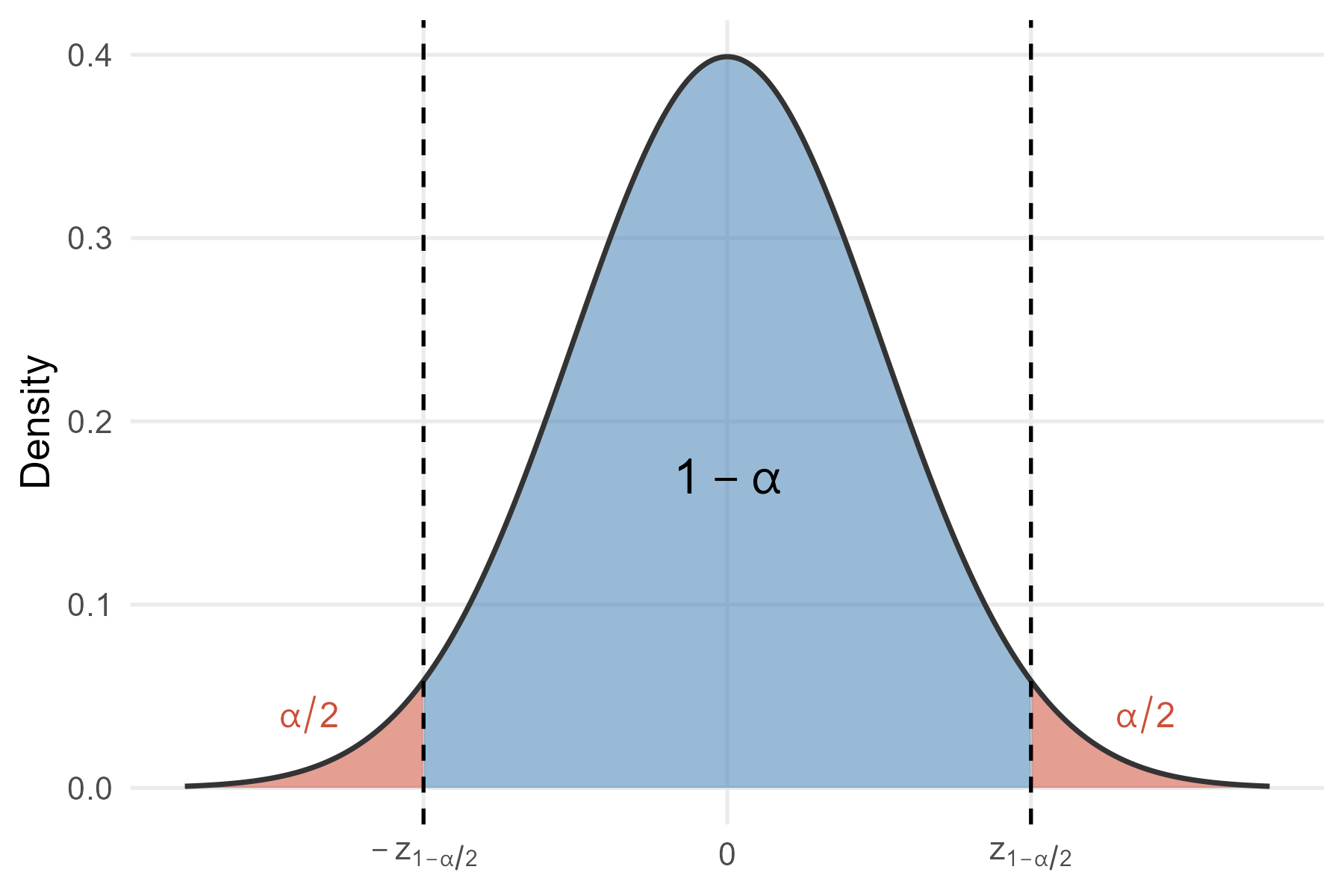

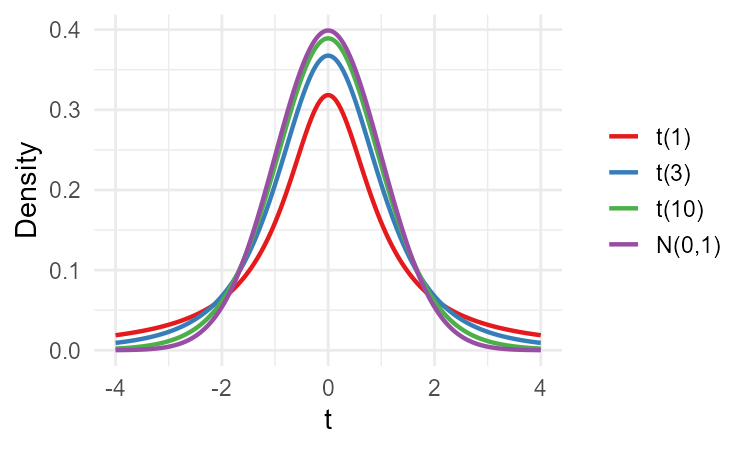

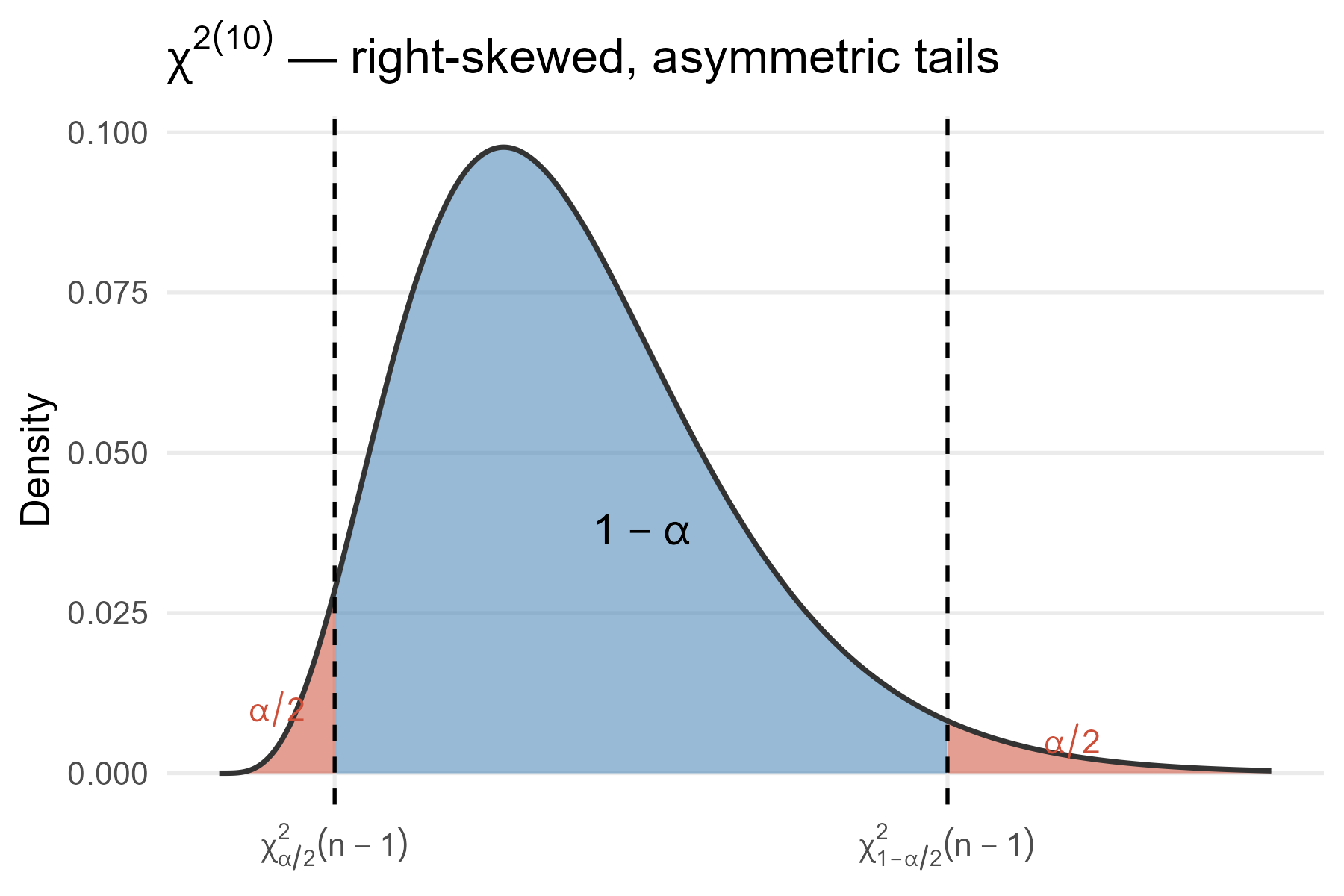

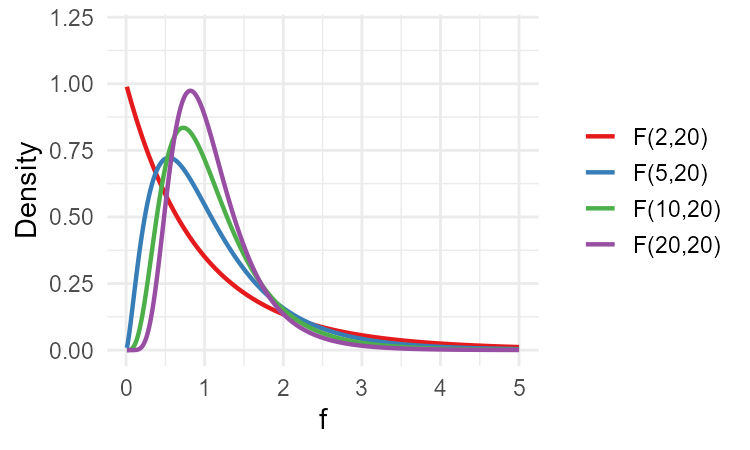

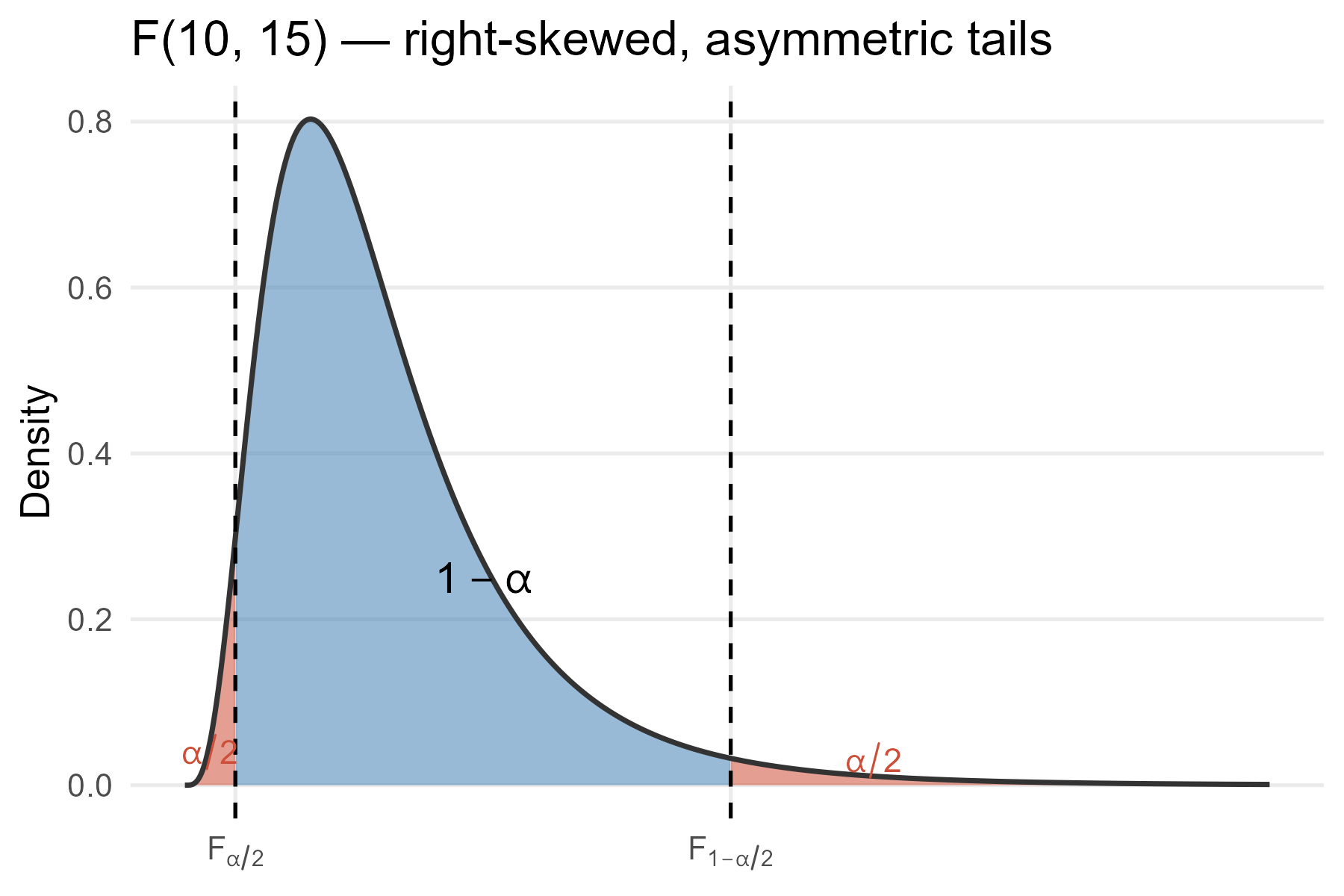

class: center, inverse, middle <style>.xe__progress-bar__container { top:0; opacity: 1; position:absolute; right:0; left: 0; } .xe__progress-bar { height: 0.25em; background-color: #808080; width: calc(var(--slide-current) / var(--slide-total) * 100%); } .remark-visible .xe__progress-bar { animation: xe__progress-bar__wipe 200ms forwards; animation-timing-function: cubic-bezier(.86,0,.07,1); } @keyframes xe__progress-bar__wipe { 0% { width: calc(var(--slide-previous) / var(--slide-total) * 100%); } 100% { width: calc(var(--slide-current) / var(--slide-total) * 100%); } }</style> <style type="text/css"> .pull-left { float: left; width: 44%; } .pull-right { float: right; width: 44%; } .pull-right ~ p { clear: both; } .pull-left-wide { float: left; width: 66%; } .pull-right-wide { float: right; width: 66%; } .pull-right-wide ~ p { clear: both; } .pull-left-narrow { float: left; width: 30%; } .pull-right-narrow { float: right; width: 30%; } .tiny123 { font-size: 0.40em; } .small123 { font-size: 0.80em; } .large123 { font-size: 2em; } .red { color: red } .orange { color: orange } .green { color: green } </style> # Statistics ## Confidence intervals ### (Chapter 13) ### Christian Vedel,<br>Department of Economics<br>University of Southern Denmark ### Email: [christian-vs@sam.sdu.dk](mailto:christian-vs@sam.sdu.dk) ### Updated 2026-05-05 --- class: middle # Today's lecture .pull-left-wide[ **Turning point estimates into intervals that quantify uncertainty** - **Section 1:** Confidence interval for the mean value - **Section 2:** Confidence interval for the variance - **Section 3:** Confidence interval for the difference in means - **Section 4:** Confidence interval for the ratio of two variances - **Section 5:** Determining the required sample size ] .pull-right-narrow[  ] --- class: inverse, middle, center # Confidence interval for the mean value --- # Why confidence intervals? 
.pull-left-wide[ - Estimators such as `\(\bar{X}\)` are random variables — the probability that they exactly equal the population value is virtually zero - We hope they are "close" to the true value, but how close? ] -- .pull-left-wide[ - A **confidence interval** uses the sampling distribution of the estimator to construct a range of values that conveys this uncertainty: - a point estimate gives you one number - a confidence interval gives you a range and a probability that the range contains the true value ] --- # Example: average income in a population .pull-left-wide[ - Suppose we want to estimate the average monthly income `\(\mu\)` in a large population - We cannot survey everyone — instead we draw a simple random sample of `\(n = 100\)` households - We observe: `\(\bar{X} = 35{,}200\)` DKK and `\(S = 12{,}000\)` DKK ] -- .pull-left-wide[ - The point estimate `\(\bar{X} = 35{,}200\)` DKK is our best single guess — but we know it will not equal `\(\mu\)` exactly - A 95% confidence interval (using the formula we will derive) gives: `$$\hat{I} = \left[35{,}200 - 1.96 \cdot \frac{12{,}000}{\sqrt{100}},\;\; 35{,}200 + 1.96 \cdot \frac{12{,}000}{\sqrt{100}}\right] \approx [32{,}848,\; 37{,}552] \text{ DKK}$$` - In 95% of samples drawn this way, the resulting interval would contain the true mean income — we will now derive where this formula comes from ] --- # Setting up the CI for `\(\mu\)` .pull-left-wide[ - We want an interval `\(\hat{I} = [W_l, W_u]\)` centred around `\(\bar{X}\)`: `$$\hat{I} = \left[\bar{X} - k,\; \bar{X} + k\right]$$` - Because `\(\bar{X}\)` is random, the bounds are random — the interval will not always contain `\(\mu\)` ] -- .pull-left-wide[ - We choose the **confidence level** `\(1 - \alpha\)`: the probability that the interval contains `\(\mu\)` `$$1 - \alpha = P\!\left(\mu \in \hat{I}\right) = P\!\left(\bar{X} - k \leq \mu \leq \bar{X} + k\right)$$` ] --- # Finding `\(k\)`: standardising `\(\bar{X}\)` .pull-left-wide[ - For a simple 
random sample, `\(\bar{X} \overset{a}{\sim} \mathcal{N}\!\left(\mu, \sigma^2/n\right)\)`, so: `$$Z = \frac{\bar{X} - \mu}{\sigma / \sqrt{n}} \overset{a}{\sim} \mathcal{N}(0, 1)$$` ] -- .pull-left-wide[ - Rewriting the coverage condition in terms of `\(Z\)`: `$$1 - \alpha = P\!\left(-\frac{\sqrt{n}\,k}{\sigma} \leq Z \leq \frac{\sqrt{n}\,k}{\sigma}\right) = \Phi(a) - \Phi(-a)$$` where `\(a = \sqrt{n}\,k/\sigma\)` ] -- .pull-left-wide[ - The **critical value** `\(z_{1-\alpha/2}\)` satisfies `\(\Phi(z_{1-\alpha/2}) = 1 - \alpha/2\)`, giving `\(a = z_{1-\alpha/2}\)` and: `$$k = \frac{z_{1-\alpha/2} \cdot \sigma}{\sqrt{n}}$$` ] --- # Illustration: standard normal and the CI for `\(\mu\)` .pull-left-narrow[ - Symmetric around 0 → CI is symmetric around `\(\bar{X}\)` - Equal tail areas `\(\alpha/2\)` on each side - Critical values `\(\pm z_{1-\alpha/2}\)` are equidistant from centre ] .pull-right-wide[  ] --- # CI for `\(\mu\)`: simple random sample .pull-left-wide[ > **CI for `\(\mu\)`, `\(\sigma\)` known (approximate):** `$$\hat{I} = \left[\bar{X} - z_{1-\alpha/2} \cdot \frac{\sigma}{\sqrt{n}},\;\; \bar{X} + z_{1-\alpha/2} \cdot \frac{\sigma}{\sqrt{n}}\right]$$` ] -- .pull-left-wide[ **Common critical values:** | Confidence level | `\(z_{1-\alpha/2}\)` | |---|---| | 90% | 1.645 | | 95% | 1.960 | | 99% | 2.576 | ] --- # CI for `\(\mu\)`: `\(\sigma\)` unknown .pull-left-wide[ - In practice `\(\sigma\)` is rarely known — we replace it with the sample standard deviation `\(S\)` ] -- .pull-left-wide[ > **CI for `\(\mu\)`, `\(\sigma\)` unknown (approximate):** `$$\hat{I} = \left[\bar{X} - z_{1-\alpha/2} \cdot \sqrt{\frac{S^2}{n}},\;\; \bar{X} + z_{1-\alpha/2} \cdot \sqrt{\frac{S^2}{n}}\right]$$` - Approximate because it relies on the approximate normal distribution of `\(\bar{X}\)` - If we know the population distribution of `\(X\)`, we can do better ] --- # Exact CI: `\(X \sim \mathcal{N}(\mu, \sigma^2)\)`, `\(\sigma\)` known .pull-left-wide[ - If `\(X\)` is normally 
distributed and `\(\sigma^2\)` is known, then `\(Z = \sqrt{n}(\bar{X} - \mu)/\sigma\)` is **exactly** `\(\mathcal{N}(0,1)\)` ] -- .pull-left-wide[ > **Exact CI for `\(\mu\)`, normal population, `\(\sigma\)` known:** `$$\hat{I} = \left[\bar{X} - z_{1-\alpha/2} \cdot \frac{\sigma}{\sqrt{n}},\;\; \bar{X} + z_{1-\alpha/2} \cdot \frac{\sigma}{\sqrt{n}}\right]$$` - Same formula as before — but now exact, not approximate ] --- # The Student's `\(t\)`-distribution .pull-left[ > If `\(Z \sim \mathcal{N}(0,1)\)` and `\(Y \sim \chi^2(k)\)` are independent: `$$T = \frac{Z}{\sqrt{Y/k}} \sim t(k)$$` - `\(E(T) = 0\)` (for `\(k > 1\)`), `\(\quad Var(T) = \dfrac{k}{k-2}\)` (for `\(k > 2\)`) - Heavier tails than normal; `\(t(k) \to \mathcal{N}(0,1)\)` as `\(k \to \infty\)` - Used for CI when `\(\sigma^2\)` is unknown — which is exactly what we do next ] .pull-right[  ] --- # Exact CI: `\(X \sim \mathcal{N}(\mu, \sigma^2)\)`, `\(\sigma\)` unknown .pull-left-wide[ - When `\(\sigma^2\)` is unknown, replacing it with `\(S^2\)` changes the distribution of the pivot - It can be shown that: `$$t = \frac{\bar{X} - \mu}{\sqrt{S^2/n}} \sim t(n-1)$$` - The ***t*-distribution** has heavier tails than the normal; as `\(n \to \infty\)` it converges to `\(\mathcal{N}(0,1)\)` ] -- .pull-left-wide[ > **Exact CI for `\(\mu\)`, normal population, `\(\sigma\)` unknown:** `$$\hat{I} = \left[\bar{X} - t_{1-\alpha/2}(n-1) \cdot \sqrt{\frac{S^2}{n}},\;\; \bar{X} + t_{1-\alpha/2}(n-1) \cdot \sqrt{\frac{S^2}{n}}\right]$$` ] --- # `\(t\)` vs `\(z\)`: when does it matter? 
.pull-left-wide[ - The `\(t(k)\)` distribution converges to `\(\mathcal{N}(0,1)\)` as `\(k \to \infty\)` — for large samples the two are nearly identical - **Rule of thumb:** - `\(n \gtrsim 30\)`: the normal approximation is usually acceptable in practice - `\(n \gtrsim 100\)`: safe to use `\(z\)` even if the population distribution is moderately skewed ] -- .pull-left-wide[ - **Practical advice:** when `\(\sigma^2\)` is unknown, default to `\(t\)` — it is exact for normal populations and reduces to `\(z\)` for large samples - This rule applies generally across all the CIs in this lecture ] --- # CI for `\(\mu\)`: `\(X \sim Bernoulli(p)\)` .pull-left-wide[ - If `\(X \sim Bernoulli(p)\)`, then `\(E(X) = p\)` and `\(Var(X) = p(1-p)\)` - The sample mean `\(\bar{X}\)` estimates `\(p\)`; we estimate the variance as `\(\bar{X}(1 - \bar{X})\)` - This exploits the Bernoulli structure and is more efficient than the general `\(S^2\)` ] -- .pull-left-wide[ > **Approximate CI for `\(p\)`:** `$$\hat{I} = \left[\bar{X} - z_{1-\alpha/2} \cdot \sqrt{\frac{\bar{X}(1-\bar{X})}{n}},\;\; \bar{X} + z_{1-\alpha/2} \cdot \sqrt{\frac{\bar{X}(1-\bar{X})}{n}}\right]$$` ] --- # CI for `\(\mu\)`: stratified sample .pull-left-wide[ - For a stratified sample, we use `\(\bar{X}_{st} = \sum_{j=1}^m w_j \bar{X}_j\)` as the estimator - We estimate `\(Var(\bar{X}_{st}) = \sum_{j=1}^m w_j^2 \sigma_j^2/n_j\)` by replacing `\(\sigma_j^2\)` with `\(S_j^2\)`: `$$\widehat{Var}(\bar{X}_{st}) = \sum_{j=1}^m w_j^2 \cdot \frac{S_j^2}{n_j}$$` ] -- .pull-left-wide[ > **Approximate CI for `\(\mu\)`, stratified sample:** `$$\hat{I} = \left[\bar{X}_{st} - z_{1-\alpha/2} \cdot \sqrt{\widehat{Var}(\bar{X}_{st})},\;\; \bar{X}_{st} + z_{1-\alpha/2} \cdot \sqrt{\widehat{Var}(\bar{X}_{st})}\right]$$` ] --- # .red[Raise your hand 1: CI for the mean] <div class="countdown" id="timer_534d70e4" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button 
class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** A 95% CI for `\(\mu\)` is `\([45, 55]\)`. Which statement is correct? - **A)** There is a 95% probability that `\(\mu\)` lies in `\([45, 55]\)` - **B)** 95% of future sample means will fall in `\([45, 55]\)` - **C)** If we repeated the sampling many times, 95% of the resulting intervals would contain `\(\mu\)` ] -- .pull-left-wide[ **Q2.** When should we use the `\(t\)`-distribution rather than the normal for a CI for `\(\mu\)`? - **A)** When the population is normal and `\(\sigma^2\)` is unknown — the `\(t\)` arises from estimating `\(\sigma\)` with `\(S\)` - **B)** Whenever the sample size is small (`\(n < 30\)`) - **C)** Whenever the population distribution is unknown ] --- # .red[Practice 1: CI for the mean] .pull-left-wide[ A random sample of `\(n = 25\)` students has mean exam score `\(\bar{X} = 68\)` and sample standard deviation `\(S = 10\)`. Assume scores are normally distributed. 1. Construct a 95% CI for the population mean `\(\mu\)` using the `\(t\)`-distribution 2. How does the CI change if `\(n = 100\)` instead? (Recalculate) 3. If the population standard deviation were known to be `\(\sigma = 10\)`, would you use `\(z\)` or `\(t\)`? 
Construct that CI for `\(n = 25\)` *Hint:* `\(t_{0.975}(24) \approx 2.064\)`; `\(t_{0.975}(99) \approx 1.984\)`; `\(z_{0.975} = 1.96\)` ] --- class: inverse, middle, center # Confidence interval for the variance --- # CI for the variance .pull-left-wide[ - The sample variance `\(S^2\)` estimates `\(\sigma^2\)`; to build a CI we need its sampling distribution - When `\(X \sim \mathcal{N}(\mu, \sigma^2)\)`, it can be shown that: `$$Y = \frac{(n-1) S^2}{\sigma^2} \sim \chi^2(n-1)$$` - The `\(\chi^2\)` distribution is right-skewed — so the CI will be **asymmetric** around `\(S^2\)` ] -- .pull-left-wide[ - Trapping `\(Y\)` between its `\(\alpha/2\)` and `\(1-\alpha/2\)` quantiles and rearranging for `\(\sigma^2\)` gives: > **CI for `\(\sigma^2\)`:** `$$\hat{I} = \left[\frac{(n-1)S^2}{\chi^2_{1-\alpha/2}(n-1)},\;\; \frac{(n-1)S^2}{\chi^2_{\alpha/2}(n-1)}\right]$$` ] --- # Illustration: `\(\chi^2(n-1)\)` distribution and the CI for `\(\sigma^2\)` .pull-left-narrow[ - Right-skewed: longer upper tail - `\(\chi^2_{\alpha/2}\)` and `\(\chi^2_{1-\alpha/2}\)` are **not** equidistant from the centre - This is why the CI for `\(\sigma^2\)` is asymmetric — it stretches further upward than downward ] .pull-right-wide[  ] --- # .red[Raise your hand 2: CI for the variance] <div class="countdown" id="timer_2bb33bbe" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** Why is the CI for `\(\sigma^2\)` not symmetric around `\(S^2\)`? 
- **A)** Because `\(S^2\)` is a biased estimator of `\(\sigma^2\)` - **B)** Because the `\(\chi^2\)` distribution is right-skewed — the lower and upper critical values are not equidistant from the centre - **C)** Because `\(\alpha\)` is split unequally between the two tails ] -- .pull-left-wide[ **Q2.** The pivot `\((n-1)S^2/\sigma^2 \sim \chi^2(n-1)\)` is an **exact** result. What does it require? - **A)** That `\(X\)` is normally distributed in the population - **B)** Only that `\(n\)` is large — the CLT makes it hold for any distribution - **C)** That `\(\sigma^2\)` is known — otherwise the pivot is not well-defined ] --- # .red[Practice 2: CI for the variance] .pull-left-wide[ A normal population is sampled with `\(n = 20\)`, giving `\(S^2 = 16\)`. 1. Construct a 95% CI for `\(\sigma^2\)` 2. Is the interval symmetric around `\(S^2 = 16\)`? Why or why not? 3. What does it mean if `\(\sigma^2 = 9\)` is inside the interval? *Hint:* `\(\chi^2_{0.025}(19) \approx 8.91\)`; `\(\chi^2_{0.975}(19) \approx 32.85\)` ] --- class: inverse, middle, center # Confidence interval for the difference in means --- # Setup: two independent samples .pull-left-wide[ - We often want to compare two groups (e.g. 
wage gap between men and women) - Assume two independent simple random samples of sizes `\(n_1\)` and `\(n_2\)` ] -- .pull-left-wide[ - The natural estimator of `\(\mu_1 - \mu_2\)` is `\(\bar{X}_1 - \bar{X}_2\)`: `$$E(\bar{X}_1 - \bar{X}_2) = \mu_1 - \mu_2 \quad \text{(unbiased)}$$` `$$Var(\bar{X}_1 - \bar{X}_2) = \frac{\sigma_1^2}{n_1} + \frac{\sigma_2^2}{n_2}$$` ] -- .pull-left-wide[ - By the CLT, `\(\bar{X}_1 - \bar{X}_2\)` is approximately normal, so: `$$\frac{(\bar{X}_1 - \bar{X}_2) - (\mu_1 - \mu_2)}{\sqrt{\sigma_1^2/n_1 + \sigma_2^2/n_2}} \overset{a}{\sim} \mathcal{N}(0,1)$$` ] --- # CI for `\(\mu_1 - \mu_2\)`: `\(\sigma_1^2, \sigma_2^2\)` known or unknown .pull-left-wide[ .small123[ > **Approximate CI for `\(\mu_1 - \mu_2\)`, `\(\sigma_j^2\)` known:** `$$\hat{I} = \left[(\bar{X}_1 - \bar{X}_2) \pm z_{1-\alpha/2} \cdot \sqrt{\frac{\sigma_1^2}{n_1} + \frac{\sigma_2^2}{n_2}}\right]$$` ] ] -- .pull-left-wide[ .small123[ > **Approximate CI for `\(\mu_1 - \mu_2\)`, `\(\sigma_j^2\)` unknown (replace with `\(S_j^2\)`):** `$$\hat{I} = \left[(\bar{X}_1 - \bar{X}_2) \pm z_{1-\alpha/2} \cdot \sqrt{\frac{S_1^2}{n_1} + \frac{S_2^2}{n_2}}\right]$$` *or* `$$\hat{I} = \left[(\bar{X}_1 - \bar{X}_2) \pm t_{1-\alpha/2}(n_1+n_2-2) \cdot \sqrt{\frac{S_1^2}{n_1} + \frac{S_2^2}{n_2}}\right]$$` ] ] --- # Pooled variance estimator .pull-left-wide[ - If we have good reason to believe `\(\sigma_1^2 = \sigma_2^2 = \sigma^2\)`, we can combine information from both samples ] -- .pull-left-wide[ > The **pooled variance estimator** is: `$$S_p^2 = \frac{(n_1 - 1)S_1^2 + (n_2 - 1)S_2^2}{n_1 + n_2 - 2}$$` ] -- .pull-left-wide[ > **Exact CI for `\(\mu_1 - \mu_2\)`, equal unknown variances:** `$$\hat{I} = \left[(\bar{X}_1 - \bar{X}_2) \pm t_{1-\alpha/2}(n_1+n_2-2) \cdot \sqrt{S_p^2 \left(\frac{1}{n_1} + \frac{1}{n_2}\right)}\right]$$` - **Use `\(t\)`, not `\(z\)`, when `\(\sigma^2\)` is unknown** — `\(t(n_1+n_2-2)\)` is exact for normal populations; `\(z\)` is only asymptotically valid ] --- # 
.red[Raise your hand 3: CI for the difference in means] <div class="countdown" id="timer_4f773b65" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** A 95% CI for `\(\mu_1 - \mu_2\)` is `\([-2,\; 8]\)`. What can we conclude? - **A)** `\(\mu_1 > \mu_2\)` at the 95% confidence level, since the interval is mostly positive - **B)** The data are consistent with both `\(\mu_1 > \mu_2\)` and `\(\mu_1 \leq \mu_2\)` — zero is inside the interval - **C)** `\(\mu_2 > \mu_1\)` at the 95% confidence level, since the interval contains negative values ] -- .pull-left-wide[ **Q2.** Why does the pooled variance estimator `\(S_p^2\)` give a better estimate than using `\(S_1^2\)` and `\(S_2^2\)` separately? - **A)** Because it always produces a smaller variance estimate, narrowing the CI - **B)** Because it avoids the need to assume normality in either group - **C)** Because when `\(\sigma_1^2 = \sigma_2^2\)`, pooling combines information from both samples, reducing estimation uncertainty ] --- # .red[Practice 3: CI for the difference in means] .pull-left-wide[ Two independent samples of wages (€/hour): - Group 1 (men): `\(n_1 = 36\)`, `\(\bar{X}_1 = 28\)`, `\(S_1^2 = 25\)` - Group 2 (women): `\(n_2 = 49\)`, `\(\bar{X}_2 = 25\)`, `\(S_2^2 = 16\)` 1. Construct a 95% CI for `\(\mu_1 - \mu_2\)` (use `\(z_{0.975} = 1.96\)`, variances unknown) 2. Does the CI suggest a statistically meaningful difference between the groups? 3. Now assume equal variances. Calculate `\(S_p^2\)` and construct the pooled CI. Does the conclusion change? 
] --- class: inverse, middle, center # Confidence interval for the ratio of two variances --- # The `\(F\)`-distribution .pull-left[ > If `\(Y_1 \sim \chi^2(d_1)\)` and `\(Y_2 \sim \chi^2(d_2)\)` are independent: `$$F = \frac{Y_1/d_1}{Y_2/d_2} \sim F(d_1, d_2)$$` - `\(E(F) = \dfrac{d_2}{d_2-2}\)` (for `\(d_2 > 2\)`) - Right-skewed, positive values only - We will use it for the CI for `\(\sigma_2^2/\sigma_1^2\)` ] .pull-right[  ] --- # When do we compare variances? .pull-left-wide[ - Sometimes we want to know whether variability differs between two groups - We construct a CI for the **ratio** `\(\sigma_2^2 / \sigma_1^2\)` - If this ratio is 1, the variances are equal ] -- .pull-left-wide[ - Assume `\(X_j \sim \mathcal{N}(\mu_j, \sigma_j^2)\)` in each group (`\(j = 1, 2\)`), independent samples - It can be shown that: `$$\frac{S_1^2 / \sigma_1^2}{S_2^2 / \sigma_2^2} = \frac{S_1^2 \cdot \sigma_2^2}{S_2^2 \cdot \sigma_1^2} \sim F(n_1 - 1,\; n_2 - 1)$$` ] --- # Deriving the CI for `\(\sigma_2^2 / \sigma_1^2\)` .pull-left-wide[ - Let `\(F_{\alpha/2}\)` and `\(F_{1-\alpha/2}\)` be the critical values from `\(F(n_1-1, n_2-1)\)`: `$$P\!\left(F_{\alpha/2} \leq \frac{S_1^2 \cdot \sigma_2^2}{S_2^2 \cdot \sigma_1^2} \leq F_{1-\alpha/2}\right) = 1 - \alpha$$` ] -- .pull-left-wide[ - Rearranging to isolate `\(\sigma_2^2/\sigma_1^2\)`: > **Exact CI for `\(\sigma_2^2/\sigma_1^2\)`:** `$$\hat{I} = \left[\frac{S_2^2}{S_1^2} \cdot F_{\alpha/2}(n_1-1, n_2-1),\;\; \frac{S_2^2}{S_1^2} \cdot F_{1-\alpha/2}(n_1-1, n_2-1)\right]$$` - Also **not symmetric** around `\(S_2^2/S_1^2\)` because the `\(F\)`-distribution is skewed ] --- # Illustration: `\(F(n_1-1,\, n_2-1)\)` distribution and the CI for `\(\sigma_2^2/\sigma_1^2\)` .pull-left-narrow[ - Right-skewed, positive-valued - `\(F_{\alpha/2}\)` and `\(F_{1-\alpha/2}\)` are **not** equidistant from 1 - Hence the CI for `\(\sigma_2^2/\sigma_1^2\)` is also asymmetric — same principle as the `\(\chi^2\)` case ] .pull-right-wide[  ] --- # 
.red[Raise your hand 4: CI for the ratio of variances] <div class="countdown" id="timer_dc057b46" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** A 95% CI for `\(\sigma_2^2/\sigma_1^2\)` is `\([0.8,\; 2.5]\)`. What can we conclude? - **A)** `\(\sigma_2 > \sigma_1\)`, since most of the interval is above 1 - **B)** The data are consistent with equal variances — 1 is inside the interval — as well as with `\(\sigma_2^2\)` being up to 2.5 times `\(\sigma_1^2\)` - **C)** `\(\sigma_2^2 > \sigma_1^2\)` at the 95% confidence level ] -- .pull-left-wide[ **Q2.** Why does the `\(F\)`-distribution appear when comparing two sample variances? - **A)** Because the ratio of two normal random variables follows an `\(F\)`-distribution - **B)** Because `\(F\)` is always used when comparing statistics from two independent groups - **C)** Because each `\((n_j-1)S_j^2/\sigma_j^2 \sim \chi^2(n_j-1)\)`, and the ratio of two independent chi-square variables (divided by their degrees of freedom) defines an `\(F\)` ] --- # .red[Practice 4: CI for the ratio of variances] .pull-left-wide[ Two independent normal samples: - Group 1: `\(n_1 = 11\)`, `\(S_1^2 = 8\)` - Group 2: `\(n_2 = 16\)`, `\(S_2^2 = 18\)` Construct a 95% CI for `\(\sigma_2^2/\sigma_1^2\)`. *Hint:* `\(F_{0.025}(10, 15) \approx 0.28\)`; `\(F_{0.975}(10, 15) \approx 3.06\)` Does the CI suggest the variances are significantly different? 
] --- class: inverse, middle, center # Determining the required sample size --- # Width of a CI and sample size .pull-left-wide[ - The variance of `\(\bar{X}\)` is `\(\sigma^2/n\)` — larger `\(n\)` gives a narrower CI - We can invert the CI formula to find the `\(n\)` needed to achieve a desired width ] -- .pull-left-wide[ - The width of the CI for `\(\mu\)` (known `\(\sigma^2\)`) is `\(2 z_{1-\alpha/2} \cdot \sigma/\sqrt{n}\)` - Suppose we want the half-width to be at most `\(K\)`: `$$z_{1-\alpha/2} \cdot \frac{\sigma}{\sqrt{n}} \leq K \quad \Longrightarrow \quad n \geq \frac{z_{1-\alpha/2}^2 \cdot \sigma^2}{K^2}$$` ] -- .pull-left-wide[ > **Required sample size:** `$$n^* = \frac{z_{1-\alpha/2}^2 \cdot \sigma^2}{K^2}$$` - Requires knowing `\(\sigma^2\)` — use a pilot study if unknown - If `\(n^*\)` is not an integer, round up to the next whole observation ] --- # Example: choosing `\(n\)` for a desired precision .pull-left-wide[ **Setting:** we want to estimate the mean height of students with a 95% CI and half-width at most 2 cm. A previous study suggests `\(\sigma \approx 10\)` cm. `$$n^* = \frac{1.96^2 \times 10^2}{2^2} = \frac{3.8416 \times 100}{4} = 96.04 \quad \Longrightarrow \quad n = 97 \text{ (rounded up)}$$` ] -- .pull-left-wide[ **What if we want twice the precision** — half-width `\(\leq 1\)` cm? 
`$$n^* = \frac{1.96^2 \times 10^2}{1^2} \approx 385$$` Halving the half-width requires **4× the sample size** — because width `\(\propto 1/\sqrt{n}\)`, so to halve it you must quadruple `\(n\)` ] --- # Sample size for Bernoulli `\(X\)` .pull-left-wide[ - If `\(X \sim Bernoulli(p)\)`, then `\(\sigma^2 = p(1-p)\)`, which depends on the unknown `\(p\)` ] -- .pull-left-wide[ - The product `\(p(1-p)\)` is maximised at `\(p = 0.5\)`, giving `\(\sigma^2 \leq 0.25\)` - Substituting this worst-case bound: `$$n^* = \frac{z_{1-\alpha/2}^2 \cdot 0.25}{K^2}$$` - This guarantees the desired width **regardless of the true** `\(p\)` — a conservative bound ] --- # .red[Raise your hand 5: Sample size] <div class="countdown" id="timer_80d815bf" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** To halve the width of a CI for `\(\mu\)`, how must `\(n\)` change? - **A)** Double `\(n\)` — width is proportional to `\(1/n\)` - **B)** Quadruple `\(n\)` — width is proportional to `\(1/\sqrt{n}\)`, so halving width requires multiplying `\(n\)` by 4 - **C)** Triple `\(n\)` — halving the width requires tripling the observations ] -- .pull-left-wide[ **Q2.** For `\(X \sim Bernoulli(p)\)`, using `\(p(1-p) \leq 0.25\)` in the sample size formula gives what guarantee? 
- **A)** The required `\(n\)` is sufficient regardless of the true `\(p\)` — it is a conservative worst-case bound - **B)** The CI width is exactly minimised when `\(p = 0.5\)` - **C)** The formula only applies when `\(p\)` is close to `\(0.5\)` ] --- # .red[Practice 5: Sample size] .pull-left-wide[ A researcher wants a 95% CI for the mean income in a population, with a half-width of at most €500. A pilot study suggests `\(\sigma \approx 2{,}500\)` (in €). 1. Calculate the required sample size `\(n^*\)` 2. How does `\(n^*\)` change if the required half-width is tightened to €250? 3. Suppose instead `\(X \sim Bernoulli(p)\)` (e.g. employed/not employed) and we want half-width `\(\leq 0.03\)`. What is the conservative `\(n^*\)`? *Hint:* `\(z_{0.975} = 1.96\)` ] --- # How (not) to interpret a confidence interval *This often causes confusion, so let's be clear about what a CI does and does not mean.* .pull-left[ ### It isn't: - The probability that the true parameter is in the computed interval + (the parameter is fixed, not random) - The probability that this particular interval contains the true parameter + (once computed, an interval either contains the parameter or it does not; the probability statement is about the procedure that generates intervals, which has a long-run success rate of `\(1-\alpha\)`) ] .pull-right[ ### It is: - A random interval that contains the true parameter with probability `\(1-\alpha\)` in repeated sampling - A range of plausible values for the parameter based on the observed data and the sampling distribution of the estimator ] --- class: inverse, middle, center # .orange[Advanced: Clopper-Pearson interval for a proportion] ### Not part of the curriculum — for the curious --- # .orange[Why the standard Bernoulli CI can fail] .pull-left-wide[ - The approximate CI for `\(p\)` relies on the normal approximation to the binomial - This works well when `\(n\)` is large and `\(p\)` is not too close to 0 or 1 - **But it breaks down when:** - `\(n\)` is small, so the normal approximation from the CLT is still poor - `\(p\)` is near 0 or 
1 — the binomial distribution is strongly skewed - The resulting interval can extend **outside** `\([0,1]\)`, which is logically impossible for a probability ] -- .pull-left-wide[ **Example:** `\(n = 20\)`, `\(k = 2\)` successes (so `\(\hat{p} = 0.10\)`) `$$\hat{p} \pm 1.96\sqrt{\frac{\hat{p}(1-\hat{p})}{n}} = 0.10 \pm 1.96\sqrt{\frac{0.10 \times 0.90}{20}} = 0.10 \pm 0.131 = [-0.031,\; 0.231]$$` The lower bound is **negative** — the normal approximation has failed ] --- # .orange[The Clopper-Pearson exact interval] .pull-left-wide[ - The **Clopper-Pearson interval** is constructed directly from the binomial distribution — no normal approximation - Given `\(k\)` successes out of `\(n\)` trials, the exact `\(1-\alpha\)` CI is `\([p_L,\; p_U]\)` where: `$$p_L = B_{\alpha/2}(k,\; n-k+1), \qquad p_U = B_{1-\alpha/2}(k+1,\; n-k)$$` - `\(B_q(a, b)\)` denotes the `\(q\)`-th quantile of the **`\(\text{Beta}(a,b)\)` distribution** - Special cases: if `\(k = 0\)` set `\(p_L = 0\)`; if `\(k = n\)` set `\(p_U = 1\)` ] -- .pull-left-wide[ - The interval is **conservative**: the true coverage is at least `\(1-\alpha\)` for every `\(p\)` and `\(n\)` - It always stays within `\([0, 1]\)` by construction - The cost: slightly wider intervals than the approximate CI when the approximation is valid ] --- # .orange[Example: Clopper-Pearson vs. 
normal approximation] .pull-left-wide[ **Setting:** `\(n = 20\)` households surveyed, `\(k = 2\)` report unemployment; `\(\hat{p} = 0.10\)` | | Lower bound | Upper bound | |---|---|---| | Normal approximation | `\(-0.031\)` | `\(0.231\)` | | Clopper-Pearson (exact) | `\(0.012\)` | `\(0.317\)` | *Note:* `\(p_L = B_{0.025}(2,\,19) \approx 0.012\)`; `\(p_U = B_{0.975}(3,\,18) \approx 0.317\)` ] -- .pull-left-wide[ - The exact interval is fully inside `\([0,1]\)` and provides a meaningful lower bound - The approximation fails badly here: `\(n\)` is small and `\(p\)` is near 0 - In practice: use the normal approximation when `\(n\hat{p} \geq 5\)` and `\(n(1-\hat{p}) \geq 5\)`; use Clopper-Pearson otherwise ] --- # Key takeaways .pull-left-wide[ **CI for `\(\mu\)`:** use `\(z\)` when `\(\sigma\)` known; use `\(t(n-1)\)` when `\(\sigma\)` unknown and `\(X\)` normal — `\(t\)` is exact, `\(z\)` is only asymptotic **CI for `\(\sigma^2\)`:** `\(\chi^2(n-1)\)` pivot — asymmetric interval; exact only when `\(X\)` is normal ] -- .pull-left-wide[ **CI for `\(\mu_1 - \mu_2\)`:** approximate with `\(z\)` and separate `\(S_j^2\)`; exact with `\(t(n_1+n_2-2)\)` and pooled `\(S_p^2\)` when `\(\sigma_1^2 = \sigma_2^2\)` and `\(X\)` normal **CI for `\(\sigma_2^2/\sigma_1^2\)`:** `\(F(n_1-1, n_2-1)\)` pivot — also asymmetric; exact only when `\(X\)` is normal ] -- .pull-left-wide[ **Sample size:** `\(n^* = z_{1-\alpha/2}^2 \sigma^2 / K^2\)`; width `\(\propto 1/\sqrt{n}\)` — halving the width requires 4× the observations; for Bernoulli use `\(\sigma^2 = 0.25\)` as a conservative bound **Rule of thumb:** `\(n \gtrsim 30\)` for `\(z\)` to be adequate; `\(n \gtrsim 100\)` if the population is skewed ] --- # Before next time .pull-left[ - Read the assigned reading - Next time: Hypothesis testing `\(\rightarrow\)` Chapter 14 ] .pull-right[  ]
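---

# .orange[Appendix: checking the worked examples numerically]

A minimal Python sketch, not part of the original slides, that recomputes three numbers from this lecture under the large-sample `z` formulas: the 95% interval for mean income, the required sample sizes in the height example, and the normal-approximation interval for `n = 20`, `k = 2`. The function names (`z_crit`, `mean_ci`, `required_n`, `proportion_ci`) are illustrative assumptions. Only the Python standard library is used, so quantiles of the `t`, chi-square, `F` and Beta distributions are out of scope here.

```python
import math
from statistics import NormalDist


def z_crit(conf=0.95):
    """Critical value z_{1-alpha/2} of the standard normal distribution."""
    alpha = 1 - conf
    return NormalDist().inv_cdf(1 - alpha / 2)


def mean_ci(xbar, s, n, conf=0.95):
    """Approximate CI for the mean: xbar +/- z * sqrt(s^2 / n)."""
    half_width = z_crit(conf) * s / math.sqrt(n)
    return xbar - half_width, xbar + half_width


def required_n(sigma, K, conf=0.95):
    """Smallest integer n with half-width z * sigma / sqrt(n) <= K."""
    return math.ceil((z_crit(conf) * sigma / K) ** 2)


def proportion_ci(k, n, conf=0.95):
    """Approximate (normal) CI for a Bernoulli p; may leave [0, 1]."""
    p_hat = k / n
    half_width = z_crit(conf) * math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - half_width, p_hat + half_width


# Income example: xbar = 35,200, S = 12,000, n = 100
print([round(b) for b in mean_ci(35_200, 12_000, 100)])

# Height example: sigma = 10 cm, half-width 2 cm, then 1 cm
print(required_n(10, 2), required_n(10, 1))

# Proportion example: k = 2 successes out of n = 20
print([round(b, 3) for b in proportion_ci(2, 20)])
```

The sample-size function rounds up with `math.ceil` because a fractional observation cannot be collected, and the proportion interval is deliberately left unclipped so that the negative lower bound from the Clopper-Pearson slides stays visible.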