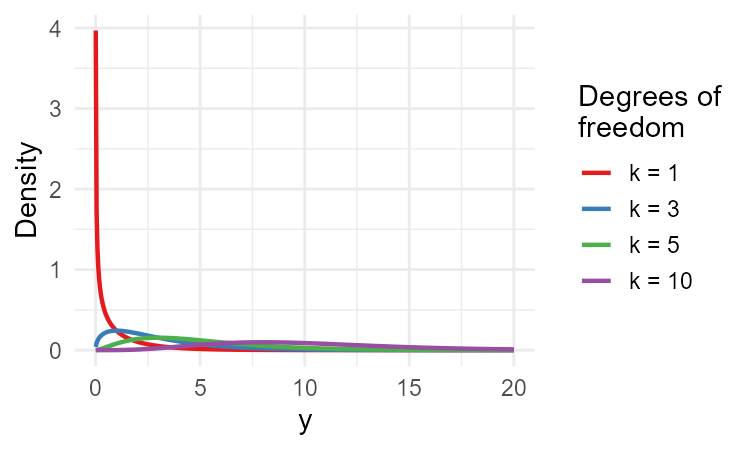

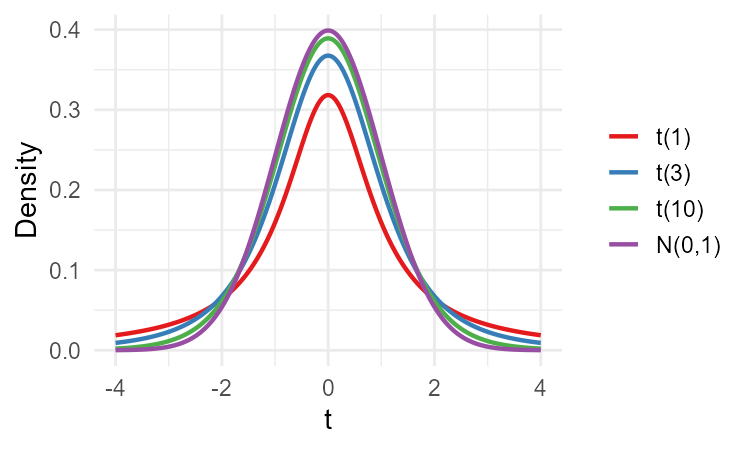

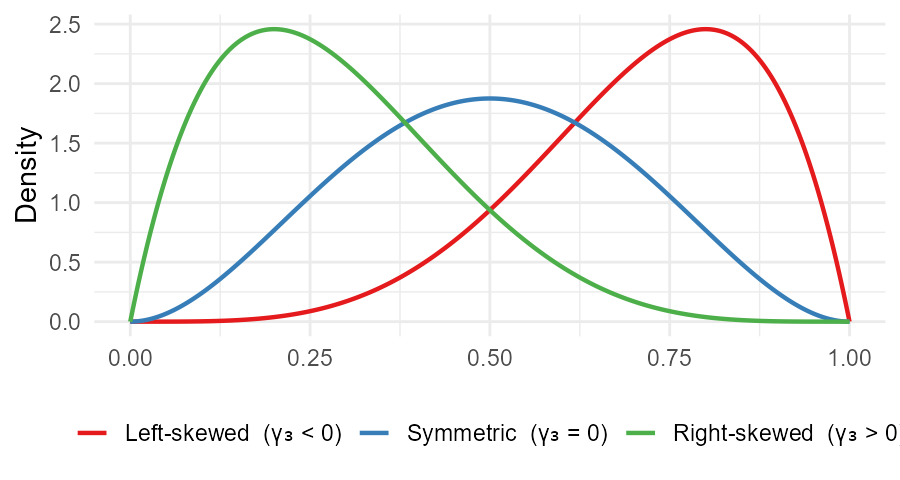

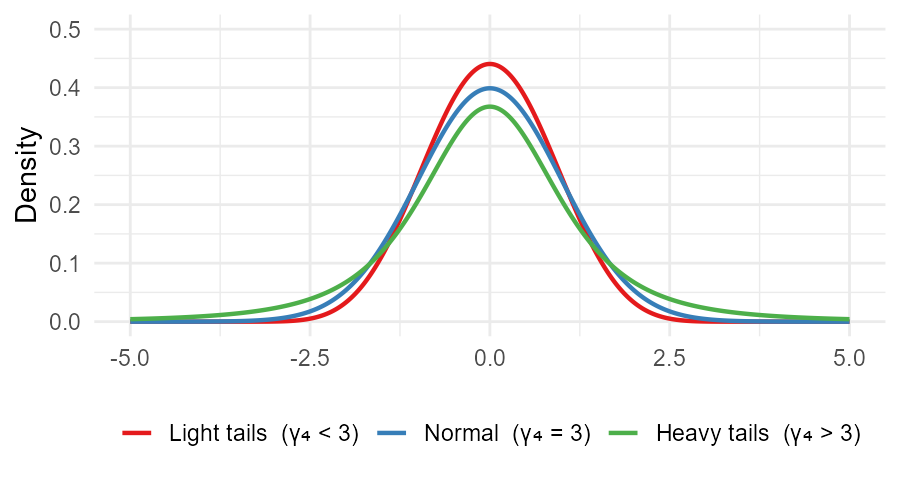

class: center, inverse, middle <style>.xe__progress-bar__container { top:0; opacity: 1; position:absolute; right:0; left: 0; } .xe__progress-bar { height: 0.25em; background-color: #808080; width: calc(var(--slide-current) / var(--slide-total) * 100%); } .remark-visible .xe__progress-bar { animation: xe__progress-bar__wipe 200ms forwards; animation-timing-function: cubic-bezier(.86,0,.07,1); } @keyframes xe__progress-bar__wipe { 0% { width: calc(var(--slide-previous) / var(--slide-total) * 100%); } 100% { width: calc(var(--slide-current) / var(--slide-total) * 100%); } }</style> <style type="text/css"> .pull-left { float: left; width: 44%; } .pull-right { float: right; width: 44%; } .pull-right ~ p { clear: both; } .pull-left-wide { float: left; width: 66%; } .pull-right-wide { float: right; width: 66%; } .pull-right-wide ~ p { clear: both; } .pull-left-narrow { float: left; width: 30%; } .pull-right-narrow { float: right; width: 30%; } .tiny123 { font-size: 0.40em; } .small123 { font-size: 0.80em; } .large123 { font-size: 2em; } .red { color: red } .orange { color: orange } .green { color: green } </style> # Statistics ## Estimators of other descriptive measures ### (Chapter 12) ### Christian Vedel,<br>Department of Economics<br>University of Southern Denmark ### Email: [christian-vs@sam.sdu.dk](mailto:christian-vs@sam.sdu.dk) ### Updated 2026-04-27 ??? 1 min // 12:16 --- class: middle # Today's lecture .pull-left-wide[ **Extending the analogy principle to estimate variance, shape, and distribution** - **Section 0:** A few more distributions - **Section 1:** An estimator of the variance - **Section 2:** Estimators of higher-order moments - **Section 3:** Estimators of the covariance and correlation coefficient - **Section 4:** Estimating a distribution - **Section 5:** Estimators of quantiles ] .pull-right-narrow[  ] ??? 2 min // 12:18 --- # The chi-square distribution .pull-left[ > If `\(Z_1, \ldots, Z_k \overset{iid}{\sim} N(0,1)\)`, then: `$$Y = \sum_{i=1}^k Z_i^2 \sim \chi^2(k)$$` - `\(E(Y) = k\)`, `\(\quad Var(Y) = 2k\)` - Right-skewed, positive values only - We will use it for the distribution of `\(S^2\)` ] .pull-right[  ] ??? 3 min // 12:21 --- # The Student's `\(t\)`-distribution .pull-left[ > If `\(Z \sim N(0,1)\)` and `\(Y \sim \chi^2(k)\)` independent: `$$T = \frac{Z}{\sqrt{Y/k}} \sim t(k)$$` - `\(E(T) = 0\)`, `\(\quad Var(T) = \dfrac{k}{k-2}\)` - Heavier tails than normal; `\(t(k) \to N(0,1)\)` as `\(k \to \infty\)` - .red[**Next lecture:** used for CI when `\(\sigma^2\)` is unknown] ] .pull-right[  ] ??? 3 min // 12:24 --- # Variance estimators .pull-left-wide[ **Analogy principle:** estimate `\(Var(X) = \sum_{i=1}^N (x_i - \mu)^2 \cdot f_X(x_i)\)` by replacing all unknown population quantities with sample counterparts ] -- .pull-left-wide[ > **When `\(\mu\)` is known** — replace `\(f_X(x_i)\)` with `\(1/n\)`: `$$\tilde{S}^2 = \frac{1}{n} \cdot \sum_{i=1}^n (X_i - \mu)^2 \quad \text{(unbiased for } \sigma^2\text{)}$$` ] -- .pull-left-wide[ > **When `\(\mu\)` is unknown** — also replace `\(\mu\)` with `\(\bar{X}\)`: `$$b^2 = \frac{1}{n} \cdot \sum_{i=1}^n \left(X_i - \bar{X}\right)^2$$` - **Biased:** `\(E(b^2) = \dfrac{n-1}{n}\sigma^2 < \sigma^2\)` — underestimates `\(\sigma^2\)` ] ??? 4 min // 12:28 --- # The sample variance .pull-left-wide[ **Why is `\(b^2\)` biased?** Decompose `\((X_i - \bar{X}) = (X_i - \mu) - (\bar{X} - \mu)\)`, square and sum: `$$\sum_{i=1}^n (X_i - \bar{X})^2 = \sum_{i=1}^n (X_i-\mu)^2 - n(\bar{X}-\mu)^2$$` Taking expectations (using `\(E(X_i-\mu)^2 = \sigma^2\)` and `\(E(\bar{X}-\mu)^2 = \sigma^2/n\)`): `$$E\!\left[\sum_{i=1}^n (X_i - \bar{X})^2\right] = n\sigma^2 - \sigma^2 = (n-1)\sigma^2, \quad \text{so } E(b^2) = \frac{n-1}{n}\sigma^2 < \sigma^2 \quad\blacksquare$$` ] -- .pull-left-wide[ > Correcting for the bias — divide by `\(n-1\)` instead of `\(n\)`: `$$S^2 = \frac{1}{n-1} \cdot \sum_{i=1}^n \left(X_i - \bar{X}\right)^2$$` - `\(S^2\)` is **unbiased** and **consistent** for `\(\sigma^2\)` ] ??? 10 min // 12:38 --- # An estimator of the standard deviation .pull-left-wide[ - Natural estimator of `\(\sigma\)`: `\(S = \sqrt{S^2}\)` - But `\(E[g(X)] \neq g[E(X)]\)` in general, so: `$$E(S) = E\!\left(\sqrt{S^2}\right) \neq \sqrt{E(S^2)} = \sqrt{\sigma^2} = \sigma$$` ] -- .pull-left-wide[ **Why is `\(S\)` biased?** Since `\(\sqrt{\cdot}\)` is **concave**, Jensen's inequality gives: `$$E(S) = E\!\left[\sqrt{S^2}\right] \leq \sqrt{E\!\left[S^2\right]} = \sigma \quad\blacksquare$$` - `\(S\)` strictly **underestimates** `\(\sigma\)` for finite `\(n\)` - As `\(n \to \infty\)`: `\(S^2 \xrightarrow{p} \sigma^2\)`, so `\(S \xrightarrow{p} \sigma\)` — **consistent** despite the bias ] ??? 3 min // 12:41 --- # Distribution of `\(S^2\)` and the chi-square .pull-left-wide[ In a normal population, the scaled quantity `\(Y = S^2 \cdot \dfrac{n-1}{\sigma^2} \sim \chi^2(n-1)\)` `$$F(S^2 \leq a) = P\!\left(\chi^2(n-1) \leq a \cdot \frac{n-1}{\sigma^2}\right)$$` - The `\(\chi^2\)` distribution is **not symmetric** and takes only positive values ] -- .pull-left-wide[ **Example:** Wages are normally distributed with `\(\sigma^2 = 100\)`. We draw `\(n = 11\)` and observe `\(S^2 = 130\)`. Scale to `\(\chi^2\)`: `\(\quad Y = 130 \cdot \dfrac{10}{100} = 13\)` `$$F(S^2 \leq 130) = P(\chi^2(10) \leq 13) \approx 0.77$$` About 77% of samples would produce a variance at most this large — not unusual. ] ??? 3 min // 12:44 --- # .red[Raise your hand 1: Sample variance] <div class="countdown" id="timer_54cdc6f3" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** Which of the following are correct reasons to divide by `\(n - 1\)` rather than `\(n\)`? (There may be more than one.) - **A)** It corrects for bias — `\(b^2\)` underestimates `\(\sigma^2\)` because `\(\bar{X}\)` is chosen to minimise the very deviations we are summing - **B)** The `\(n\)` deviations `\((X_i - \bar{X})\)` must sum to zero, so only `\(n-1\)` are free - **C)** It makes `\(S^2\)` consistent — `\(b^2\)` is not consistent ] -- .pull-left-wide[ **Q2.** `\(S^2\)` is unbiased for `\(\sigma^2\)`. Is `\(S = \sqrt{S^2}\)` unbiased for `\(\sigma\)`? - **A)** Yes — the square root of an unbiased estimator is also unbiased - **B)** Yes — because `\(S^2\)` is consistent, `\(S\)` inherits unbiasedness - **C)** No — `\(E(\sqrt{S^2}) \neq \sqrt{E(S^2)}\)` in general, so `\(S\)` is biased (though consistent) ] ??? 3 min // 12:47 --- # .red[Practice 1: Sample variance] .pull-left-wide[ A sample of `\(n = 5\)` observations: `\(\{2, 4, 4, 6, 9\}\)`. 1. Calculate `\(\bar{X}\)` 2. Calculate `\(S^2\)` (the unbiased sample variance) 3. Why would `\(b^2 = \frac{1}{n}\sum(X_i - \bar{X})^2\)` give a different answer? Calculate `\(b^2\)` and compare 4. For large `\(n\)`, does the difference between `\(S^2\)` and `\(b^2\)` matter? Why? ] ??? 3 min // 12:50 --- # Central moments and skewness .pull-left[ - The `\(k\)`-th **central moment** and its estimator: `$$m_k^* = E\!\left[(X-\mu)^k\right], \quad \hat{m}_k^* = \frac{1}{n}\sum_{i=1}^n(X_i-\bar{X})^k$$` > **Skewness** (standardised 3rd central moment): `$$\gamma_3 = \frac{m_3^*}{\sigma^3}, \qquad \hat{\gamma}_3 = \frac{\hat{m}_3^*}{S^3}$$` - `\(\gamma_3 > 0\)`: right-skewed (long right tail) - `\(\gamma_3 < 0\)`: left-skewed (long left tail) - `\(\gamma_3 = 0\)`: symmetric ] .pull-right[  ] ??? 4 min // 12:54 --- # Kurtosis .pull-left[ > **Kurtosis** is the standardised fourth central moment: `$$\gamma_4 = \frac{m_4^*}{\sigma^4}, \qquad \hat{\gamma}_4 = \frac{\hat{m}_4^*}{S^4}$$` - `\(\gamma_4 > 3\)`: heavier tails than normal (leptokurtic) - `\(\gamma_4 < 3\)`: lighter tails (platykurtic) - `\(\gamma_4 = 3\)`: normal distribution - Deviations from `\(\gamma_3 = 0\)` or `\(\gamma_4 = 3\)` signal non-normality ] .pull-right[  ] ??? 3 min // 12:57 --- # .red[Raise your hand 2: Skewness and kurtosis] <div class="countdown" id="timer_98fdc2cd" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** A sample gives `\(\hat{\gamma}_3 = 1.8\)`. What does this indicate? - **A)** The distribution has a longer right tail than left — it is right-skewed - **B)** The distribution is more peaked than the normal - **C)** The distribution has higher variance than a symmetric distribution would ] -- .pull-left-wide[ **Q2.** A sample gives `\(\hat{\gamma}_4 = 5\)`, compared to 3 for the normal. What does this suggest? - **A)** The distribution is more spread out (higher variance) than the normal - **B)** The distribution has heavier tails and a sharper peak than the normal — excess kurtosis - **C)** The distribution is right-skewed ] ??? 3 min // 13:00 — BREAK --- # .red[Practice 2: Skewness and kurtosis] .pull-left-wide[ A dataset of `\(n = 6\)` income values (in €k): `\(\{10, 12, 14, 14, 16, 60\}\)`. 1. Calculate `\(\bar{X}\)` and `\(S^2\)` 2. Calculate `\(\hat{m}_3^*\)` (the third central moment) 3. Calculate `\(\hat{\gamma}_3\)`. Is income right- or left-skewed? Does this make intuitive sense? ] ??? 4 min // 13:19 — SESSION 2 resumes 13:15 --- # Covariance and correlation .pull-left-wide[ > The **sample covariance** is: `$$\widehat{Cov}(X, Y) = \frac{1}{n} \cdot \sum_{i=1}^n \left(X_i - \bar{X}\right)\!\left(Y_i - \bar{Y}\right)$$` - Constructed by replacing `\(\mu_X\)`, `\(\mu_Y\)`, and `\(f(x_i, y_j)\)` with their sample counterparts ] -- .pull-left-wide[ > The **sample correlation coefficient** is: `$$\hat{\rho}(X, Y) = \frac{\widehat{Cov}(X, Y)}{\sqrt{S_X^2 \cdot S_Y^2}}$$` - For large `\(n\)`: `\(\hat{\rho}(X, Y) \overset{a}{\sim} \mathcal{N}\!\left(\rho,\, \dfrac{(1 - \rho^2)^2}{n - 2}\right)\)` ] ??? 4 min // 13:23 --- # .red[Raise your hand 3: Covariance and correlation] <div class="countdown" id="timer_7a565e14" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** `\(\widehat{Cov}(X, Y) = 0\)` in a sample. Can we conclude that `\(X\)` and `\(Y\)` are independent? - **A)** No — zero covariance rules out a linear relationship but not non-linear dependence - **B)** Yes — zero covariance is equivalent to independence - **C)** Only if both `\(X\)` and `\(Y\)` are normally distributed, since normality makes independence and zero covariance equivalent ] -- .pull-left-wide[ **Q2.** `\(\hat{\rho} = 0.9\)` between education and income. A colleague concludes "education causes higher income." What is the problem? - **A)** Nothing — high correlation always implies causation - **B)** Correlation measures linear association, not causation — a common cause (e.g. family background) could drive both - **C)** The formula for `\(\hat{\rho}\)` is only valid when both variables are normally distributed ] ??? 4 min // 13:27 --- # .red[Practice 3: Covariance and correlation] .pull-left-wide[ A researcher records hours of sleep the night before an exam and the exam score for 5 students: | Student | Sleep `\(X\)` (hrs) | Score `\(Y\)` | |:---:|:---:|:---:| | 1 | 4 | 45 | | 2 | 6 | 60 | | 3 | 7 | 70 | | 4 | 8 | 80 | | 5 | 9 | 85 | 1. Calculate `\(\bar{X}\)`, `\(\bar{Y}\)`, `\(S_X^2\)`, `\(S_Y^2\)` 2. Calculate `\(\widehat{Cov}(X, Y)\)` 3. Calculate `\(\hat{\rho}(X, Y)\)`. Interpret the result 4. Does this prove that more sleep *causes* better exam scores? ] ??? 4 min // 13:31 --- # The empirical distribution function .pull-left-wide[ - Recall that the CDF is `\(F_X(x) = P(X \leq x)\)`; by the analogy principle, `\(P(X \leq x)\)` is estimated by the fraction of sample observations `\(\leq x\)` > `$$\hat{F}_X(x) = \frac{\text{number of sample elements with } x_i \leq x}{n}$$` ] -- .pull-left-wide[ - **Unbiased and consistent:** `\(E\!\left[\hat{F}_X(x)\right] = F_X(x)\)` for all `\(x\)` - **Asymptotically normal:** `$$\hat{F}_X(x) \overset{a}{\sim} \mathcal{N}\!\left(F_X(x),\; \frac{F_X(x)\left[1 - F_X(x)\right]}{n}\right)$$` - Useful for: normality tests, comparing distributions, non-parametric CI for the full CDF ] ??? 4 min // 13:35 --- # .red[Raise your hand 4: Empirical distribution function] <div class="countdown" id="timer_c7d763cf" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** A sample of `\(n = 10\)` gives `\(\hat{F}_X(3) = 0.4\)`. What does this mean? - **A)** 40% of the sample observations are `\(\leq 3\)` - **B)** The probability that `\(X = 3\)` in the population is 0.4 - **C)** The 40th percentile of the distribution equals 3 ] -- .pull-left-wide[ **Q2.** Why is `\(\hat{F}_X(x)\)` approximately normally distributed for large `\(n\)`? - **A)** Because the CDF `\(F_X(x)\)` is a smooth, continuous function - **B)** Because `\(\hat{F}_X(x)\)` is a sample proportion — the mean of Bernoulli indicators `\(\mathbf{1}(X_i \leq x)\)` — and the CLT applies - **C)** Because all sample statistics are approximately normal for large `\(n\)` ] ??? 4 min // 13:39 --- # Estimated quantiles .pull-left-wide[ - Recall: the `\(p\)`-th quantile `\(q_p\)` is the value where `\(F_X\)` crosses `\(p\)` - We estimate quantiles from the empirical distribution function: `\(\hat{q}_p\)` is where `\(\hat{F}_X\)` crosses `\(p\)` ] -- .pull-left-wide[ - For example, the **sample median** `\(\hat{q}_{0.5}\)` is the value where `\(\hat{F}_X\)` crosses 0.5 - If this crossing happens over an interval, we use the midpoint as the sample median ] ??? 3 min // 13:42 --- # Order statistics and the sample median .pull-left-wide[ > The `\(k\)`-th **order statistic** `\(X_{(k)}\)` is the `\(k\)`-th smallest observation. Estimator of the `\(p\)`-th quantile: `$$\hat{q}_p = \begin{cases} X_{(np + 1)}, & \text{if } [np] \neq np \\ \dfrac{X_{(np)} + X_{(np + 1)}}{2}, & \text{if } [np] = np \end{cases}$$` where `\([np]\)` denotes the integer part of `\(np\)` ] -- .pull-left-wide[ **Sample median** (`\(p = 0.5\)`): `$$\hat{q}_{0.5} = \begin{cases} X_{\left(\frac{n+1}{2}\right)}, & \text{if } n \text{ is odd} \\ \dfrac{X_{\left(\frac{n}{2}\right)} + X_{\left(\frac{n}{2}+1\right)}}{2}, & \text{if } n \text{ is even} \end{cases}$$` - Robust to outliers — unlike the sample mean; useful when the distribution is skewed ] ??? 4 min // 13:46 --- # .red[Raise your hand 5: Quantiles] <div class="countdown" id="timer_882af3d6" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">00</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">20</span></code> </div> .pull-left-wide[ **Q1.** A sample of `\(n = 10\)` sorted observations. What is the sample median? - **A)** `\(X_{(5)}\)` — the 5th order statistic - **B)** `\(X_{(6)}\)` — the 6th order statistic - **C)** `\(\dfrac{X_{(5)} + X_{(6)}}{2}\)` — the average of the 5th and 6th, because `\(n\)` is even ] -- .pull-left-wide[ **Q2.** A sample gives `\(\hat{q}_{0.25} = 50\)`. What does this mean? - **A)** 25% of the sample observations fall at or below 50 - **B)** The mean of the bottom quarter of the distribution is 50 - **C)** The value 50 occurs with frequency 25% in the sample ] ??? 4 min // 13:50 --- # .red[Practice 5: Order statistics and quantiles] .pull-left-wide[ A sorted sample of `\(n = 9\)`: `\(\{3, 5, 7, 8, 10, 11, 14, 18, 25\}\)`. 1. Find the sample median `\(\hat{q}_{0.5}\)` 2. Find the first quartile `\(\hat{q}_{0.25}\)` 3. Find the third quartile `\(\hat{q}_{0.75}\)` 4. Calculate the interquartile range `\(\hat{q}_{0.75} - \hat{q}_{0.25}\)`. Why is this a useful summary of spread? ] ??? 4 min // 13:54 --- # Key takeaways .pull-left-wide[ **Variance:** `\(S^2 = \frac{1}{n-1}\sum(X_i - \bar{X})^2\)` — unbiased; `\(S\)` is biased but consistent for `\(\sigma\)` **Higher-order moments:** `\(\hat{m}_k^* = \frac{1}{n}\sum(X_i - \bar{X})^k\)` — skewness (`\(\gamma_3\)`) and kurtosis (`\(\gamma_4\)`) compare shape to the normal ] -- .pull-left-wide[ **Covariance and correlation:** `$$\widehat{Cov}(X,Y) = \frac{1}{n}\sum(X_i-\bar{X})(Y_i-\bar{Y}), \quad \hat{\rho} = \frac{\widehat{Cov}(X,Y)}{\sqrt{S_X^2 S_Y^2}}$$` ] -- .pull-left-wide[ **EDF and quantiles:** `\(\hat{F}_X(x) = \frac{\#\{x_i \leq x\}}{n}\)` — unbiased, consistent, asymptotically normal; quantiles estimated via order statistics ] ??? 4 min // 13:58 --- # Before next time .pull-left[ - Read the assigned reading - Next time: Confidence intervals `\(\rightarrow\)` Chapter 13 ] .pull-right[  ] ??? 2 min // 14:00