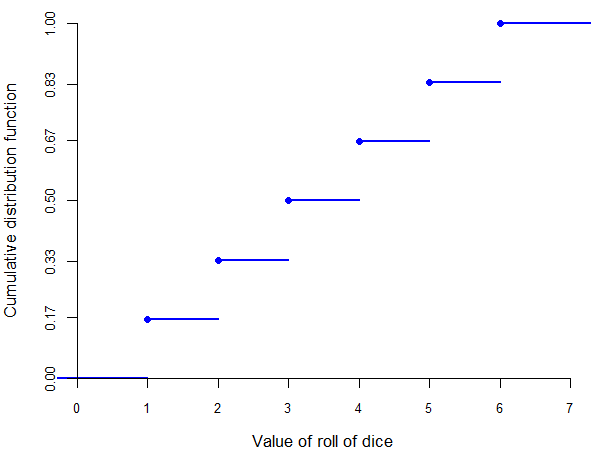

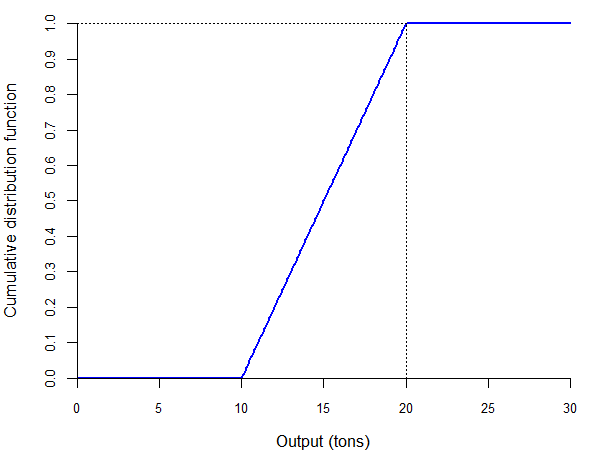

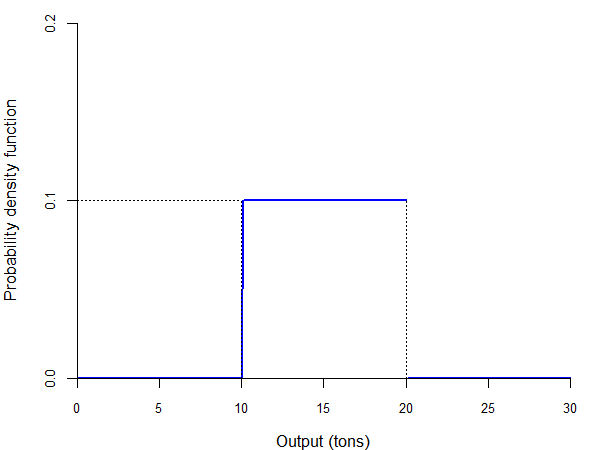

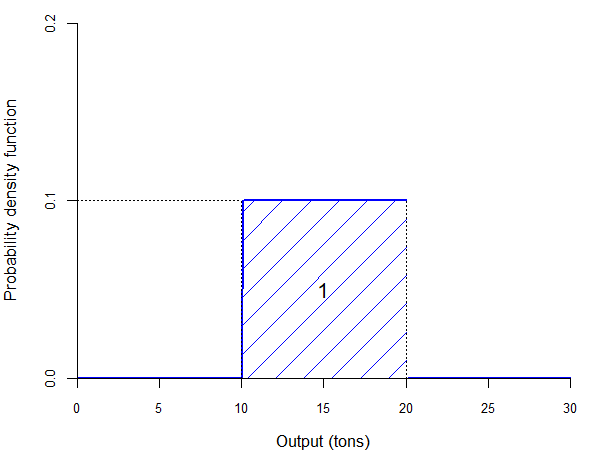

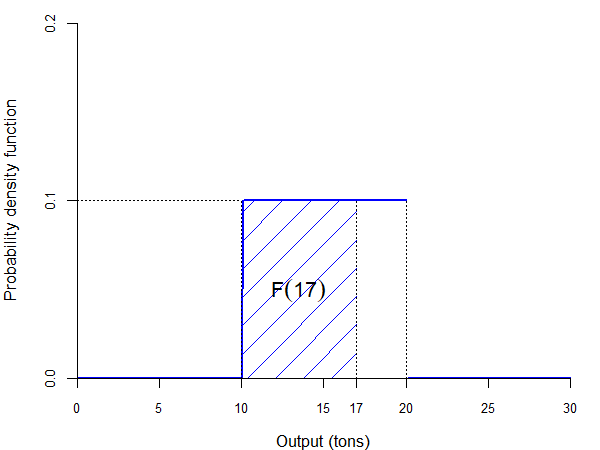

class: center, inverse, middle <style>.xe__progress-bar__container { top:0; opacity: 1; position:absolute; right:0; left: 0; } .xe__progress-bar { height: 0.25em; background-color: #808080; width: calc(var(--slide-current) / var(--slide-total) * 100%); } .remark-visible .xe__progress-bar { animation: xe__progress-bar__wipe 200ms forwards; animation-timing-function: cubic-bezier(.86,0,.07,1); } @keyframes xe__progress-bar__wipe { 0% { width: calc(var(--slide-previous) / var(--slide-total) * 100%); } 100% { width: calc(var(--slide-current) / var(--slide-total) * 100%); } }</style> <style type="text/css"> .pull-left { float: left; width: 44%; } .pull-right { float: right; width: 44%; } .pull-right ~ p { clear: both; } .pull-left-wide { float: left; width: 66%; } .pull-right-wide { float: right; width: 66%; } .pull-right-wide ~ p { clear: both; } .pull-left-narrow { float: left; width: 30%; } .pull-right-narrow { float: right; width: 30%; } .tiny123 { font-size: 0.40em; } .small123 { font-size: 0.80em; } .large123 { font-size: 2em; } .red { color: red } .orange { color: orange } .green { color: green } </style> # Statistics ## Random variables ### (Chapter 4) ### Christian Vedel,<br>Department of Economics<br>University of Southern Denmark ### Email: [christian-vs@sam.sdu.dk](mailto:christian-vs@sam.sdu.dk) ### Updated 2026-02-23 .footnote[ .left[ .small123[ *Please beware. I work on these slides until the last minute before the lecture and push most changes along the way. 
Until the actual lecture, this is just a draft* ] ] ] --- # This lecture .pull-left-wide[ - Random variables: definition and types - Discrete random variables: - probability and cumulative distribution functions - joint, marginal, and conditional probabilities - Bayes' theorem and independence - Continuous random variables: - cumulative distribution and density functions - relationships between two continuous random variables - Practice tasks throughout ] --- # From events to random variables .pull-left-wide[ - Last time: outcomes `\(\omega \in \Omega\)`, events `\(A \subseteq \Omega\)`, and probabilities `\(P(A)\)`. - In applications we usually care about a *numerical* quantity (wage, price, number of children, etc.). - A **random variable** assigns a number to each outcome: `\(X:\Omega \to \mathbb{R}\)`. - Then we ask questions like: - discrete: `\(P(X = x)\)` - continuous: `\(P(a < X \le b)\)` ] --- class: inverse, middle, center # Definition of a random variable --- # Random variable .pull-left-wide[ - Start with a probability model: outcomes `\(\omega \in \Omega\)` and probabilities `\(P(\cdot)\)`. - A random variable simply *labels each outcome with a number*. > A **random variable** is a function `\(X:\Omega\to\mathbb{R}\)`. 
- Examples: - coin toss (`\(\Omega=\{H,T\}\)`): `$$X(H)=0,\quad X(T)=1$$` - dice roll (`\(\Omega=\{1,2,3,4,5,6\}\)`): `$$X(k)=k \text{ for } k=1,\dots,6$$` ] --- # Random variable -- .pull-left-wide[ - As a general rule, we use letters at the end of the alphabet: - uppercase to denote random variables (e.g., `\(X\)`, `\(Y\)`) - lowercase for the specific values a random variable can take (e.g., `\(x\)`, `\(y\)`) ] -- .pull-left-wide[ - Note that we can choose the values assigned to the various outcomes, but sometimes certain values may be more suited to the problem at hand ] -- .pull-left-wide[ - For example, suppose that the experiment is "choose a person at random, ask her age" - outcomes: values of "age of the person" (say, 43 years) - random variable: assign a number to the outcome "age of person = 43 years" - "natural" value: 43 ] --- # Types of random variables -- .pull-left-wide[ - There are two types of random variables based on the number of values they can take: ] -- .pull-left-wide[ - **discrete random variables** can take a *countable* (finite or infinite) number of values - examples: toss of a coin, roll of a dice, pick an integer - if an experiment has a finite number of outcomes, then the corresponding random variable is *usually* discrete ] -- .pull-left-wide[ - **continuous random variables** take an *uncountable* number of values - examples: exact quantity of rainfall over a year, exact profit corresponding to a share (profit divided by number of shares) - note that the same experiment can produce a discrete or a continuous random variable, depending on how "accurately" the outcome is measured ] --- # .red[Practice: Random variables and types] .pull-left-wide[ 1. Define a random variable `\(X\)` for the experiment "draw one card from a standard deck". 2. Is your `\(X\)` discrete or continuous? Explain in one sentence. 3. Give one alternative coding of the same experiment and explain why it is still a valid random variable. 
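
As a hedged illustration of the definition `\(X:\Omega\to\mathbb{R}\)`, the sketch below writes a random variable as a literal function on outcomes. It uses the coin-toss coding from the earlier slide (not the card deck) and assumes a fair coin. The names `P`, `X_alt`, and `prob_equals` are illustrative, and the alternative coding is hypothetical.

```python
from fractions import Fraction

# Sample space and probabilities of the individual outcomes (fair coin assumed).
omega = ["H", "T"]
P = {"H": Fraction(1, 2), "T": Fraction(1, 2)}

def X(w):
    """Coding from the slides: X(H) = 0, X(T) = 1."""
    return {"H": 0, "T": 1}[w]

def X_alt(w):
    """Hypothetical alternative coding of the same experiment."""
    return {"H": 10, "T": -10}[w]

def prob_equals(rv, x):
    """P(rv = x) = P({w in omega : rv(w) = x})."""
    return sum((P[w] for w in omega if rv(w) == x), Fraction(0))

print(prob_equals(X, 0))        # probability attached to the value 0
print(prob_equals(X_alt, -10))  # same outcome "T", different label
```

Both codings are valid random variables: each assigns exactly one number to every outcome, and the probabilities still come from `\(P\)` on the underlying outcomes.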
] --- class: middle # Why this can feel confusing .pull-left-wide[ - We introduce *two* functions that both output numbers, so it’s easy to mix them up: - **Probability measure:** `\(P:\mathcal{F}\to[0,1]\)` input = an **event** `\(A\subseteq\Omega\)` (a set of outcomes) output = a **probability** - **Random variable:** `\(X:\Omega\to\mathbb{R}\)` input = an **outcome** `\(\omega\in\Omega\)` output = a **numerical value** (wage, indicator, count, etc.) > *Probability measures* are the functions that assign probabilities to *events*, while *random variables* are just a way of describing those events, **to which we then assign probabilities using the probability measure.** ] --- # Overview of concepts thus far ### Concept hierarchy (Lectures 02–03) .pull-left[ **World / research question** `\(\rightarrow\)` **uncertainty** **Population / super-population** - we observe a **sample** via a **selection mechanism** - (population + selection + sample) = **experiment** **Probability model** `\((\Omega,\mathcal{F},P)\)` - outcomes `\(\omega \in \Omega\)` - events `\(A \subseteq \Omega\)`, with `\(A \in \mathcal{F}\)` - probabilities `\(P(A)\)` ] .pull-right[ **Derived tools** - conditional probability `\(P(A\mid B)\)` - independence `\(A \perp B\)` - conditional independence `\(A \perp B \mid C\)` .red[ **Random variables**: turn outcomes into numbers - `\(X:\Omega\to\mathbb{R}\)` - `\(P(X\le x)=P(\{\omega: X(\omega)\le x\})\)` - discrete: `\(P(X=x)\)`, continuous: `\(P(a<X\le b)\)` ] ] --- class: inverse, middle, center # Discrete random variables --- # Probability function -- .pull-left-wide[ - One advantage of using random variables is that now we work with numbers instead of abstract events ] -- .pull-left-wide[ - So, we can use mathematical concepts built for numbers, such as functions ] -- .pull-left-wide[ > The **probability function** `\(f(\cdot)\)` of a discrete random variable `\(X\)` is defined as: > `$$f(x) = P(X = x)$$` ] -- .pull-left-wide[ - When working 
with several random variables, say `\(X\)` and `\(Y\)`, it is sometimes useful to distinguish their probability functions by indexing them: `\(f_X(x)\)` and `\(f_Y(y)\)` ] --- # Probability function -- .pull-left-wide[ - Examples: - in the experiment of tossing a coin, we would have: `$$f(0) = P(X = 0) = P(\text{"heads"}) = \frac{1}{2}$$` `$$f(1) = P(X = 1) = P(\text{"tails"}) = \frac{1}{2}$$` ] -- .pull-left-wide[ > **Properties of the probability function:** > > (i) `\(0 \leq f(x) \leq 1\)` for all `\(x\)` > > (ii) `\(\displaystyle\sum_{i=1}^N f(x_i) = f(x_1) + f(x_2) + \ldots + f(x_N) = 1\)`, where `\(x_1, x_2, \ldots, x_N\)` are the possible values of `\(X\)` ] --- # Cumulative distribution function -- .pull-left-wide[ > The **cumulative distribution function** `\(F(\cdot)\)` of a discrete random variable `\(X\)` is defined as: > `$$F(x) = P(X \leq x) = \sum_{x_i \leq x} f(x_i)$$` ] -- .pull-left-wide[ - Note that, by construction, the cumulative distribution function is *non-decreasing*: it is a step function that jumps by `\(f(x_i)\)` at each possible value `\(x_i\)` and is flat in between ] -- .pull-left-wide[ - Example: - in the experiment of rolling a dice, we would have: `$$f(4) = P(X = 4) = P(\text{"dice shows 4"}) = \frac{1}{6}$$` `$$F(4) = P(X \leq 4) = P(\text{"dice shows at most 4"})$$` `$$= P(X = 1) + P(X = 2) + P(X = 3) + P(X = 4) = \frac{4}{6}$$` ] --- # Cumulative distribution function .center[  ] --- # Cumulative distribution function -- .pull-left-wide[ - The cumulative distribution function also allows us to calculate the probability that `\(X\)` will take on a value in a given range: ] -- .pull-left-wide[ `$$P(a < X \leq b) = F(b) - F(a)$$` ] -- .pull-left-wide[ and: `$$P(a \leq X \leq b) = F(b) - F(a) + f(a)$$` ] --- # .red[Practice: Discrete probability and CDF] .pull-left-wide[ Suppose `\(X\)` is the outcome of a fair six-sided die. 1. Compute `\(f(3)\)`. 2. Compute `\(F(4)\)`. 3. Compute `\(P(2 < X \leq 5)\)` using the CDF. 4. Compute `\(P(2 \leq X \leq 5)\)` and explain the difference from question 3. 
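
One way to check answers to questions of this kind is to code the two range identities, `\(P(a < X \leq b) = F(b) - F(a)\)` and `\(P(a \leq X \leq b) = F(b) - F(a) + f(a)\)`, directly. The sketch below is only an illustration: the distribution (number of heads in two fair coin tosses) is an assumption that does not appear in the slides, and the helper names are hypothetical. Swapping in the die's probability function is left to you.

```python
from fractions import Fraction

# Hypothetical probability function: number of heads in two fair coin tosses.
pmf = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}

def f(x):
    """Probability function f(x) = P(X = x); zero outside the possible values."""
    return pmf.get(x, Fraction(0))

def F(x):
    """Cumulative distribution function F(x) = sum of f(x_i) over x_i <= x."""
    return sum((p for v, p in pmf.items() if v <= x), Fraction(0))

def prob_half_open(a, b):
    """P(a < X <= b) = F(b) - F(a)."""
    return F(b) - F(a)

def prob_closed(a, b):
    """P(a <= X <= b) = F(b) - F(a) + f(a)."""
    return F(b) - F(a) + f(a)

print(prob_half_open(0, 2), prob_closed(0, 2))
```

The two results differ by exactly `\(f(a)\)`, the probability mass sitting on the left endpoint.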
] --- # Probability functions and relative frequencies -- .pull-left-wide[ - Suppose the frequency of a particular outcome `\(z\)` in the population is given by the function `\(g(z)\)` (e.g., in an urn including 3 red balls and 5 blue balls, red balls have a relative frequency of "3 in 8" and blue balls of "5 in 8") ] -- .pull-left-wide[ - Let `\(Z\)` be a random variable whose values indicate the outcome in this population (i.e., the color of the ball) ] -- .pull-left-wide[ - If all elements in the population have an equal chance of being selected, then the probability function of `\(Z\)` is: `$$f(z) = g(z)$$` ] -- .pull-left-wide[ - In our example, if `\(Z = 1\)` indicates "red ball" and `\(Z = 2\)` indicates "blue ball," then: `$$f(1) = \frac{3}{8} \qquad f(2) = \frac{5}{8}$$` ] --- class: inverse, middle, center # Relationships between discrete random variables --- # Relationships between random variables -- .pull-left-wide[ - Sometimes, we are interested in studying the relationship between two types of events ] -- .pull-left-wide[ - For example, suppose that a bank wants to assess the risk of bankruptcy of a company asking for a loan ] -- .pull-left-wide[ - The bank knows that this will depend on the state of the economy: booming or in recession ] -- .pull-left-wide[ - Hence, the bank would like to know how risky the loan is as a function of: - how likely the company is to go bankrupt - how likely the economy is to be in recession ] --- # Joint probability -- .pull-left-wide[ > The **joint probability function** `\(f(\cdot, \cdot)\)` for two discrete random variables `\(X\)` and `\(Y\)` is defined as: > `$$f(x, y) = P(X = x \text{ and } Y = y)$$` ] -- .pull-left-wide[ - Example: | | `\(X = 0\)` (bankrupt) | `\(X = 1\)` (not bankrupt) | |---|:---:|:---:| | `\(Y = 0\)` (recession) | 0.2 | 0.2 | | `\(Y = 1\)` (boom) | 0.1 | 0.5 | ] --- # Joint probability -- .pull-left-wide[ > **Properties of the joint probability function:** > > 1. 
`\(0 \leq f(x, y) \leq 1\)` for all `\(x, y\)` > > 2. `\(\displaystyle \sum_{i=1}^{N_x} \sum_{j=1}^{N_y} f(x_i, y_j) = f(x_1, y_1) + f(x_1, y_2) + \ldots + f(x_{N_x}, y_{N_y}) = 1\)` > > where `\(x_1, x_2, \ldots, x_{N_x}\)` are all the possible values of `\(X\)`, and `\(y_1, y_2, \ldots, y_{N_y}\)` are all the possible values of `\(Y\)` ] -- .pull-left-wide[ - In our example, what we need (for the second property) is: `$$0.2 + 0.2 + 0.1 + 0.5 = 1$$` ] --- # Marginal probability -- .pull-left-wide[ > The **marginal probability function** `\(f_X(\cdot)\)` of a discrete random variable `\(X\)` is defined as: > `$$f_X(x) = \sum_{j=1}^{N_y} f(x, y_j) = f(x, y_1) + f(x, y_2) + \ldots + f(x, y_{N_y})$$` ] --- # Marginal probability -- .pull-left-wide[ - In other words, the marginal probability function of `\(X\)` is the column sum of probabilities (and the marginal probability function of `\(Y\)` is the row sum): | | `\(X = 0\)` (bankrupt) | `\(X = 1\)` (not bankrupt) | `\(f_Y(\cdot)\)` | |---|:---:|:---:|:---:| | `\(Y = 0\)` (recession) | 0.2 | 0.2 | 0.4 | | `\(Y = 1\)` (boom) | 0.1 | 0.5 | 0.6 | | `\(f_X(\cdot)\)` | 0.3 | 0.7 | | ] --- # Conditional probability -- .pull-left-wide[ - Recall the definition of the conditional probability: `$$P(A | B) = \frac{P(A \cap B)}{P(B)}$$` ] -- .pull-left-wide[ - Now suppose that the event `\(A\)` is represented by `\(X = x\)` and the event `\(B\)` by `\(Y = y\)` ] -- .pull-left-wide[ - We can then write the conditional probability as: `$$P(X = x | Y = y) = \frac{P(X = x \text{ and } Y = y)}{P(Y = y)}$$` ] -- .pull-left-wide[ - But note that, by definition: - the numerator is the joint probability function `\(f(x, y)\)` - the denominator is the marginal probability function `\(f_Y(y)\)` ] --- # Conditional probability -- .pull-left-wide[ > The **conditional probability function** `\(f_{X|Y}(\cdot, \cdot)\)` of a discrete random variable `\(X\)` given that `\(Y = y\)` is defined as: > `$$f_{X|Y}(x | y) = \frac{f(x, y)}{f_Y(y)}$$` > 
if `\(f_Y(y) > 0\)` ] -- .pull-left-wide[ - Example: - what is the probability of bankruptcy ( `\(X = 0\)` ) given that we know we are in a boom ( `\(Y = 1\)` )? `$$f_{X|Y}(0 | 1) = \frac{f(0, 1)}{f_Y(1)} = \frac{0.1}{0.6} \approx 0.167 = 16.7\%$$` ] --- # Bayes' theorem -- .pull-left-wide[ - In practice, sometimes we know the conditional probability of `\(X\)` given `\(Y\)`, but we are interested in the conditional probability of `\(Y\)` given `\(X\)` ] -- .pull-left-wide[ - For example, you may know from a car-dealer friend the probability that a "lemon" (bad car) has a low price, but what you want to know is the probability that a cheap car is a lemon ] -- .pull-left-wide[ - We can use the definition of the conditional probability to write: `$$f_{Y|X}(y | x) = \frac{f(x, y)}{f_X(x)}$$` ] -- .pull-left-wide[ - Rearranging gives `\(f(x, y) = f_{Y|X}(y | x) \cdot f_X(x)\)`; substituting this into `\(f_{X|Y}(x | y) = f(x, y) / f_Y(y)\)` proves the following theorem ] --- # Bayes' theorem -- .pull-left-wide[ > **Bayes' theorem:** > > 1. `\(f_{X|Y}(x | y) = f_{Y|X}(y | x) \cdot \displaystyle\frac{f_X(x)}{f_Y(y)}\)` > > 2. 
`\(f_{X|Y}(x | y) = f_{Y|X}(y | x) \cdot \displaystyle\frac{f_X(x)}{\displaystyle\sum_{i=1}^{N_x} \left\{ f_{Y|X}(y | x_i) \cdot f_X(x_i)\right\}}\)` ] --- # Bayes' theorem: Example -- .pull-left-wide[ - Let `\(X = 1\)` if the car is a lemon ( `\(X = 0\)` otherwise) and `\(Y = 1\)` if the price is low ( `\(Y = 0\)` otherwise) ] -- .pull-left-wide[ - A car dealer friend tells you: 75% chance of a low price if the car is a lemon, 20% if not: `$$f_{Y|X}(1 | 1) = 0.75, \quad f_{Y|X}(0 | 1) = 0.25$$` `$$f_{Y|X}(1 | 0) = 0.20, \quad f_{Y|X}(0 | 0) = 0.80$$` ] -- .pull-left-wide[ - Technical reports say 25% of cars on the market are lemons: `$$f_X(1) = 0.25 \quad f_X(0) = 0.75$$` ] --- # Bayes' theorem: Example -- .pull-left-wide[ - From here you only need to apply Bayes' theorem to find the conditional probability of a lemon given that the price is low: `$$f_{X|Y}(1 | 1) = f_{Y|X}(1 | 1) \cdot \frac{f_X(1)}{\displaystyle\sum_{i=1}^{N_x} \left\{ f_{Y|X}(1 | x_i) \cdot f_X(x_i)\right\}}$$` ] -- .pull-left-wide[ `$$= f_{Y|X}(1 | 1) \cdot \frac{f_X(1)}{f_{Y|X}(1 | 0) \cdot f_X(0) + f_{Y|X}(1 | 1) \cdot f_X(1)}$$` ] -- .pull-left-wide[ `$$= 0.75 \cdot \frac{0.25}{0.20 \cdot 0.75 + 0.75 \cdot 0.25} = 0.556$$` ] -- .pull-left-wide[ - Therefore, there is a 55.6% chance that a car is a lemon if it has a low price ] --- # Bayes' theorem: Name classification -- .pull-left-wide[ - A database records how often each name appears per gender (frequency per 10,000): | Name | Male | Female | |------|:----:|:------:| | Carl | 1,023 | 3 | | Carla | 5 | 2,148 | | Chris | 850 | 820 | ] -- .pull-left-wide[ - These frequencies tell us `\(P(\text{Name} \mid \text{Gender})\)` — how common a name is *within* a gender ] -- .pull-left-wide[ - But what if we only observe the name and want to infer the gender? Can we turn this around to get `\(P(\text{Gender} \mid \text{Name})\)`? 
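
The "turning around" can be sketched in a few lines. The function below applies the second form of Bayes' theorem (denominator from the law of total probability). It is first checked against the lemon example, then applied to "Carl". The 50/50 prior on gender is an assumption made here for illustration, and the names `posterior`, `prior`, and `likelihood` are hypothetical.

```python
def posterior(prior, likelihood):
    """Bayes' theorem for a discrete X.

    prior[x]      = f_X(x)
    likelihood[x] = f_{Y|X}(y | x) for the observed y
    returns       f_{X|Y}(x | y) for every x
    """
    joint = {x: likelihood[x] * prior[x] for x in prior}  # f(x, y)
    total = sum(joint.values())                           # f_Y(y), law of total probability
    return {x: joint[x] / total for x in joint}

# Check against the lemon example: 25% lemons, low price with prob. 0.75 / 0.20.
post_lemon = posterior(
    prior={"lemon": 0.25, "not lemon": 0.75},
    likelihood={"lemon": 0.75, "not lemon": 0.20},
)
print(round(post_lemon["lemon"], 3))

# Name table: frequencies per 10,000 read as P(Name | Gender).
# The equal prior below is an assumption, not something the table tells us.
post_carl = posterior(
    prior={"male": 0.5, "female": 0.5},
    likelihood={"male": 1023 / 10000, "female": 3 / 10000},
)
print(round(post_carl["male"], 4))
```

With an equal prior the posterior depends only on the ratio of the two frequencies, which is why a name that is common in one gender and rare in the other is so informative.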
] --- # .red[Practice: Bayes' theorem — Name classification] .pull-left-wide[ A historical census lists a person named "Carla" but no gender. Assume `\(P(\text{Female}) = 0.5\)`. 1. Extract `\(P(\text{"Carla"} \mid \text{Female})\)` and `\(P(\text{"Carla"} \mid \text{Male})\)` from the frequency table. 2. Compute `\(P(\text{"Carla"})\)` using the law of total probability. 3. Apply Bayes' theorem to find `\(P(\text{Female} \mid \text{"Carla"})\)`. 4. Would the result be as clear-cut for "Chris"? Why? .small123[*This is the idea behind the **Naive Bayes classifier** — a simple but powerful method also used for spam filtering, sentiment analysis, and medical diagnosis.*] ] --- # Independence -- .pull-left-wide[ - Recall the definition of independence between two events `\(A\)` and `\(B\)`: `$$P(A \cap B) = P(A) \cdot P(B)$$` ] -- .pull-left-wide[ - Now suppose that the event `\(A\)` is represented by `\(X = x\)` and the event `\(B\)` by `\(Y = y\)` ] -- .pull-left-wide[ - We can then write the independence condition as: `$$P(X = x \text{ and } Y = y) = P(X = x) \cdot P(Y = y)$$` ] -- .pull-left-wide[ - But note that, by definition: - the left hand side is the joint probability function `\(f(x, y)\)` - the right hand side is the product of marginal probability functions `\(f_X(x)\)` and `\(f_Y(y)\)` ] --- # Independence -- .pull-left-wide[ > Two discrete random variables `\(X\)` and `\(Y\)` are **independent** if and only if: > `$$f(x, y) = f_X(x) \cdot f_Y(y)$$` > for all `\(x\)` and `\(y\)`. This implies that: > > 1. `\(f_{X|Y}(x | y) = f_X(x)\)` for all values `\(y\)` such that `\(f_Y(y) > 0\)` > > 2. `\(f_{Y|X}(y | x) = f_Y(y)\)` for all values `\(x\)` such that `\(f_X(x) > 0\)` ] -- .pull-left-wide[ - Examples: tossing a coin twice, rolling a dice twice ] --- # .red[Practice: Joint, marginal, conditional] .pull-left-wide[ Using the table below, | | `\(X = 0\)` | `\(X = 1\)` | |---|:---:|:---:| | `\(Y = 0\)` | 0.25 | 0.15 | | `\(Y = 1\)` | 0.35 | 0.25 | 1. 
Compute `\(f_X(1)\)` and `\(f_Y(0)\)`. 2. Compute `\(f_{X|Y}(1|0)\)`. 3. Check whether `\(X\)` and `\(Y\)` are independent. ] --- class: inverse, middle, center # Continuous random variables --- # Continuous random variables -- .pull-left-wide[ - Imagine that your company wants to predict its output next year ] -- .pull-left-wide[ - It knows that it will produce between 10 and 20 tons of concrete, but it can be *any* number between 10 and 20 (with equal probability) ] -- .pull-left-wide[ - What is the probability that it will produce *exactly* 15 tons? Basically zero - if it produces 14.999999 or 15.000001, this is not exactly 15 - it would be extremely hard to get to exactly 15 tons ] -- .pull-left-wide[ - Using the same argument, the probability of producing *exactly* any particular quantity is zero `\(\Rightarrow\)` the concept of probability function does not make sense ] -- .pull-left-wide[ - Since continuous random variables have an uncountable number of values, we cannot use the exact same concepts as in the case of discrete random variables ] --- # Cumulative distribution function -- .pull-left-wide[ > The **cumulative distribution function** `\(F(\cdot)\)` of a continuous random variable `\(X\)` is defined as: > `$$F(x) = P(X \leq x)$$` ] -- .pull-left-wide[ - This is the same definition as in the case of a discrete random variable ] -- .pull-left-wide[ - However, note that in this case it does not make a difference if the inequality is strict or not: `$$P(X \leq x) = P(X < x \text{ or } X = x) = P(X < x) + P(X = x) = P(X < x)$$` because `\(P(X = x) = 0\)` ] --- # Cumulative distribution function .center[  ] --- # Probability density function -- .pull-left-wide[ > The **probability density function** `\(f(\cdot)\)` of a continuous random variable `\(X\)` is defined as: > `$$f(x) = \frac{dF(x)}{dx}$$` ] -- .pull-left-wide[ - the area under the probability density function is always equal to one: `$$\int_{-\infty}^\infty f(x) \, dx = 1$$` ] -- 
.pull-left-wide[ - the cumulative distribution function is the integral of the probability density function: `$$F(x) = \int_{-\infty}^x f(z) \, dz$$` ] --- # Note: Summation vs. Integration .pull-left[ - In the case of discrete random variables, we had: `$$P(X \leq x) = \sum_{x_i \leq x} f(x_i)$$` - Here we have: `$$P(X \leq x) = \int_{-\infty}^x f(z) \, dz$$` *These are essentially the same. As you know from calculus, integration is essentially continuous summation. This is one of the reasons you needed to learn it.* ] --- # Example -- .pull-left-wide[ - In our example, the likelihood of any value between 10 and 20 is the same ] -- .pull-left-wide[ - In other words, the value of the density function is the same for all values of `\(x\)` between 10 and 20: `$$f(x) = c$$` ] -- .pull-left-wide[ - We can then use the properties of the probability density function to calculate the exact value of `\(c\)`: `$$\int_{-\infty}^\infty f(x) \, dx = \int_{10}^{20} c \, dx = (20 - 10) c = 1 \; \Rightarrow \; c = 0.1$$` ] -- .pull-left-wide[ - We can now calculate the cumulative distribution function: `$$F(x) = \int_{-\infty}^x f(z) \, dz = \int_{10}^x 0.1 \, dz = 0.1 (x - 10)$$` for all `\(x\)` such that `\(10 \leq x \leq 20\)` ] --- # Example -- .pull-left-wide[ - Now we can write the probability density function explicitly: `$$f(x) = \begin{cases} 0, & \text{if } x < 10 \\ 0.1, & \text{if } 10 \leq x \leq 20 \\ 0, & \text{if } x > 20 \end{cases}$$` ] -- .red[Note: *The output is a density, not a probability. 
The probability of any particular value is zero.*] -- .pull-left-wide[ - The cumulative distribution function is: `$$F(x) = \begin{cases} 0, & \text{if } x < 10 \\ 0.1(x - 10), & \text{if } 10 \leq x \leq 20 \\ 1, & \text{if } x > 20 \end{cases}$$` ] --- # Probability density function .center[  ] --- # Probability density and cumulative distribution functions .center[  ] --- # Probability density and cumulative distribution functions .center[  ] --- # .red[Practice: Continuous random variables] .pull-left-wide[ Assume `\(X \sim U[10,20]\)`. 1. Write the density `\(f(x)\)`. 2. Compute `\(P(12 \leq X \leq 16)\)`. 3. Compute `\(F(18)\)`. 4. Explain why `\(P(X=15)=0\)` even though 15 is in the support. ] --- # .red[Raise your hand: The density function] <div class="countdown" id="timer_d9a0e14a" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">01</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">00</span></code> </div> -- .pull-left-wide[ **Q1.** `\(X \sim U[0, 0.5]\)`. What is the value of `\(f(x)\)` for `\(x=0.05\)`? - **A)** 0.5 — the upper bound of the support - **B)** 1 — `\(f\)` is probability-related, so it can't exceed 1 - **C)** 2 — the PDF must integrate to 1 over an interval of length 0.5 ] -- .pull-left-wide[ **Q2.** `\(X\)` is a continuous random variable. What is `\(P(X \leq 5) - P(X < 5)\)`? 
- **A)** `\(f(5)\)` — read off the density at 5 - **B)** `\(F(5)\)` — the cumulative probability up to 5 - **C)** 0 — single-point probability is always zero for continuous RVs ] --- class: inverse, middle, center # Relationships between continuous random variables --- # Joint probability density -- .pull-left-wide[ - We can use the same notions defined in the case of discrete random variables, but replacing the probability function with the probability density function and summations with integrals ] -- .pull-left-wide[ > The **joint probability density function** `\(f(\cdot, \cdot)\)` for two continuous random variables `\(X\)` and `\(Y\)` is written as `\(f(x, y)\)`. ] -- .pull-left-wide[ - Since this is a probability density function, the area under it must "sum up" to one: `$$\int_{-\infty}^\infty \int_{-\infty}^\infty f(x, y) \, dx \, dy = 1$$` ] --- # Marginal probability density -- .pull-left-wide[ > The **marginal probability density function** `\(f_X(\cdot)\)` of a continuous random variable `\(X\)` is defined as: > `$$f_X(x) = \int_{-\infty}^\infty f(x, y) \, dy$$` ] -- .pull-left-wide[ - Since this is a probability density function, the area under it must "sum up" to one: `$$\int_{-\infty}^\infty f_X(x) \, dx = 1$$` ] --- # Conditional probability density -- .pull-left-wide[ > The **conditional probability density function** `\(f_{X|Y}(x|y)\)` of a continuous random variable `\(X\)` given that `\(Y = y\)` is defined as: > `$$f_{X|Y}(x | y) = \frac{f(x, y)}{f_Y(y)} \text{ if } f_Y(y) > 0.$$` ] -- .pull-left-wide[ - Again, the area under this function must "sum up" to one because it is a probability density function: `$$\int_{-\infty}^\infty f_{X|Y}(x | y) \, dx = 1$$` for all `\(y\)` such that `\(f_Y(y) > 0\)` ] --- # Bayes' theorem -- .pull-left-wide[ - Bayes' theorem applies to the continuous case in a similar way to the discrete case ] -- .pull-left-wide[ > **Bayes' theorem:** > > 1. 
`\(f_{X|Y}(x | y) = f_{Y|X}(y | x) \cdot \displaystyle\frac{f_X(x)}{f_Y(y)}\)` > > 2. `\(f_{X|Y}(x | y) = f_{Y|X}(y | x) \cdot \displaystyle\frac{f_X(x)}{\displaystyle\int_{-\infty}^{\infty} f_{Y|X}(y | z) f_X(z) \, dz}\)` ] --- # Independence -- .pull-left-wide[ - Finally, the concept of independence is similar in the continuous case to the discrete case ] -- .pull-left-wide[ > Two continuous random variables `\(X\)` and `\(Y\)` are **independent** if and only if: > `$$f(x, y) = f_X(x) \cdot f_Y(y)$$` > for all `\(x\)` and `\(y\)`. This implies that: > > 1. `\(f_{X|Y}(x | y) = f_X(x)\)` for all values `\(y\)` such that `\(f_Y(y) > 0\)` > > 2. `\(f_{Y|X}(y | x) = f_Y(y)\)` for all values `\(x\)` such that `\(f_X(x) > 0\)` ] --- # .red[Practice: Continuous relationships] .pull-left-wide[ Let `$$f(x,y)=2, \quad 0<y<x<1,$$` and `\(f(x,y)=0\)` otherwise. 1. Find `\(f_X(x)\)`. 2. Find `\(f_{Y|X}(y|x)\)` for `\(0<y<x<1\)`. 3. Are `\(X\)` and `\(Y\)` independent? Justify. ] --- # .red[Raise your hand: Joint and marginal densities] <div class="countdown" id="timer_8cca172c" data-update-every="1" tabindex="0" style="top:TRUE;right:0;"> <div class="countdown-controls"><button class="countdown-bump-down">−</button><button class="countdown-bump-up">+</button></div> <code class="countdown-time"><span class="countdown-digits minutes">01</span><span class="countdown-digits colon">:</span><span class="countdown-digits seconds">00</span></code> </div> -- .pull-left-wide[ **Q1.** You have the joint PDF `\(f(x, y)\)`. How do you find the marginal PDF `\(f_X(x)\)`? - **A)** Set `\(y = x\)`: `\(\quad f_X(x) = f(x,\, x)\)` - **B)** Integrate out `\(y\)`: `\(\quad f_X(x) = \displaystyle\int_{-\infty}^{\infty} f(x, y)\, dy\)` - **C)** Differentiate: `\(\quad f_X(x) = \partial f(x, y) / \partial y\)` ] -- .pull-left-wide[ **Q2.** You verify that `\(f(x,y) = f_X(x) \cdot f_Y(y)\)` for all `\(x\)` and `\(y\)`. What follows? 
- **A)** `\(X\)` and `\(Y\)` have the same marginal distribution - **B)** `\(X\)` and `\(Y\)` are independent - **C)** The conditional density `\(f_{X|Y}(x|y)\)` does not exist ]
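
---

# Sketch: marginals and independence, numerically

The definitions above can be checked numerically. The sketch below uses a hypothetical joint density, `\(f(x,y) = x + y\)` on the unit square (an assumption chosen for illustration, not the practice example), and a simple midpoint rule. The function names are illustrative.

```python
def joint(x, y):
    """Hypothetical joint density: f(x, y) = x + y on [0, 1] x [0, 1], zero elsewhere."""
    return x + y if 0.0 <= x <= 1.0 and 0.0 <= y <= 1.0 else 0.0

def integrate(g, lo, hi, n=200):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(g(lo + (i + 0.5) * h) for i in range(n))

def marginal_x(x):
    """f_X(x): integrate y out of the joint density."""
    return integrate(lambda y: joint(x, y), 0.0, 1.0)

def marginal_y(y):
    """f_Y(y): integrate x out of the joint density."""
    return integrate(lambda x: joint(x, y), 0.0, 1.0)

# The joint density should integrate to one.
total = integrate(marginal_x, 0.0, 1.0)

# Independence requires f(x, y) = f_X(x) * f_Y(y) at every point.
x0, y0 = 0.2, 0.2
gap = joint(x0, y0) - marginal_x(x0) * marginal_y(y0)
print(total, marginal_x(x0), gap)
```

Here the marginal works out to `\(f_X(x) = x + \tfrac{1}{2}\)`, and the product of the marginals differs from the joint density at `\((0.2, 0.2)\)`, so these hypothetical `\(X\)` and `\(Y\)` are not independent. A single point where the factorisation fails is enough to rule independence out.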